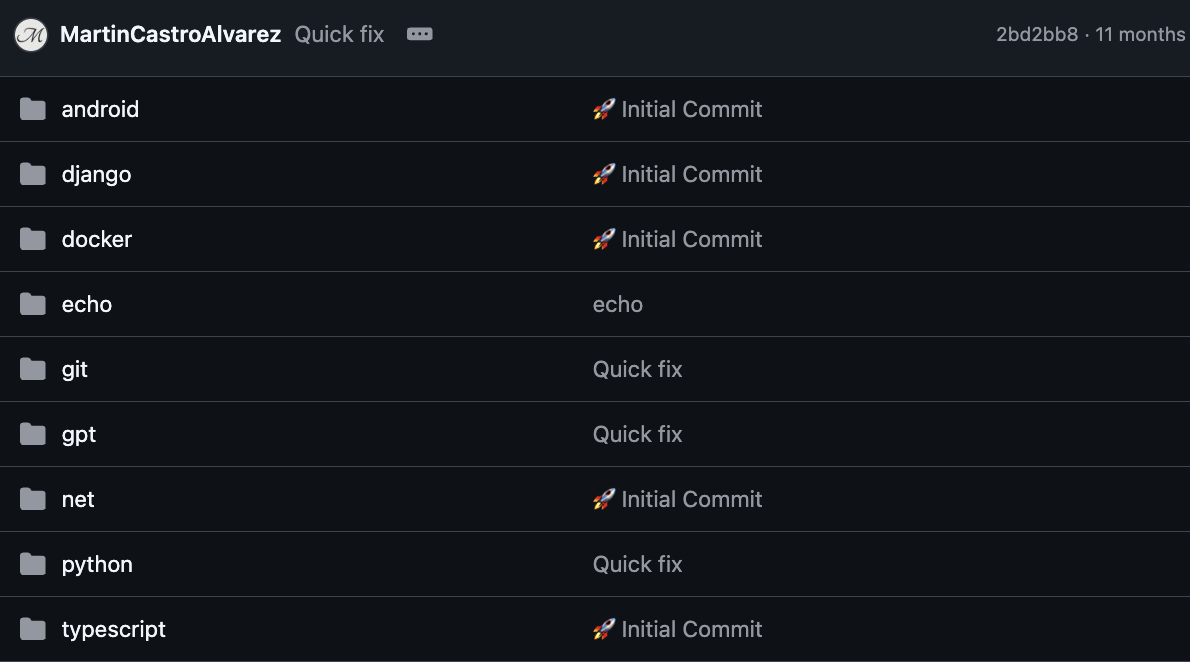

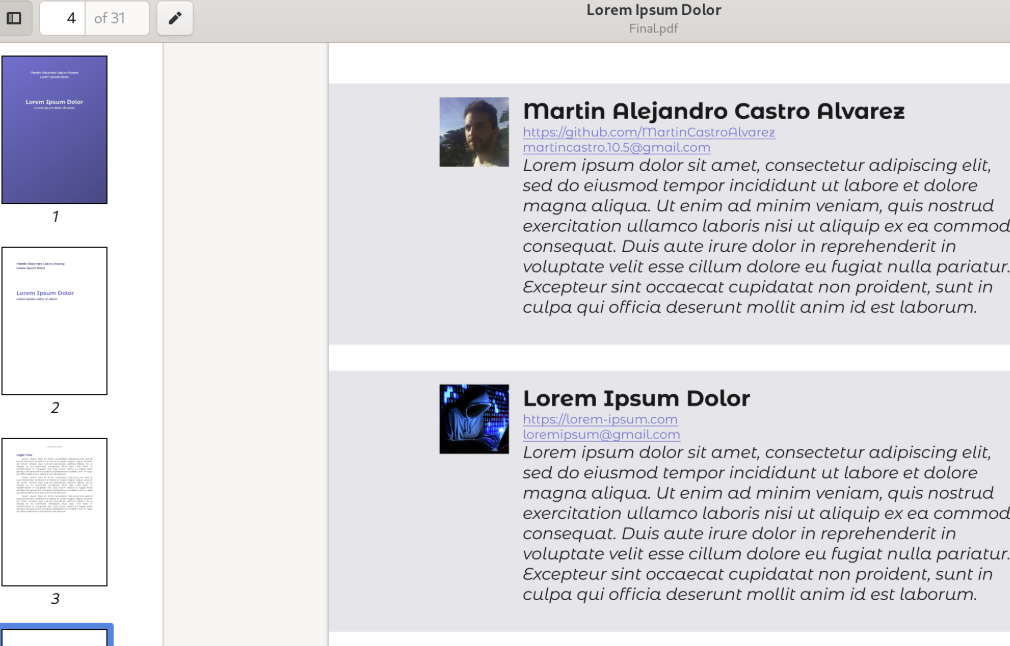

Martín Alejandro Castro Álvarez

Software Engineer

martincastro.10.5@gmail.comhttps://martincastroalvarez.comhttps://github.com/MartinCastroAlvarezhttps://www.linkedin.com/in/martincastroalvarez/Martín Alejandro Castro Álvarez, Software Engineer

martincastro.10.5@gmail.com

https://martincastroalvarez.com

https://github.com/MartinCastroAlvarez

https://www.linkedin.com/in/martincastroalvarez/

I am an experienced software engineer with a passion for building scalable, AI-powered solutions. My proven track record includes leading cross-functional teams and delivering production-grade systems across multiple industries.

I specialize in full-stack development, cloud infrastructure, and machine learning. My strong background includes Python, JavaScript, and modern web technologies.

I have extensive experience leading technical teams and architecting production systems across AI, cloud infrastructure, and full-stack development domains.

I am a good fit because I combine technical expertise with leadership experience, enabling me to deliver scalable solutions while mentoring teams and driving technical excellence.

I am an experienced software engineer with a passion for building scalable, AI-powered solutions. My proven track record includes leading cross-functional teams and delivering production-grade systems across multiple industries.

I specialize in full-stack development, cloud infrastructure, and machine learning. My strong background includes Python, JavaScript, and modern web technologies.

I have extensive experience leading technical teams and architecting production systems across AI, cloud infrastructure, and full-stack development domains.

I am a good fit because I combine technical expertise with leadership experience, enabling me to deliver scalable solutions while mentoring teams and driving technical excellence.

Skills

Team Leadership

MentoringProduct DesignCode ReviewCross-functionalRoadmappingTechnical DecisionsTeam ProductivityConflictArchitecture Design

System ArchitectureMicroservicesEvent-DrivenData PipelinesMicrofrontendsDistributed SystemsAPI DesignScalabilityBackend

DjangoNodeRustFastAPIFlaskPythonWarpJavaFrontend

ReactGraphQLVueJSMicrofrontendsNodeReduxSvelteAngularTypeScriptWeb3

SolidityRustEthereumweb3.jsethers.jsAlchemyIPFSMetaMaskFoundryAnvilSmart ContractsNFTsOpenZeppelinData Engineering

Streaming de datosSparkCassandraNoSQLRedshiftBigQueryAWS RDSAWS S3LookerTableauAWS ElastiCacheKafkaETLSQLAWS DynamoDBElasticsearchSnowflakeAWS KinesisGoogle Cloud SQLGoogle Cloud StorageMetabaseAmazon NeptuneAWS AthenaDevOps

DockerLinuxVPCAWS CDKAnsibleCloud MonitoringAWS API GatewayRabbitMQCeleryGitLab CIAWS CodePipelineKubernetesAWS CloudWatchTerraformPulumiShellAWS LambdaGoogle Pub/SubAWS SQSGitHub ActionsCircleCIAI

LangChainOpenAI Agent SDKPyTorchPandasRAGSpaCyOpenCVGoogle ADKNumPyKerasTensorFlowEmbeddingsGensimStreamlitTesting

jestpytestbehaveplaywrightseleniumvitestunittestlocustcypressLanguages

SpanishEnglish2024 - Today · AI Tech Lead · Laminr

San Francisco, US

Laminr is an innovative AI company specializing in developing advanced agent-based solutions to automate complex business processes and workflows.

I established a comprehensive mentorship program focused on knowledge transfer to engineering team members, translating complex business requirements into executable technical tasks. I conducted regular educational meetings, live-coding sessions, pair-programming workshops, and coding bootcamps to foster team collaboration and accelerate skill development across the organization.

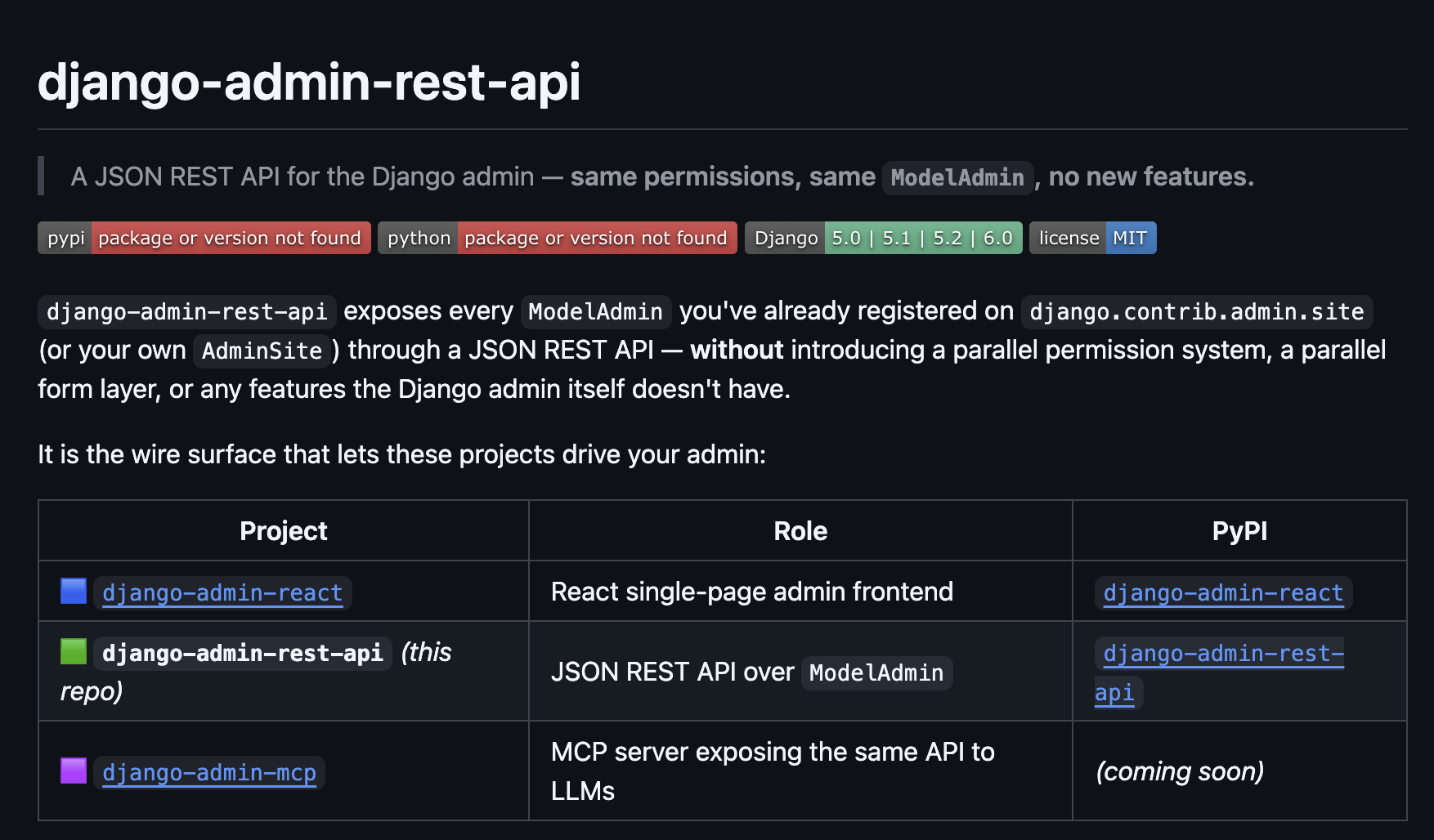

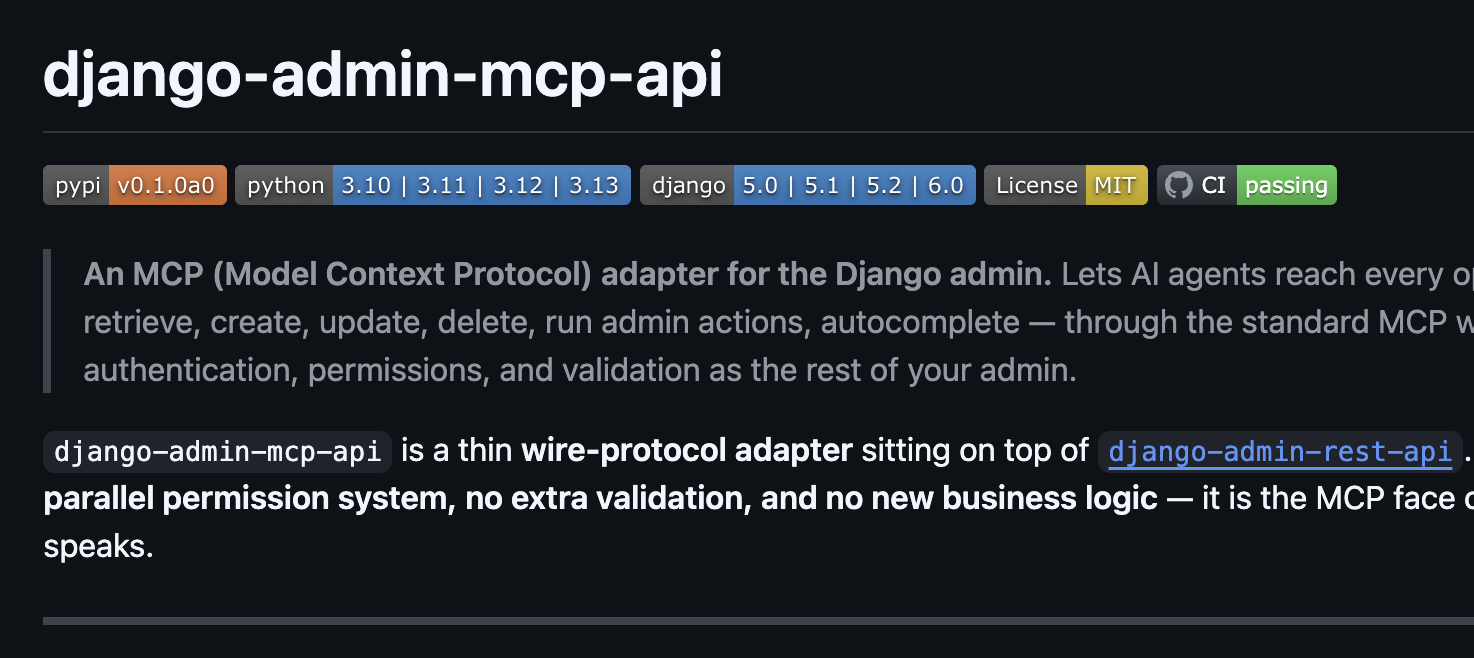

I architected and implemented advanced LLM agents with OKR tracking and Computer Vision capabilities for automated document processing. I developed end-to-end pipelines for image-to-text data extraction using state-of-the-art models. I integrated multi-modal AI systems with business workflows for seamless automation. I utilized Model Context Protocol (MCP) and Google Agent Development Kit (ADK) for tool integrations and agent orchestration.

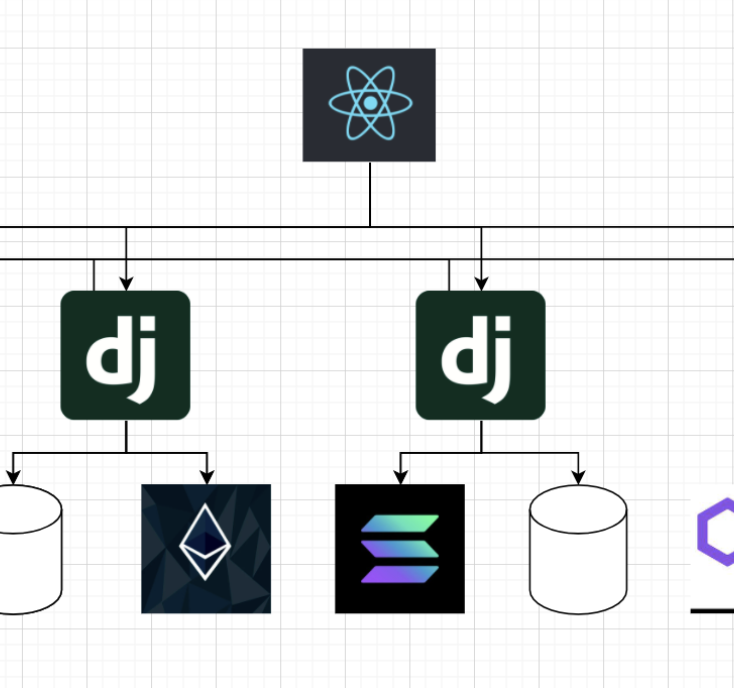

I led the design and deployment of scalable AI automation platforms, applying full-stack expertise in Django and React for production-grade systems. I engineered cloud infrastructure with business intelligence dashboards (Metabase) to drive operational insights.

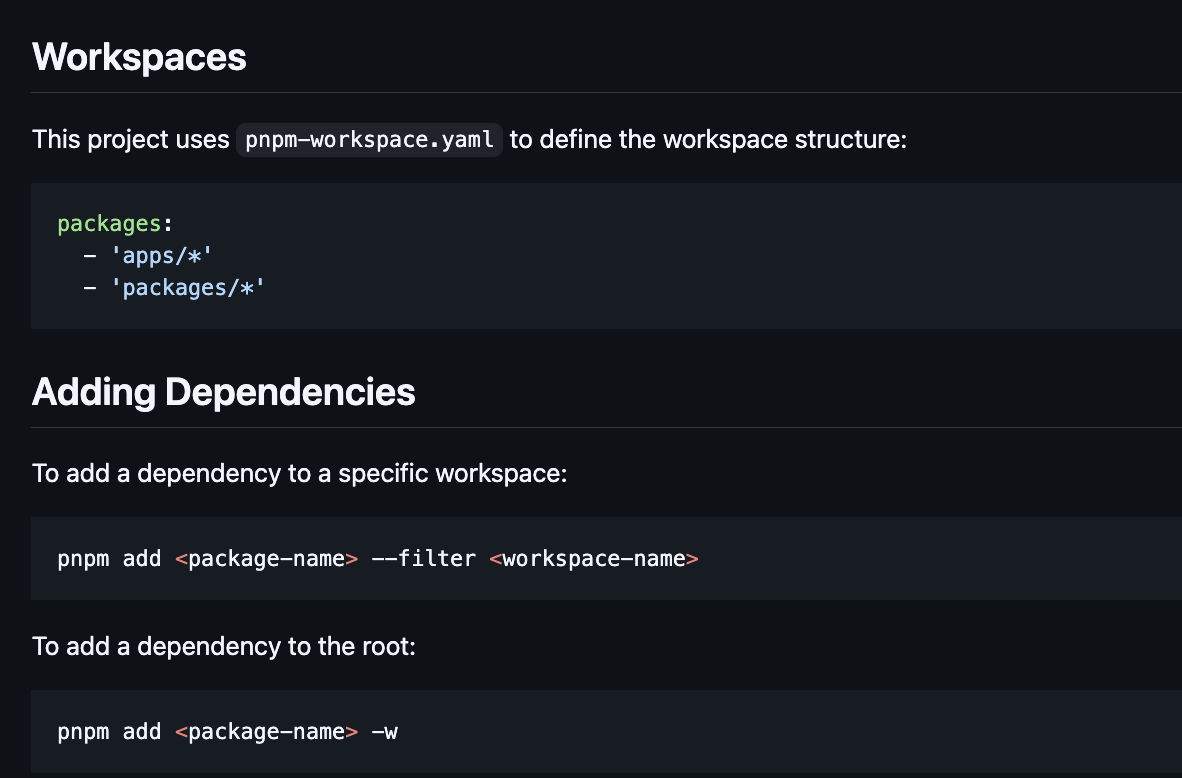

I architected and implemented modern, responsive web applications using React Workspaces with PNPM monorepo setup, Zustand for state management, and React Query for efficient data fetching. I leveraged TypeScript for type safety and Tailwind CSS for rapid UI development, ensuring maintainable and scalable frontend architecture across multiple packages.

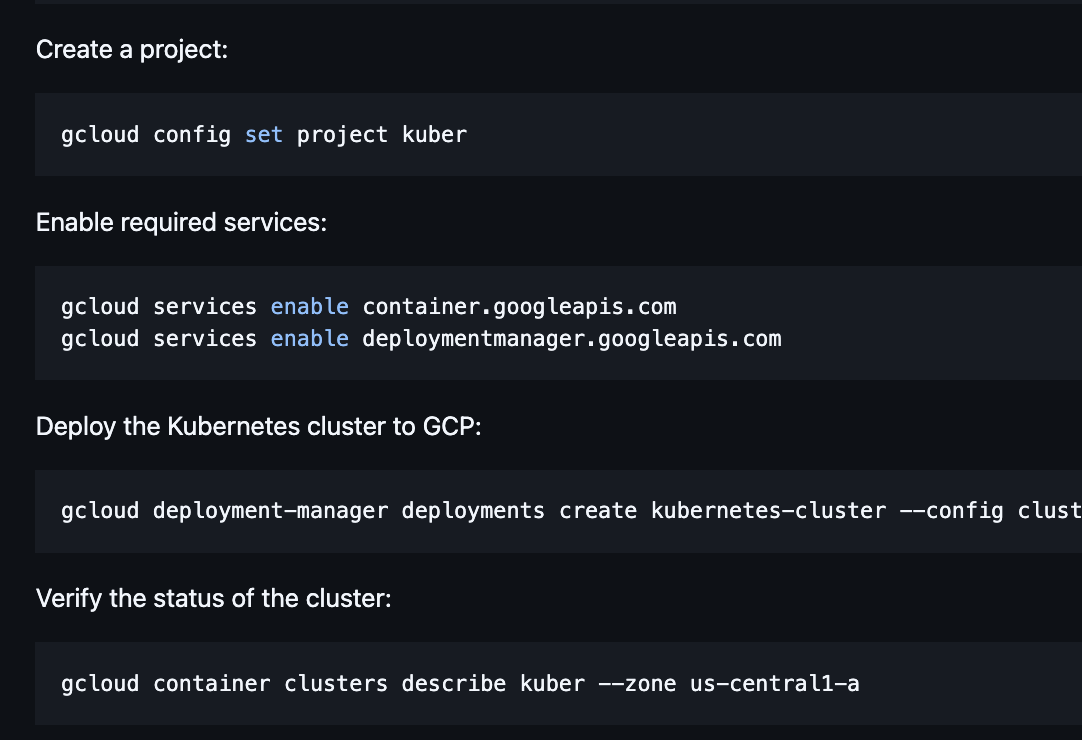

I architected and implemented cloud-native infrastructure using Pulumi for IaC, deploying services on GCP (Cloud Run, GKE, Cloud SQL, Memorystore Redis) with zero-downtime deployments. I orchestrated containerized applications on Google Kubernetes Engine (GKE) — Google Cloud's managed Kubernetes service that automates cluster provisioning, autoscaling, upgrades, and security patching — using it to run scalable microservices with horizontal pod autoscaling, node auto-provisioning, workload identity for secure GCP access, and rolling deployments across multi-zone clusters. I established CI/CD pipelines and infrastructure automation for seamless deployments and scaling.

I built a comprehensive QA framework with Playwright for E2E testing and integrated monitoring solutions (Datadog, Sentry) for real-time observability and incident response. I implemented automated testing pipelines and established monitoring best practices for production systems.

I led a mixed-style team (high-autonomy builders and risk-focused reviewers) by setting clear interfaces, ownership boundaries, and decision logs—so execution stayed parallel and predictable.

I built a mentorship and knowledge-transfer program (pair programming, live-coding, bootcamps) to level up engineers with very different working styles and reduce onboarding time.

I turned ‘what could go wrong’ concerns into concrete mitigations (tests, monitoring, rollout plans), reducing incidents while maintaining shipping cadence.

I achieved a 40% reduction in onboarding time for new engineers and increased team velocity by 25% within 6 months through structured knowledge transfer.

I reduced document processing time by 80% (from 5 minutes to 1 minute per document) and increased automation coverage from 30% to 85% of business workflows within 4 months.

I increased API response time by 60% (from 500ms to 200ms average) and reduced infrastructure costs by 35% through optimized database queries and caching strategies.

I reduced bundle size by 45% and improved page load time by 50% (from 3.2s to 1.6s) through code splitting and lazy loading optimizations.

I achieved 99.9% uptime and reduced deployment time from 45 minutes to 8 minutes (82% reduction) through automated CI/CD pipelines and infrastructure as code.

I reduced production incidents by 70% and decreased mean time to resolution (MTTR) from 4 hours to 45 minutes through comprehensive test coverage and proactive monitoring.

Tech Stack: Product Requirements, Technical Leadership, Live-coding, Pair-programming, Technical Workshops, LLM Agents, Computer Vision, OCR, Document Processing, LangChain, PyTorch, TensorFlow, AI Agents, Django, React, AI Agents, Metabase, React, PNPM, Monorepo, Zustand, React Query, Axios, TypeScript, Tailwind, Pulumi, Cloud Run, GKE, Kubernetes, Cloud SQL, Redis, Google Cloud Monitoring, CI/CD, Infrastructure as Code (IaC), Google Cloud Platform (GCP), Playwright, Sentry, Datadog, E2E, Cloud Monitoring, Automated Tests

2022 - 2024 · Blockchain Engineer · Makersplace

San Francisco, US

MakersPlace is a digital creation platform powered by blockchain, enabling creators to sell unique digital artwork.

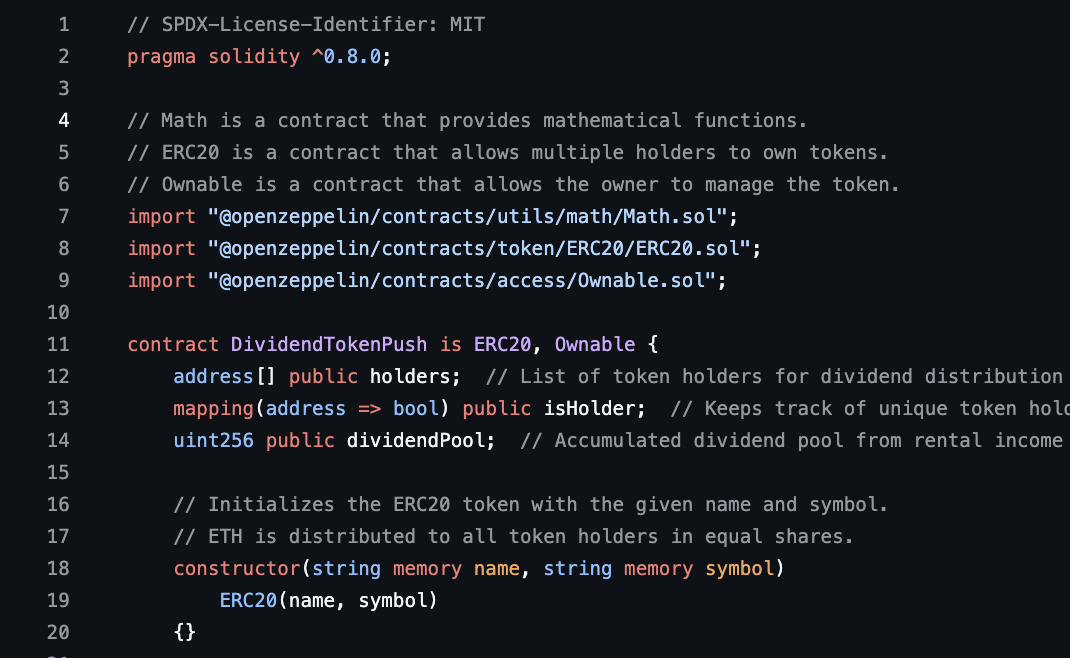

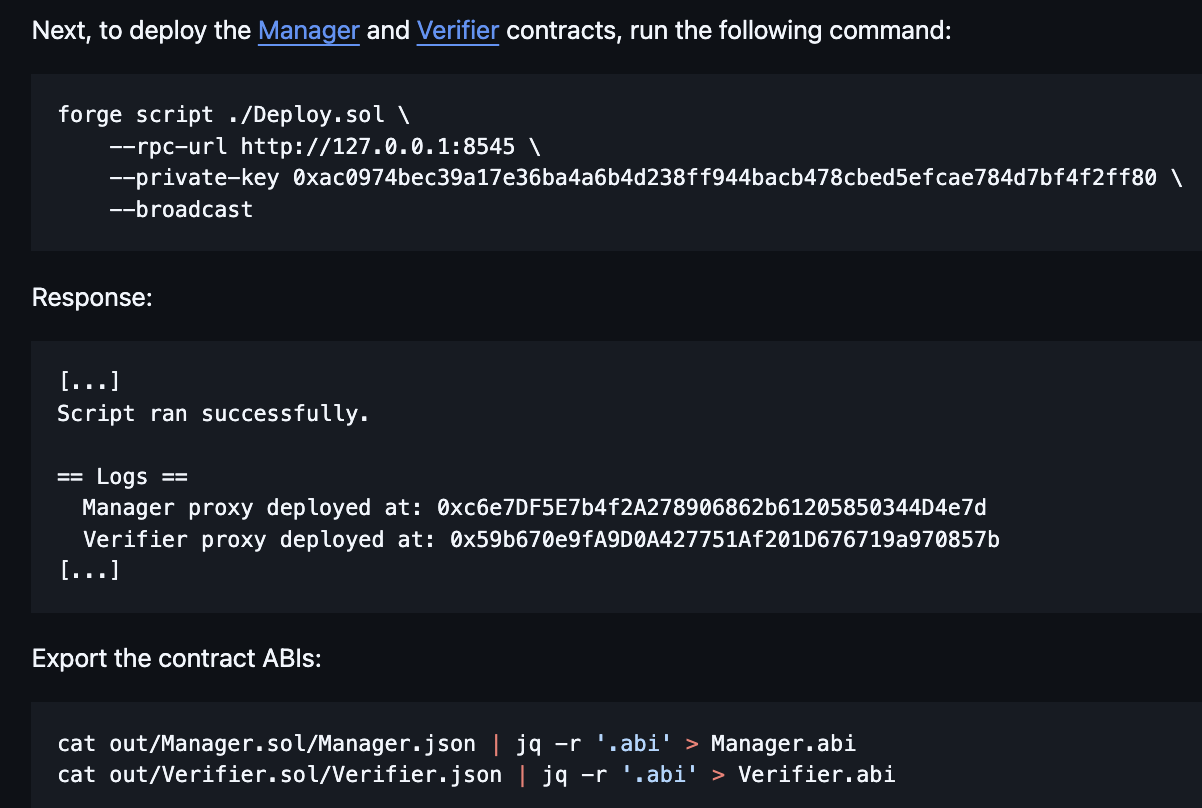

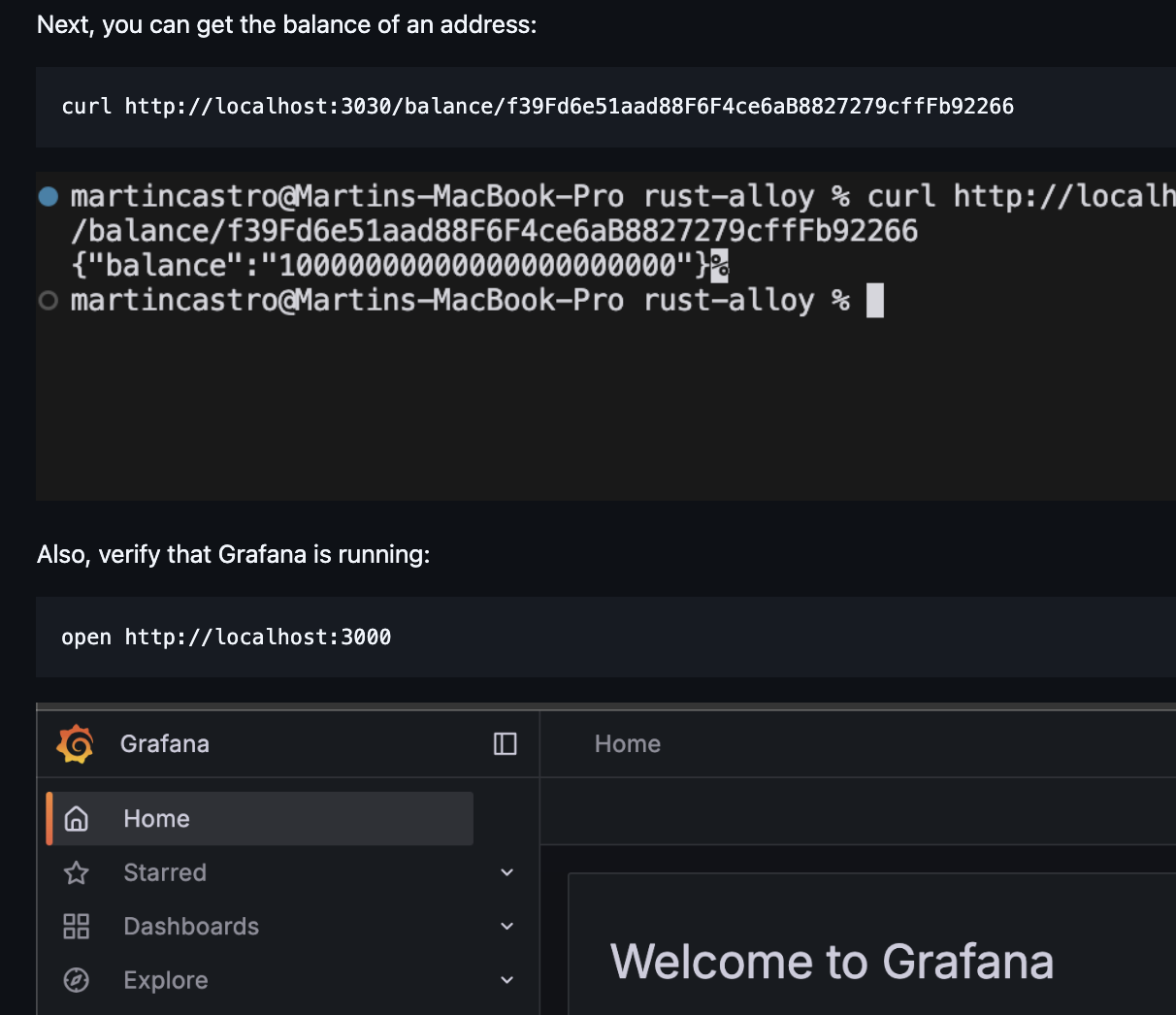

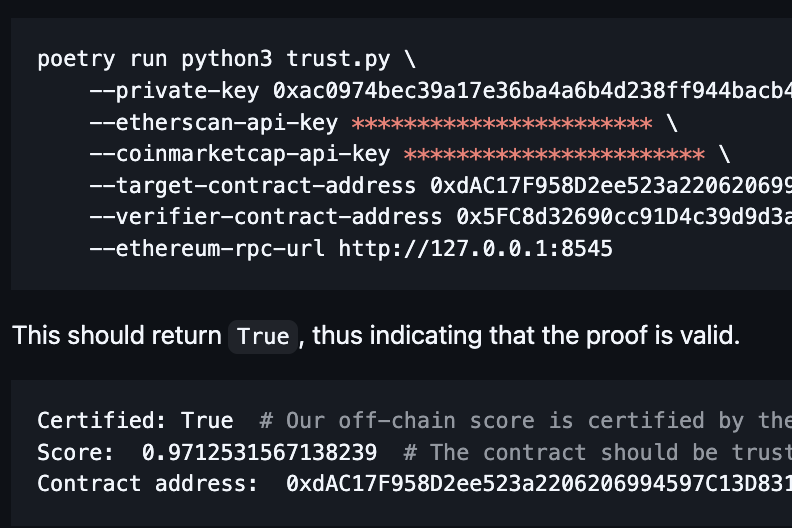

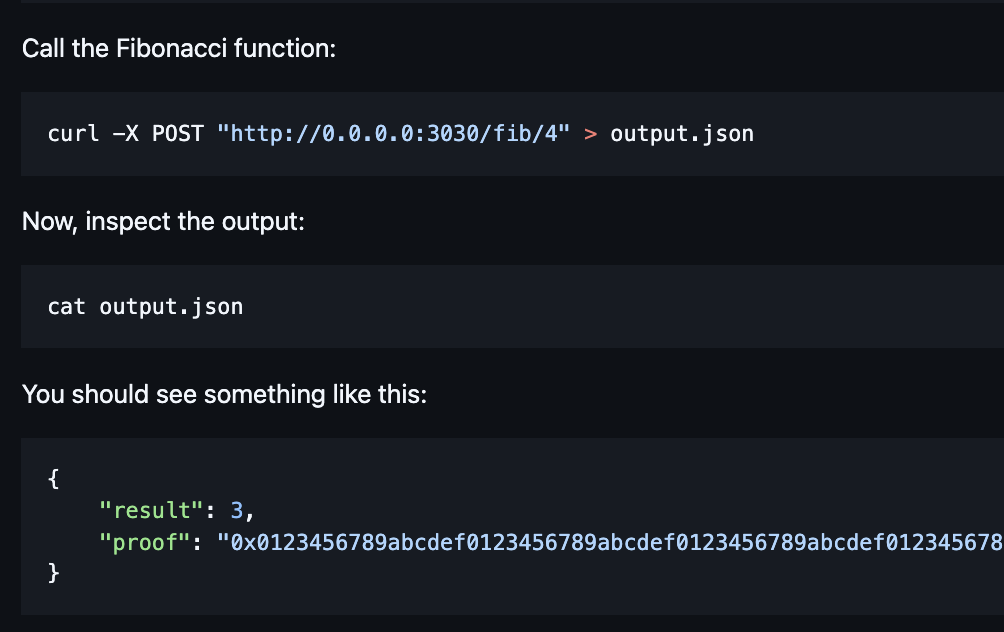

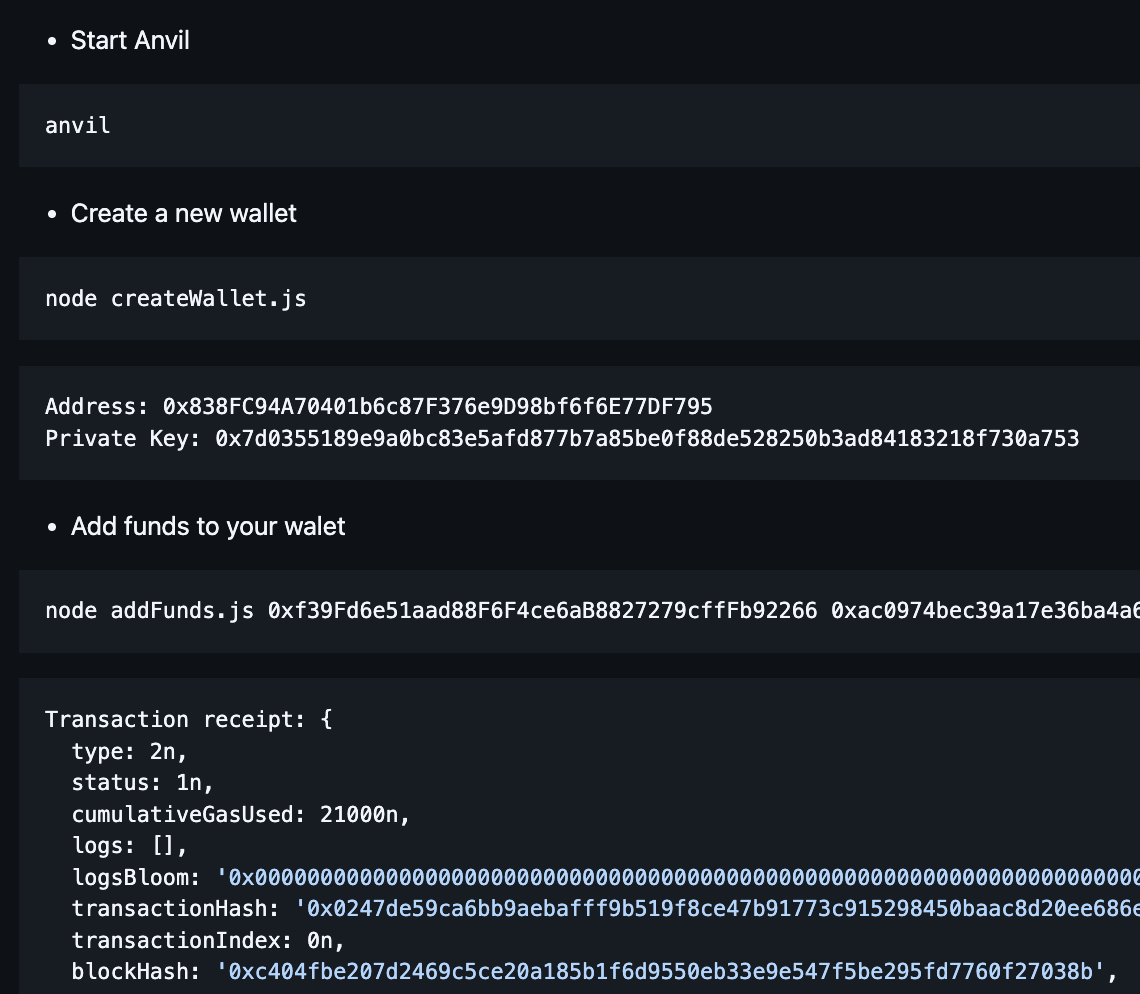

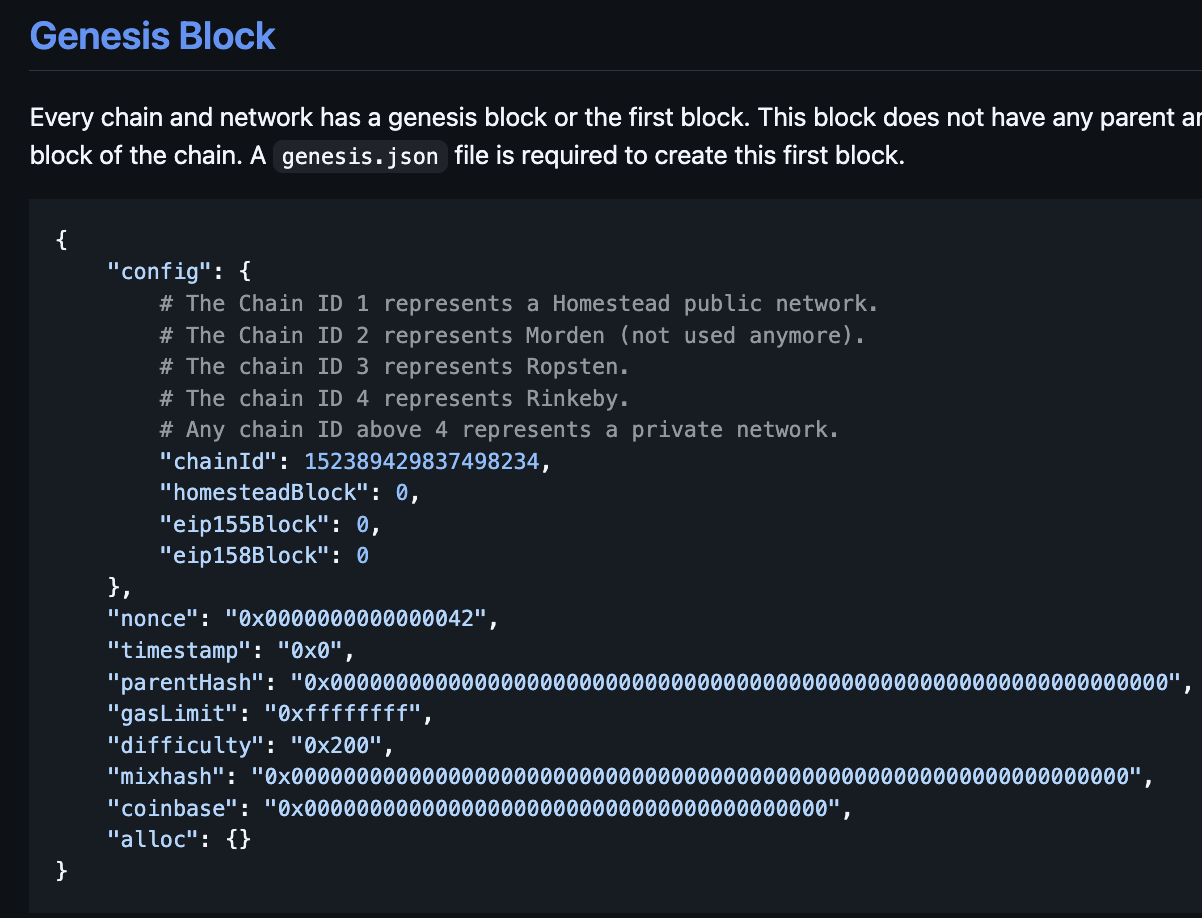

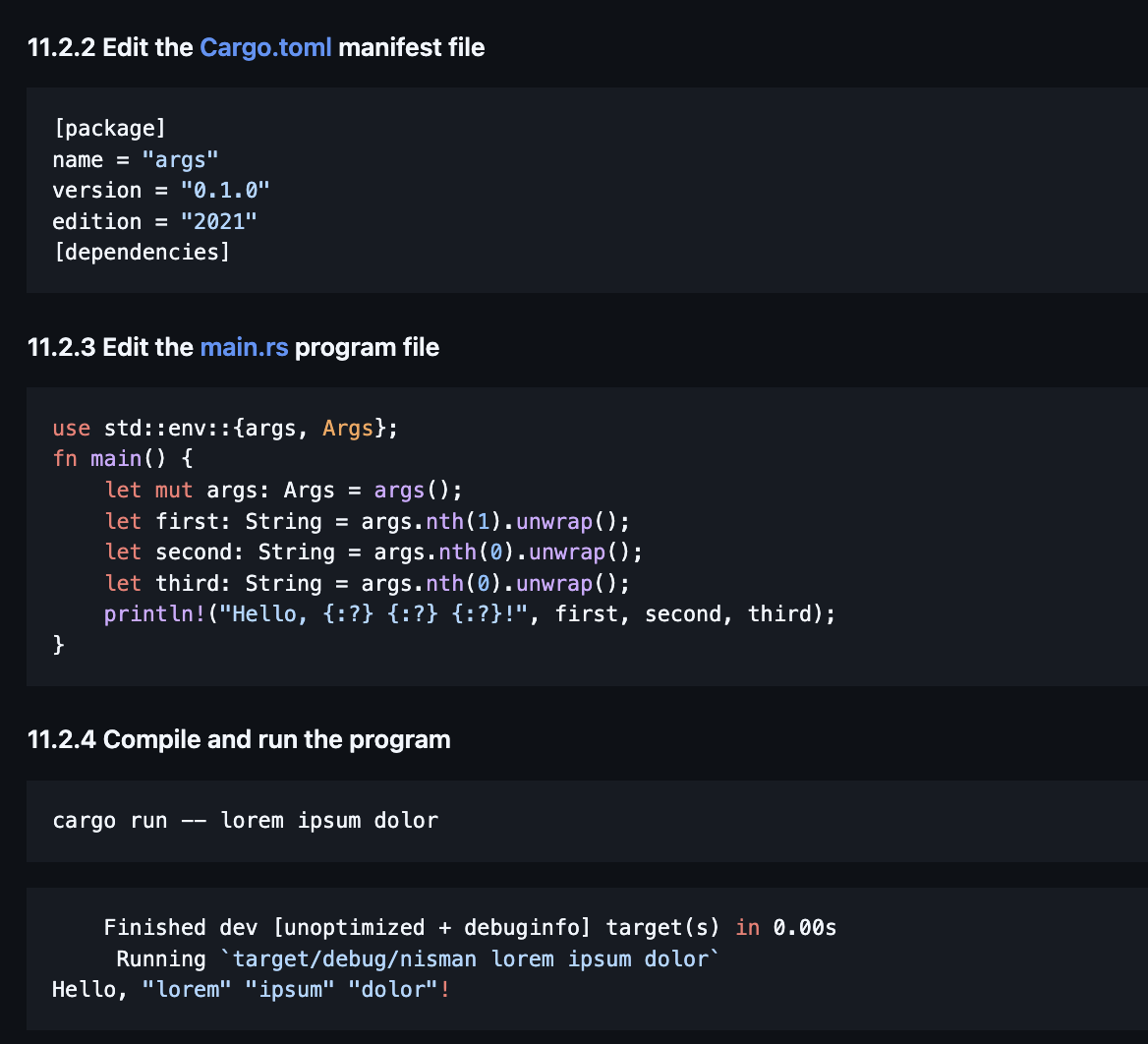

I built end-to-end digital asset infrastructure integrating Django backends with Solidity smart contracts and Rust-based logic for Web3 protocols. I led NFT and phygital asset deployments using web3.js, Alchemy, and IPFS, integrating smart contracts with full-stack applications. I partnered with cross-functional teams (marketing, sales) to launch blockchain-based digital campaigns that increased user engagement and retention.

I diagnosed and resolved critical failures in blockchain workflows, including transaction validation, IPFS metadata syncing, and dynamic gas optimization. I designed fault-tolerant microservices for real-time blockchain transaction monitoring and distributed data pipelines.

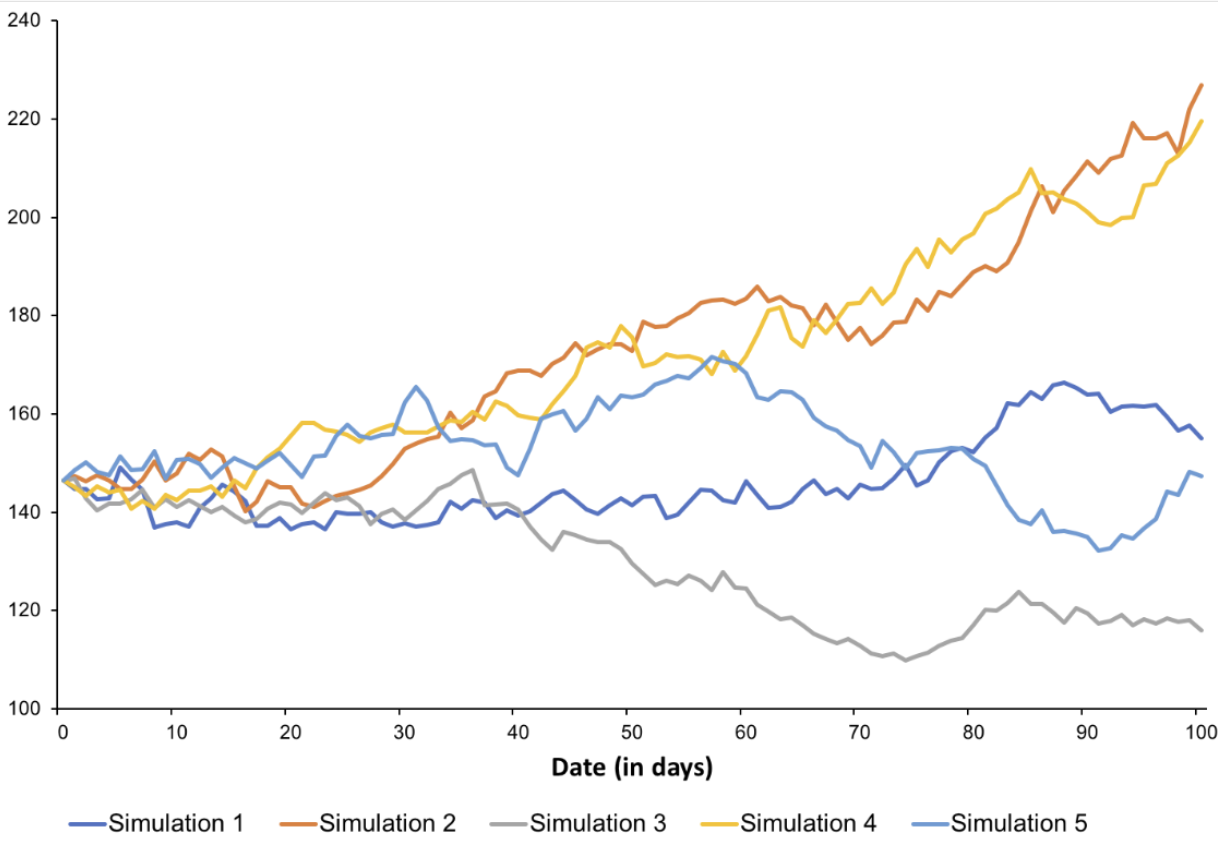

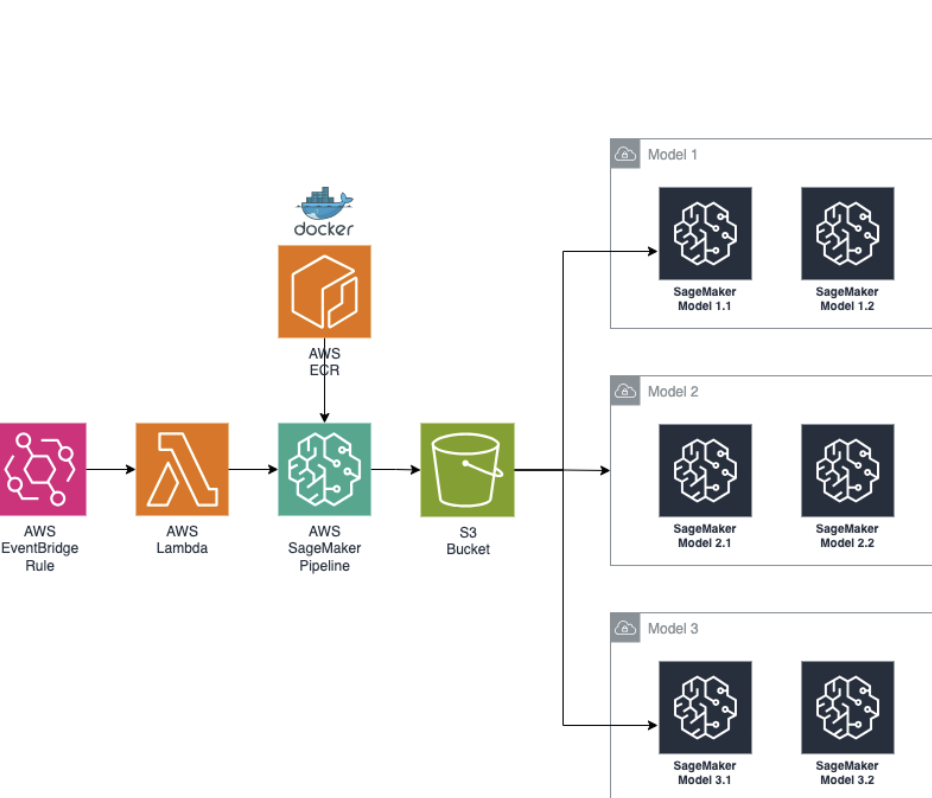

I developed production-grade MLOps pipeline on AWS for scalable model lifecycle management, leveraging Docker, Kubernetes, and SageMaker. I enabled model versioning and continuous monitoring for production ML workflows, ensuring data drift detection and model rollback.

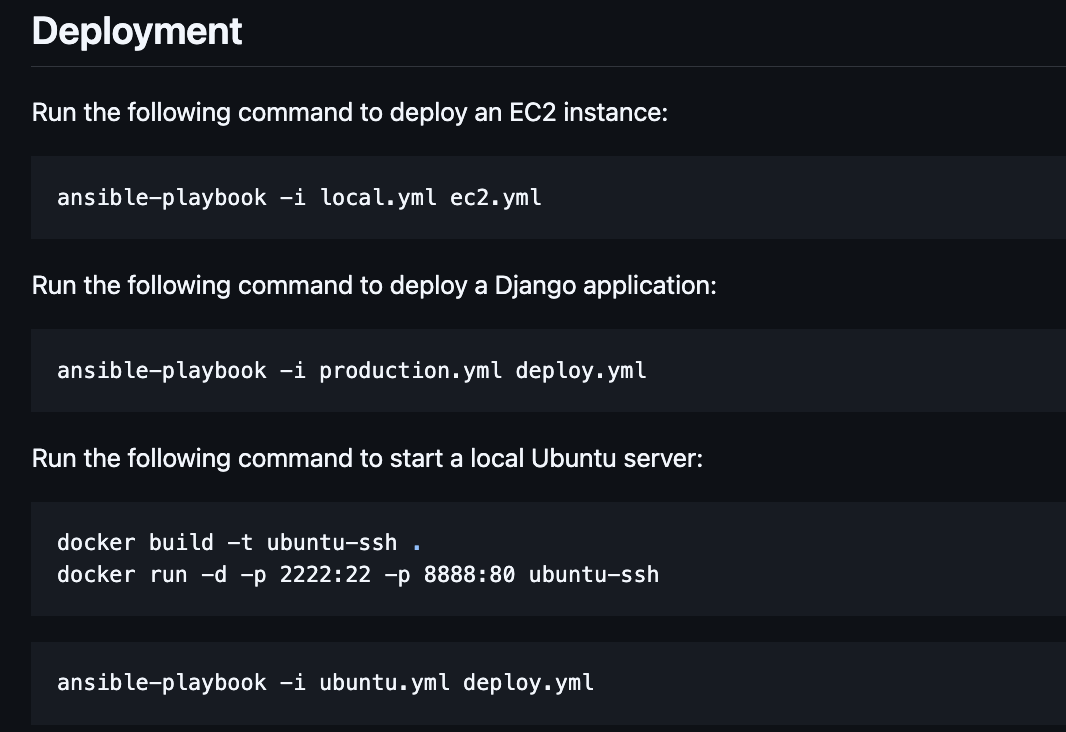

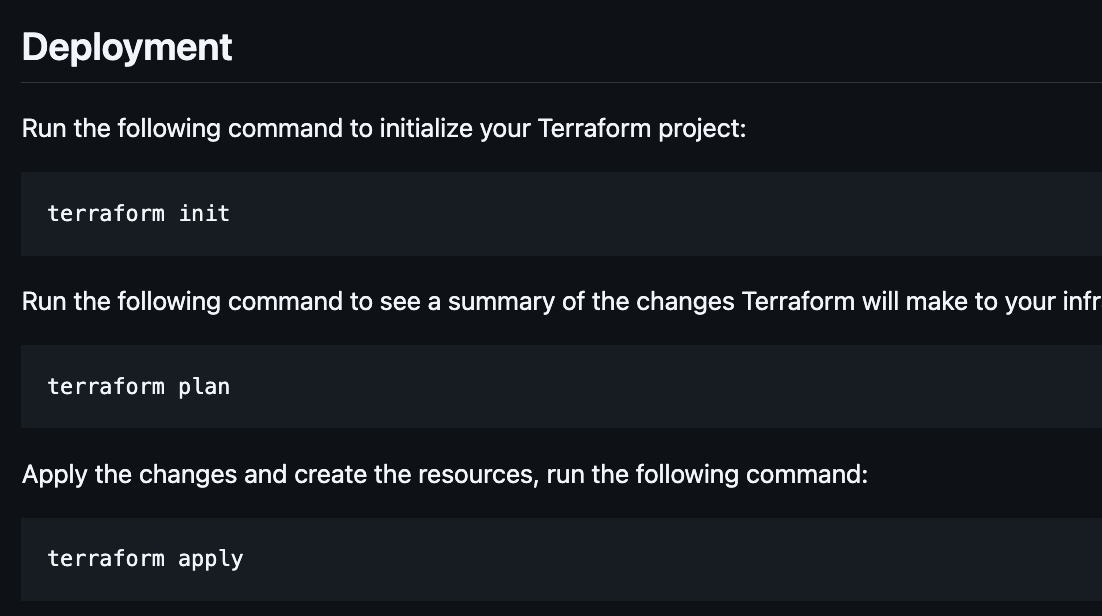

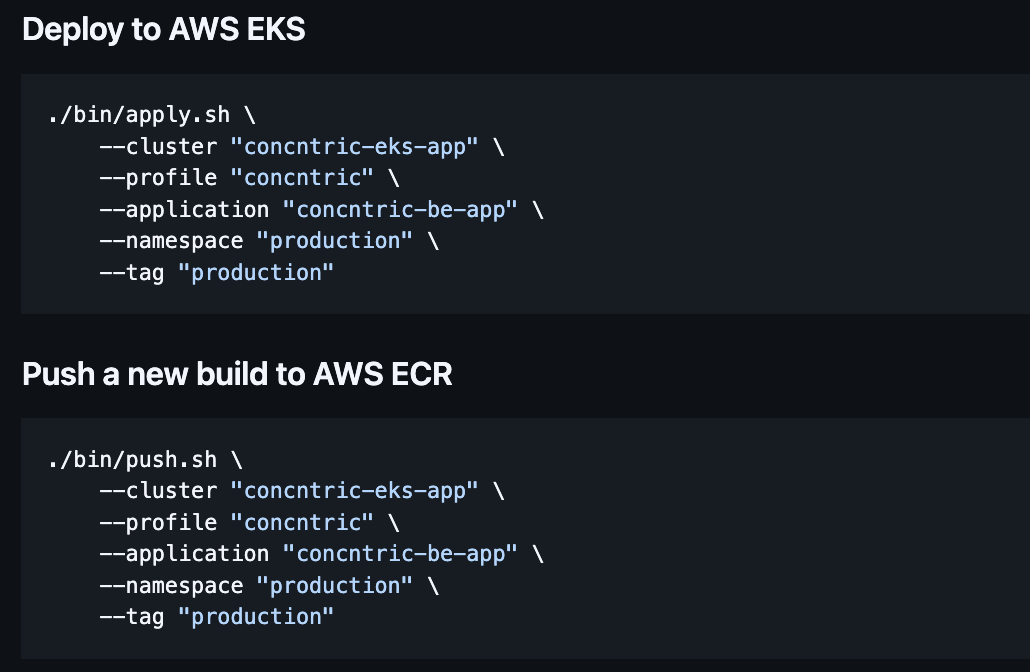

I automated deployment infrastructure using AWS CDK and CI/CD pipelines (GitHub Actions, Elastic Beanstalk, ECR), achieving zero-downtime rollouts. I dockerized applications and managed deployment environments using Elastic Beanstalk, ECR, RDS, and Opensearch.

I directed enterprise-scale data migration to GCP BigQuery, optimizing ETL pipelines with Data Fusion for low-latency analytics. I enabled real-time data access for business intelligence.

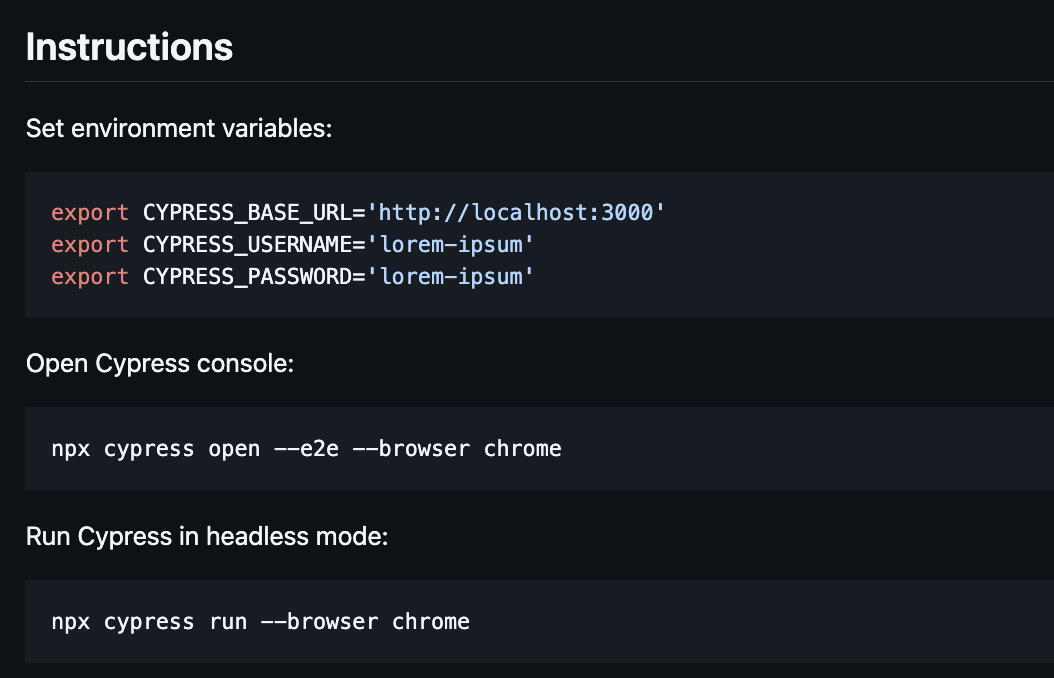

I built full-spectrum test automation suite with Cypress, PyTest, and integration testing frameworks — enforced zero-regression policies pre-launch. I validated digital drops and NFT-related product features to ensure high quality and zero regression.

I delivered under aggressive launch timelines by making explicit tradeoffs (guardrails, rollback plans, monitoring) that protected reliability while meeting drop deadlines.

I partnered cross-functionally (marketing/sales + engineering) to ship blockchain features fast, while keeping transaction integrity high through automation and fault-tolerant services.

I increased transaction success rate from 85% to 98% and reduced gas costs by 40% through optimized smart contract design and dynamic gas pricing strategies.

I reduced system downtime by 90% (from 2% to 0.2% monthly) and improved transaction processing throughput by 3x through fault-tolerant architecture and optimized data pipelines.

I reduced model deployment time from 2 weeks to 2 days (90% reduction) and improved model accuracy monitoring coverage from 40% to 95% through automated MLOps pipelines.

I achieved 100% zero-downtime deployments and reduced infrastructure provisioning time from 4 hours to 15 minutes (94% reduction) through infrastructure as code and automated CI/CD.

I reduced data processing latency by 75% (from 4 hours to 1 hour) and decreased data warehouse costs by 50% through optimized ETL pipelines and query optimization.

I increased test coverage from 45% to 92% and reduced regression bugs in production by 85% through comprehensive automated testing.

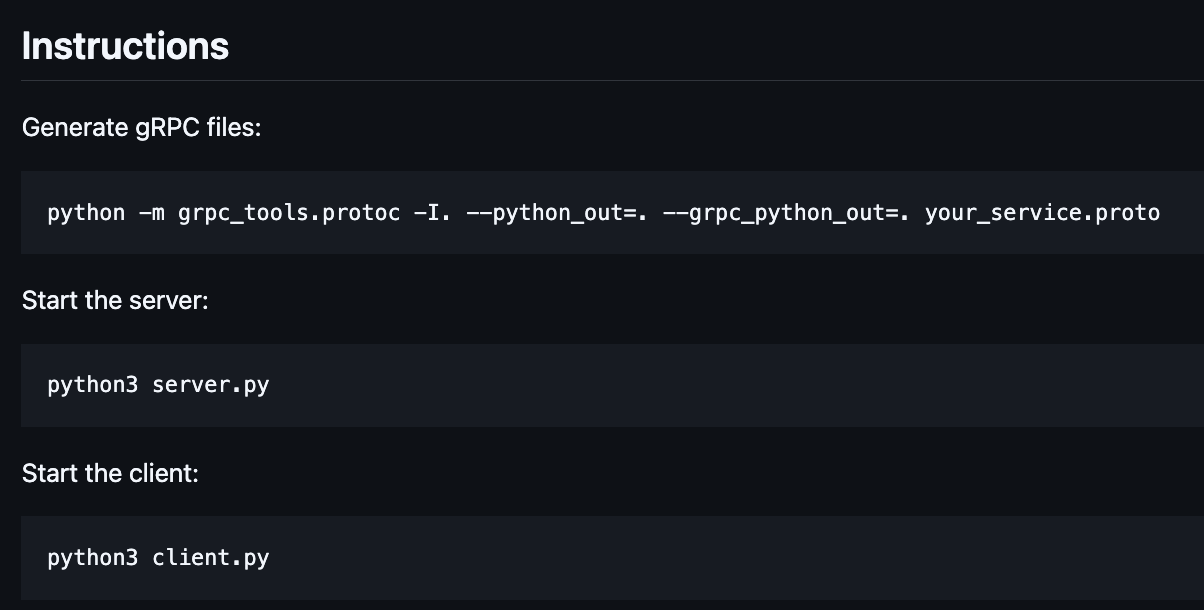

Tech Stack: web3.js, ethers.js, Alchemy, Moralis, Ethereum, Etherscan, IPFS, Solidity, Rust, MetaMask, Coinbase, Wallet Connect, Solscan, Royalty Registry, Python, Django, Celery, Unit Tests, Airflow, AWS SageMaker, TensorFlow, MLOps, gRPC, Docker, Kubernetes, AWS CDK, AWS Elastic Beanstalk, AWS ECR, AWS RDS, AWS Opensearch, AWS Elasticache, AWS S3, AWS Cloudfront, AWS DMS, BigQuery, Data Fusion, Data Streams, Cypress, Unit Tests, Integration Tests, Functional Tests

2020 - 2022 · Data Engineer · Rings AI

San Francisco, US

An AI-powered platform for opportunity intelligence through relationship data.

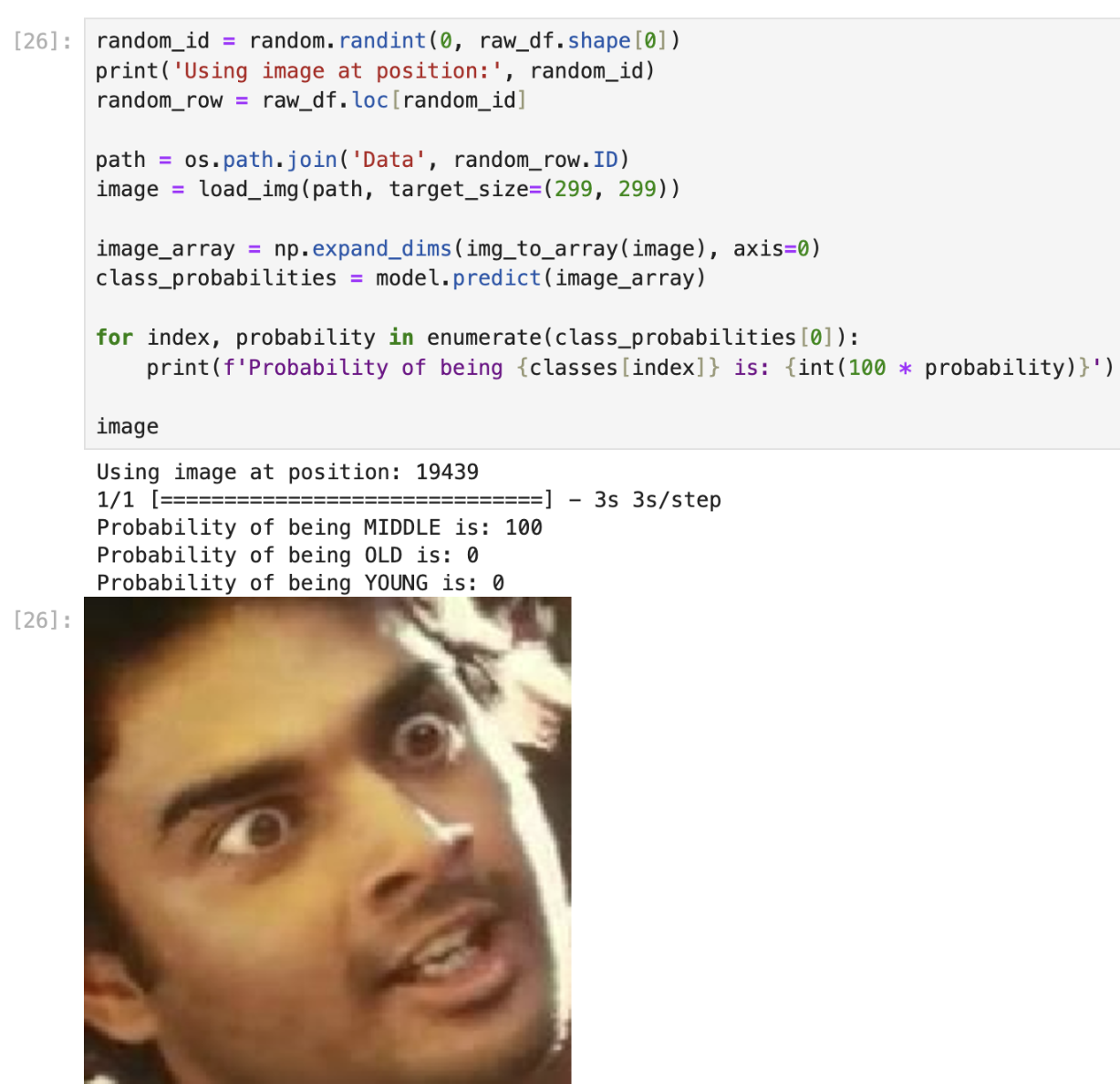

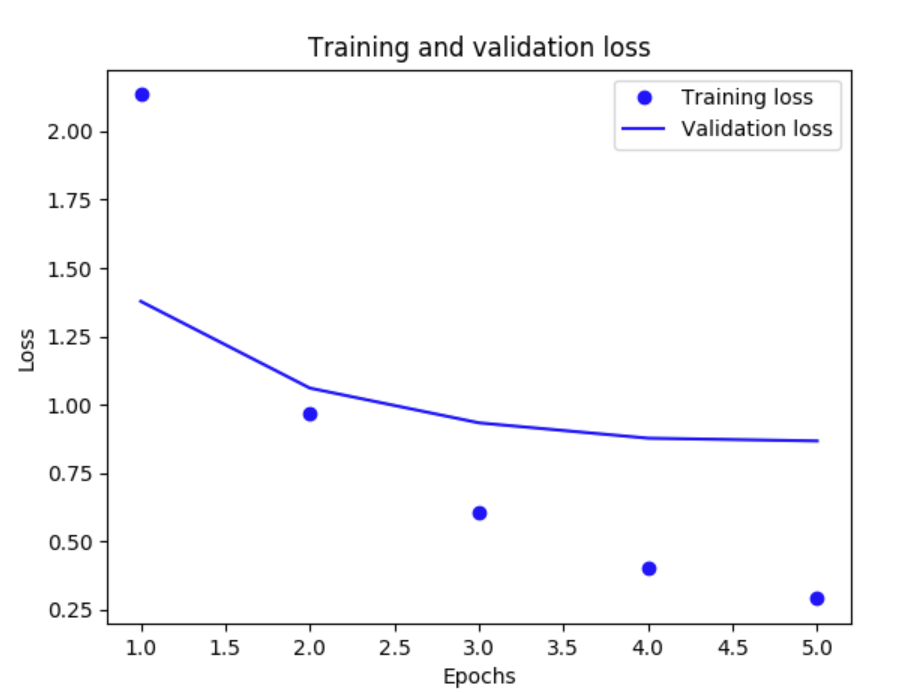

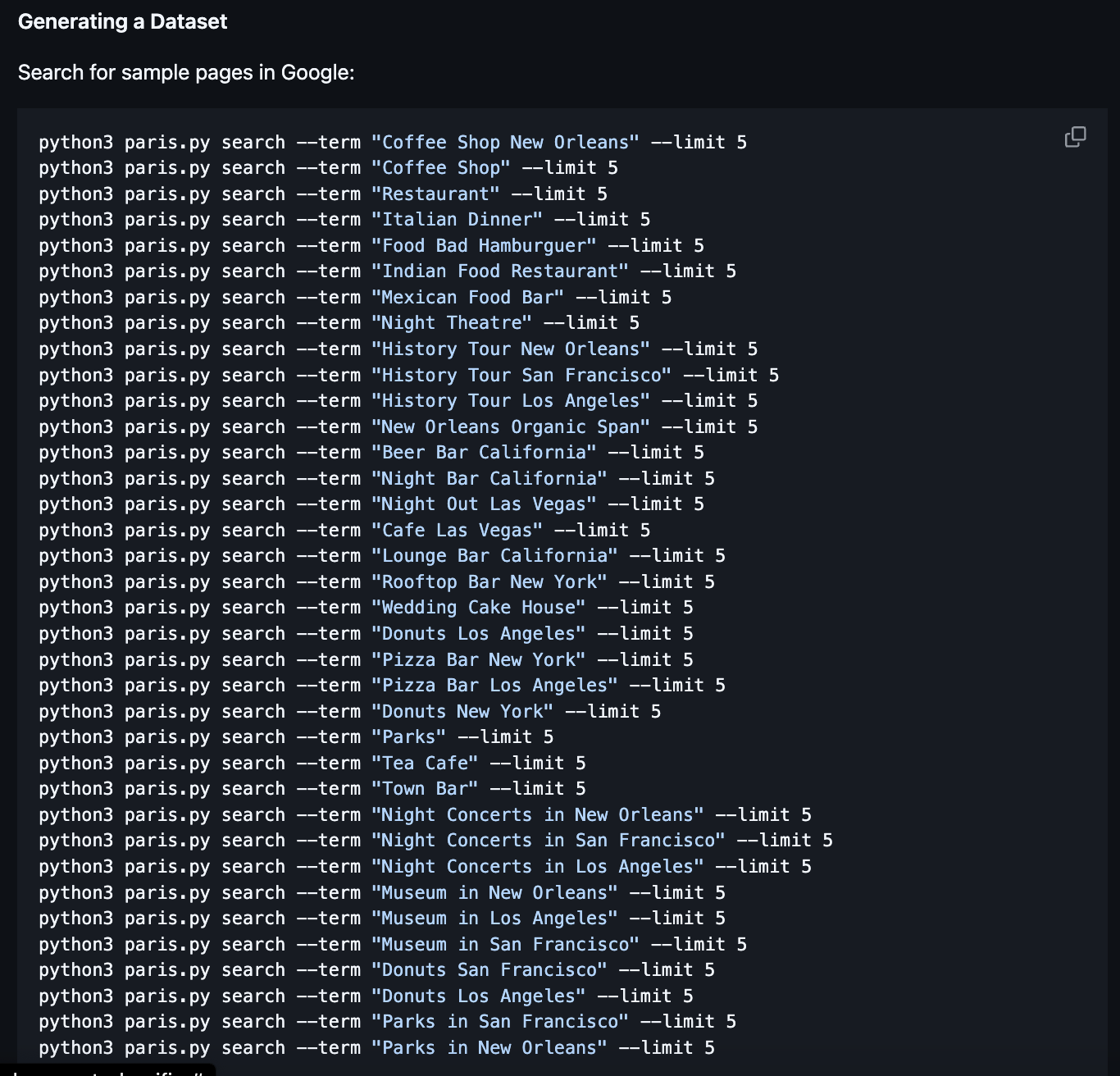

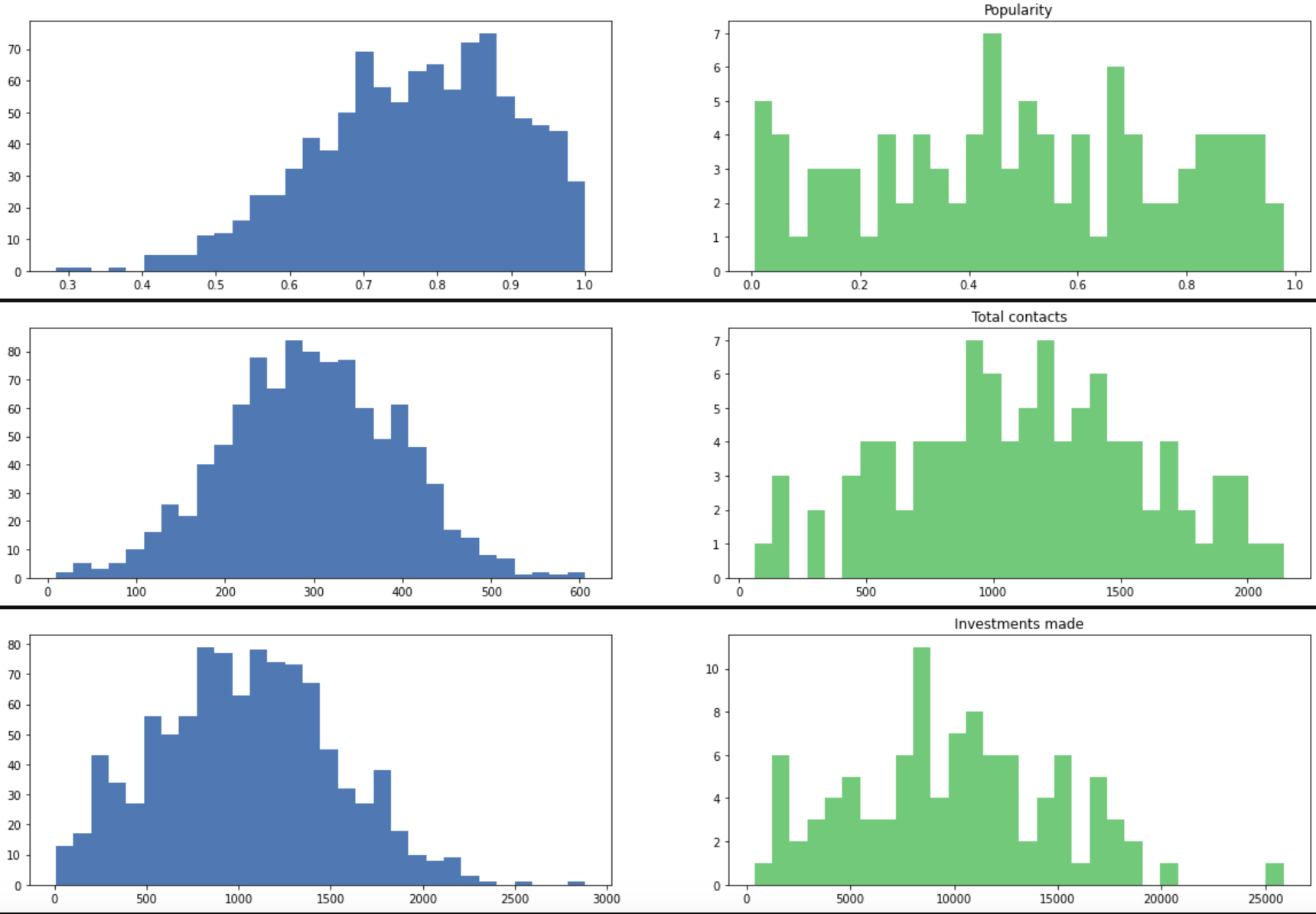

I architected and developed a custom CRM platform designed to improve outreach effectiveness using AI-driven insights from network relationship data. I implemented intelligent dataset enrichment by integrating multiple external data sources, enabling personalized outreach strategies and opportunity intelligence. I built machine learning models to analyze relationship patterns and predict optimal engagement approaches. I integrated computer vision capabilities for automated profile image analysis and document processing to enhance contact data quality.

I built real-time distributed graph algorithm in Spark for relationship path analysis. I streamlined data materialization using AWS Glue, SQS, and ETL processes.

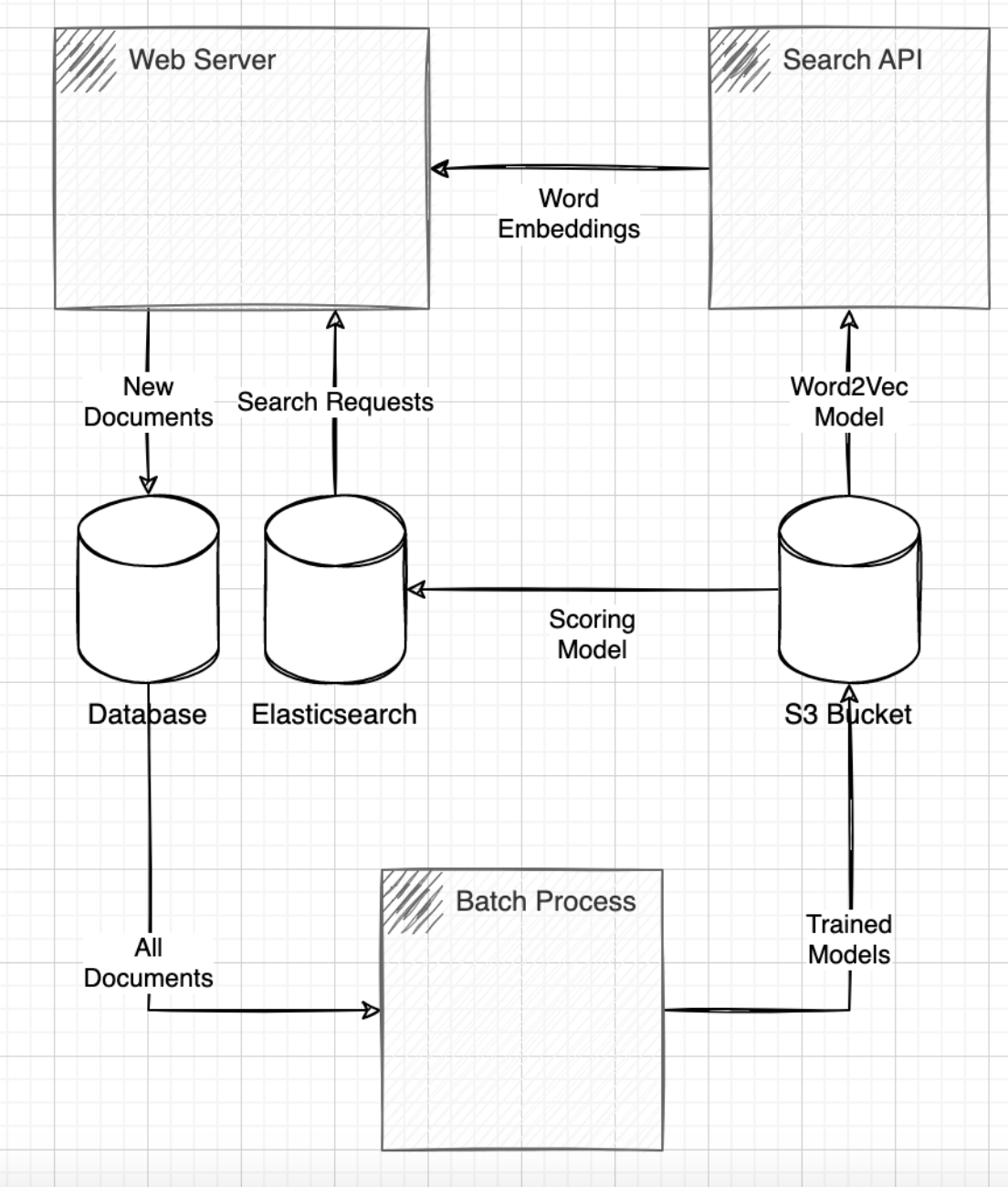

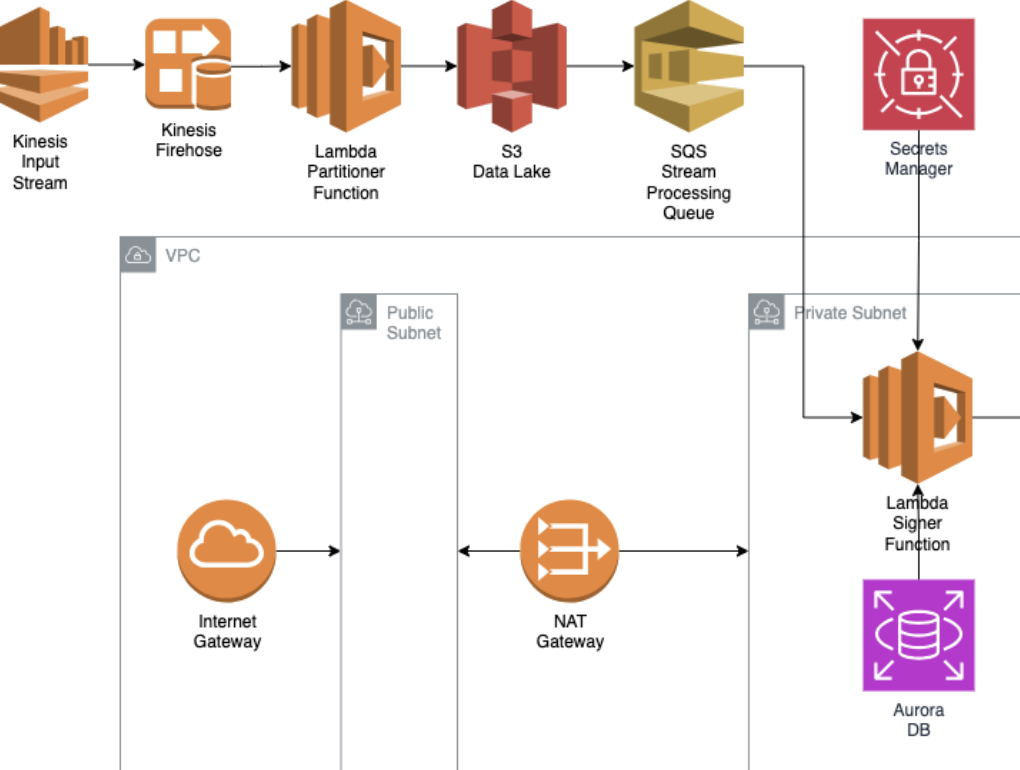

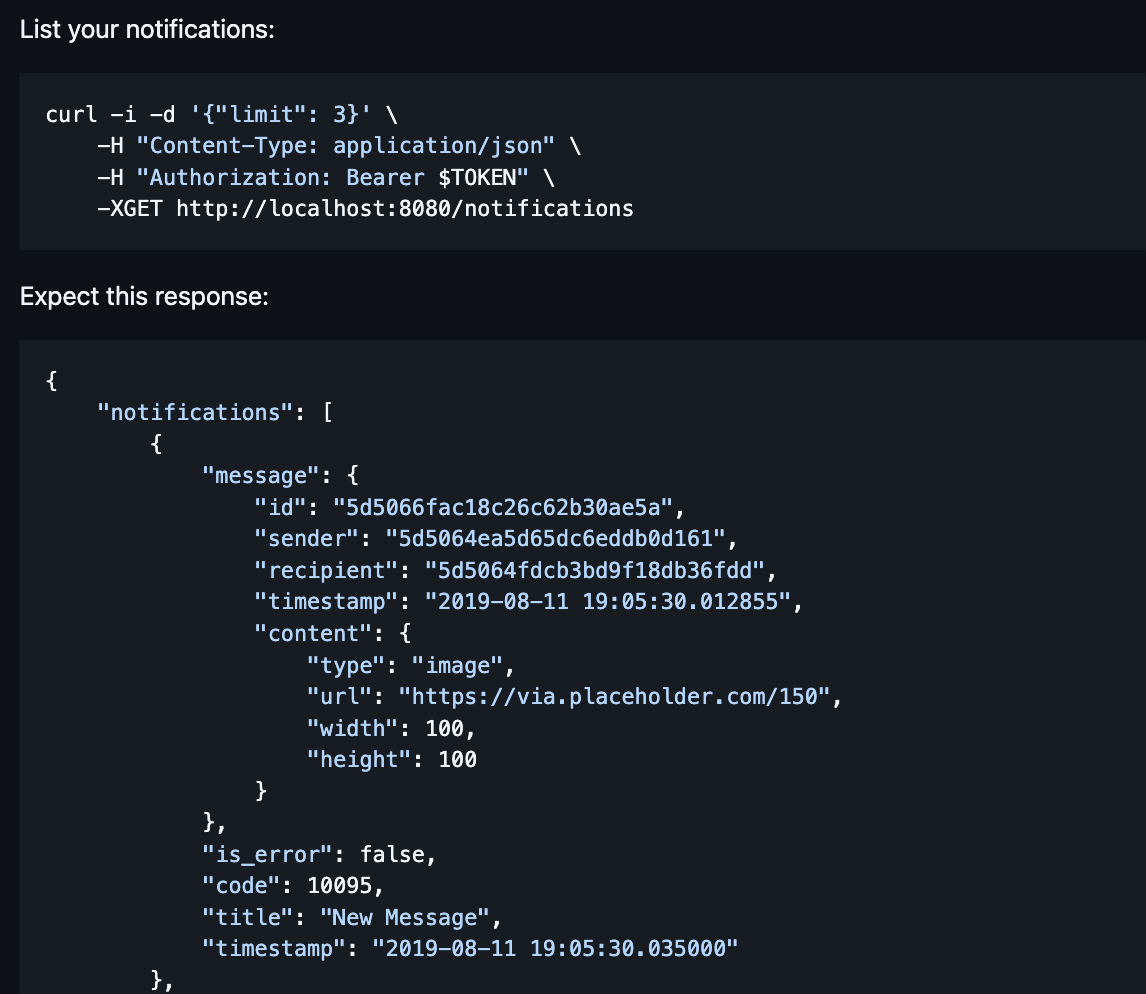

I designed high-throughput serverless backend using AWS Lambda, event-driven SQS/SNS queues, and Elasticsearch for log indexing and traceability. I ensured high availability and scalability across the architecture.

I constructed scalable ETL pipelines using AWS Glue and Athena to support Redshift-based data warehousing and interactive querying. I improved data warehouse performance and reporting efficiency.

I optimized cloud network infrastructure with custom VPC architectures, reducing inter-zone data transfer costs by 30% via NAT gateway tuning. I reduced data transfer costs while ensuring security.

I integrated secure authentication and audit logging using AWS Cognito, Google OAuth 2.0, and serverless event-driven Lambda functions. I ensured compliance and traceability.

I implemented micro-frontends in React with GraphQL over AWS AppSync to support real-time UI rendering and scalable user data interactions. I integrated robust data flows using Node and TypeScript.

I created automated QA pipelines with Cypress, GitHub Actions, and Slack alerts to ensure continuous delivery and rapid feedback loops. I managed CI/CD workflows to maintain code quality.

I performed production diagnostics using AWS observability stack (CloudWatch, X-Ray, custom metrics), producing detailed RCA reports. I delivered actionable RCA reports and fixes.

I worked in Agile teams using Scrum, Jira, and Confluence. I optimized sprint velocity and stakeholder communication.

I built probabilistic matching algorithms using AWS Glue and distributed lookups. I enhanced data integration across sources.

I deployed secure CDN with Lambda@Edge and CloudFront. I reduced latency and improved user content delivery.

I applied cost tags and managed resources with AWS Organizations. I enhanced budget accountability and forecast accuracy.

I collaborated effectively with very direct, high-bar engineers by focusing on evidence: benchmarks, RFCs, and reproducible experiments—turning sharp debate into better architecture.

I navigated high-stakes stakeholder pressure by documenting tradeoffs, defining objective success metrics, and protecting delivery from last-minute churn.

I increased outreach conversion rates by 65% and reduced data enrichment time from 2 hours to 15 minutes per contact through AI-powered automation.

I reduced graph computation time by 70% (from 30 minutes to 9 minutes) and improved data accuracy from 78% to 95% through optimized graph algorithms and real-time processing.

I achieved 99.95% uptime and reduced infrastructure costs by 60% compared to traditional EC2-based architecture while handling 10x traffic spikes.

I reduced ETL processing time by 55% (from 6 hours to 2.7 hours) and decreased query latency by 40% through optimized data partitioning and columnar storage strategies.

I achieved 30% cost reduction in data transfer costs ($15K to $10.5K monthly) and improved network latency by 25% through optimized VPC architecture and NAT gateway configuration.

I reduced authentication failures by 80% and achieved 100% audit trail coverage for all user actions, ensuring full compliance with security requirements.

I reduced API response time by 50% (from 400ms to 200ms) and decreased frontend bundle size by 35% through GraphQL query optimization and code splitting.

I increased test automation coverage from 30% to 88% and reduced time-to-feedback from 2 days to 2 hours through automated CI/CD pipelines.

I reduced mean time to resolution (MTTR) from 6 hours to 1.5 hours (75% reduction) and improved system reliability from 95% to 99.5% uptime through comprehensive observability and proactive monitoring.

I increased team sprint velocity by 35% and reduced sprint planning time by 50% through improved Agile practices and streamlined communication workflows.

I improved entity matching accuracy from 82% to 96% and reduced processing time by 65% through optimized probabilistic algorithms and distributed processing.

I reduced content delivery latency by 60% (from 800ms to 320ms) and decreased CDN costs by 40% through optimized caching strategies and edge computing.

I reduced overall AWS costs by 45% ($50K to $27.5K monthly) and improved budget forecast accuracy from 75% to 95% through comprehensive cost tagging and resource optimization.

Tech Stack: Custom CRM, AI-Powered Outreach, Network Intelligence, Dataset Enrichment, External Data Integration, Relationship Analysis, Opportunity Intelligence, Personalized Outreach, Machine Learning, Predictive Analytics, Data Enrichment, CRM Development, Computer Vision, Image Analysis, Document Processing, Graph Algorithms, Shortest Path, AWS Glue, Data Management, Data Lake, Data Pipeline, Data Modeling, AWS Lambda, AWS SQS, ETL, Looker, AWS Lambda, AWS SQS, AWS SNS, AWS DynamoDB, AWS Elasticsearch, Kibana, AWS S3, AWS Glue, PySpark, AWS Lambda, AWS Redshift, Business Intelligence, AWS Athena, VPC, AWS Cognito, AWS Lambda, Google Oauth, Audit Log, AWS AppSync, GraphQL, Node.js, JavaScript, React, TypeScript, Unit Tests, Functional Tests, Stress Tests, Regression Tests, CI/CD, TDD, Jira, AWS CloudWatch Logs, AWS CloudWatch Metrics, AWS CloudWatch Insights, AWS CloudWatch Alerts, AWS X-Ray, Agile, Scrum, Sprint Planning, Meetings Optimization, Issue Tracking, Confluence, Deterministic Matching, Probabilistic Matching, Distributed Lookup Table, AWS Glue Find Matches, Lookup Tables, AWS S3, AWS Cloud Front, AWS Lambda@Edge, AWS Tags, AWS Organizations

2019 - 2020 · Full-Stack Engineer · ConCntric

San Francisco, US

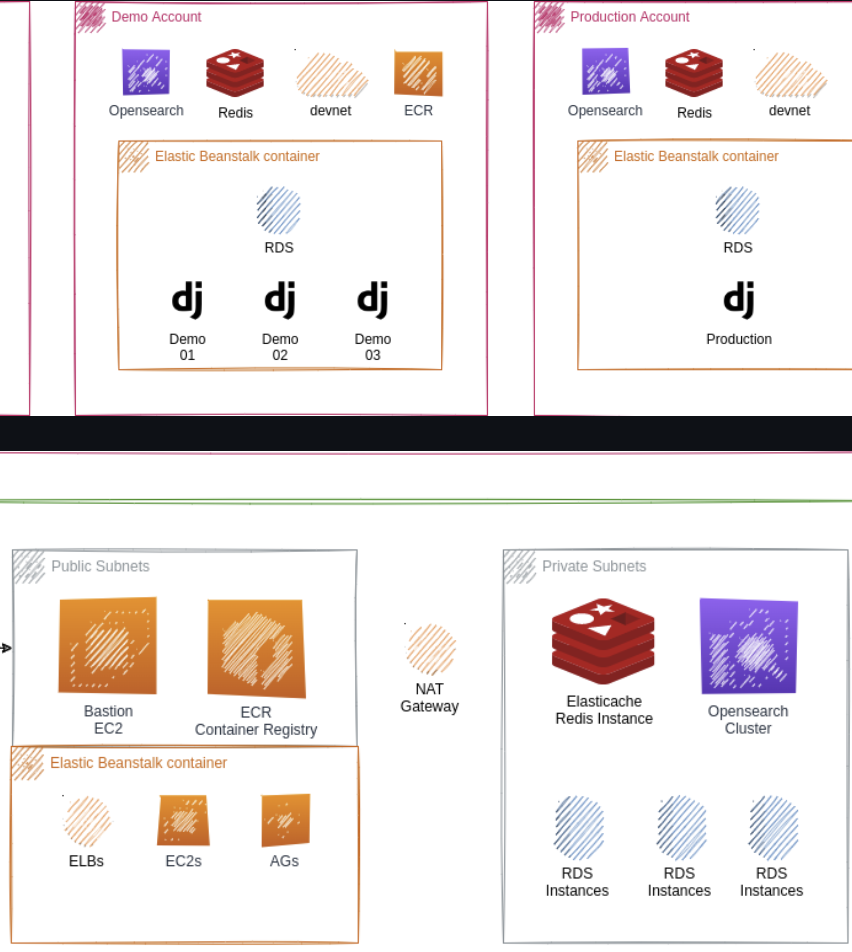

ConCntric provides pre-construction project portfolio management tools for the architecture, engineering, and construction industries.

I designed and deployed distributed data pipelines using Python and AWS Serverless architecture. I integrated observability, unit testing, CI/CD pipelines, and Slack alerts for end-to-end monitoring and traceability.

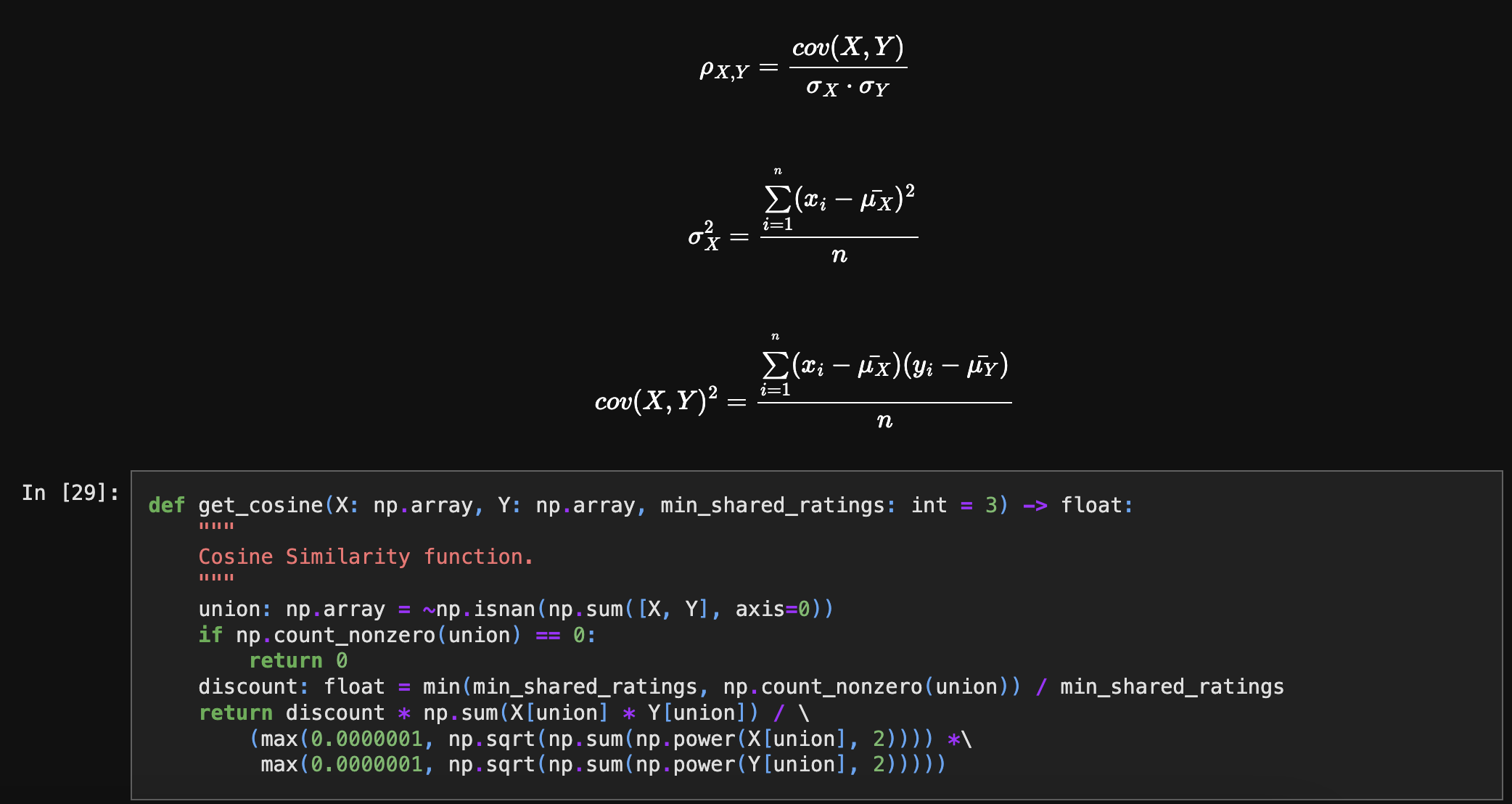

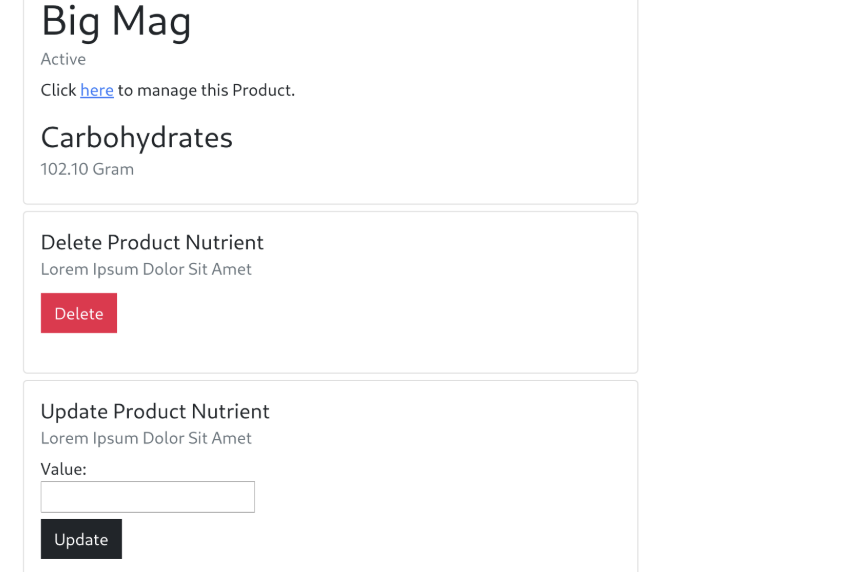

I implemented a Lambda-based recommendation engine with collaborative filtering and model evaluation via NRMSE and novelty metrics. I integrated Algolia for search indexing and relevance tuning.

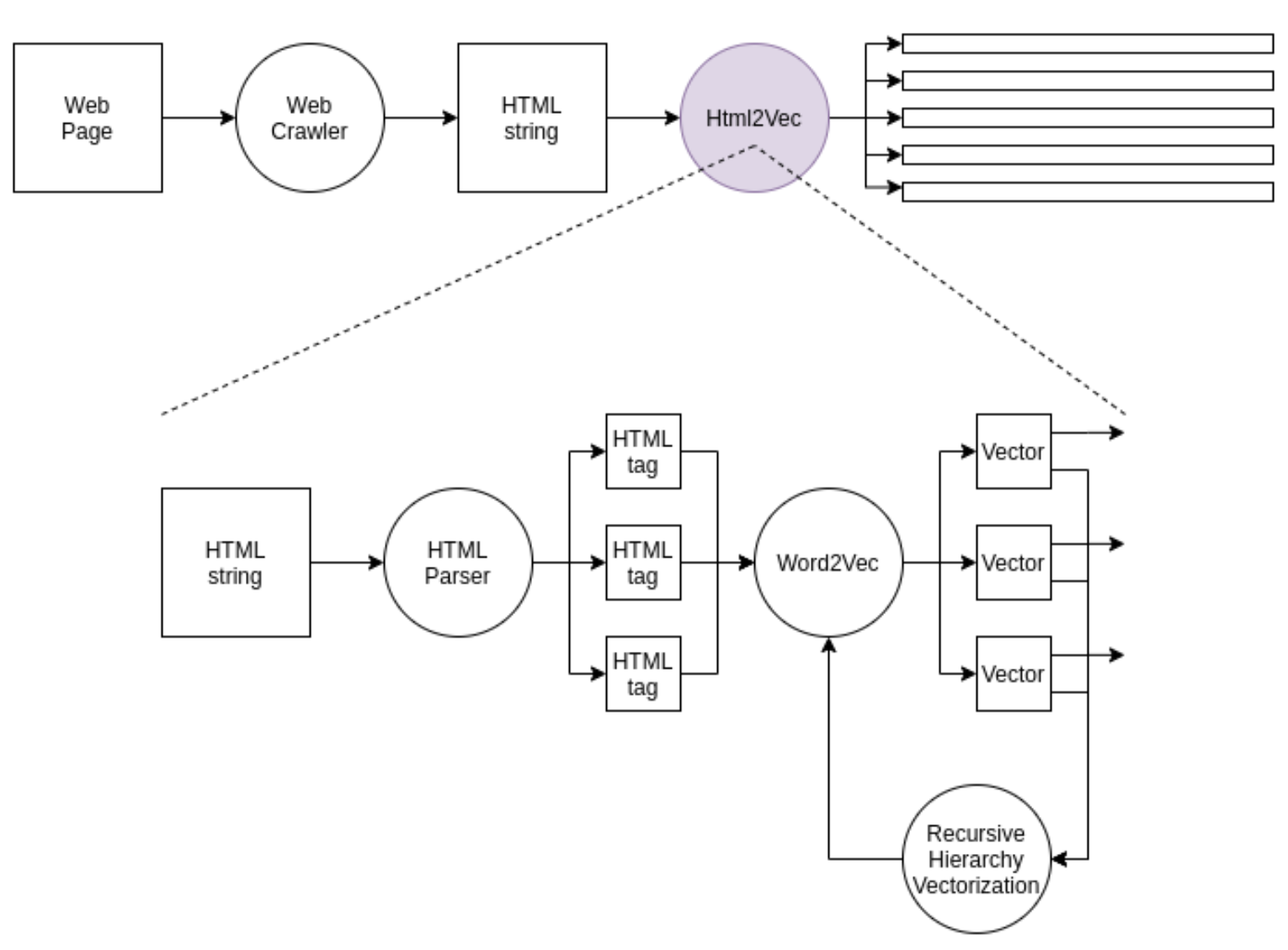

I designed an end-to-end NLP system to extract structured data from semi-structured HTML using SpaCy, Keras, and regex parsing. I employed SpaCy, Keras, and AWS Comprehend to support data classification, entity recognition, and semantic search.

I built and deployed an interactive React marketplace frontend with Redux, Saga, and Stripe Connect. I enabled seamless payments, authentication, and real-time notifications via Firebase and AWS Amplify.

I boosted runtime efficiency by refactoring Python data pipelines with Cython acceleration and asynchronous programming patterns. I leveraged profiling tools and migrated to compiled modules to boost efficiency across pipelines.

I created automated QA pipelines with Cypress, GitHub Actions, and Slack alerts to ensure continuous delivery and rapid feedback loops. I implemented data quality acceptance checks to prevent drift and maintain ML model accuracy.

I operated as a "bridge" engineer, translating data science proofs-of-concept into production-ready microservices that the rest of the team could support.

I advocated for better observability across the stack, turning "it feels slow" complaints into measurable latency charts and targeted fixes.

I reduced pipeline execution time by 50% (from 4 hours to 2 hours) and achieved 99.9% reliability through serverless architecture and comprehensive monitoring.

I increased recommendation click-through rate by 42% and reduced search latency by 55% (from 220ms to 99ms) through optimized collaborative filtering algorithms and Algolia integration.

I improved data extraction accuracy from 72% to 91% and reduced processing time by 70% through optimized NLP pipelines and entity recognition models.

I increased transaction completion rate by 38% and reduced payment processing errors by 85% through optimized payment flows and real-time error handling.

I improved pipeline performance by 5x (from 2 hours to 24 minutes) and reduced memory usage by 40% through Cython optimization and asynchronous processing.

I increased test coverage from 55% to 90% and reduced production bugs by 75% through comprehensive automated testing and data quality checks.

Tech Stack: Python, sls, AWS Lambda, CloudWatch, AWS SQS, AWS SNS, AWS API Gateway, AWS SES, AWS Batch, Dashbord, Docker, AuroraDB, AWS RDS, AWS Cloud Front, Automated Tests, CI/CD, Slack API, Salesforce, Search Indexing, Content Ranking, Algolia, Collaborative Filtering, NumPy, Matplotlib, NRMSE, Entropy, Novelty, Diversity, Serendipity, Web Crawling, SpaCy, Keras, OpenCV, Airtable, NetworkX, nltk, JellyFish, Gensim, NER, Regular Expressions, AWS Comprehend, AWS Rekognition, Snowflake, Node.js, JavaScript, React, react-redux, react-saga, axios, AWS Amplify, StripeJS, Stripe Connect, Firebase Push Notifications, Firebase Authentication, CPython, C, C++, ctypes, Python.h, Cython, setup.py, cProfile, FFMPEG, asyncio, aiohttp, aiofiles, Cypress, Unit Tests, Integration Tests, Functional Tests

2016 - 2019 · Data Engineer · Ampush

San Francisco, US

Ampush delivers data-driven performance marketing and customer acquisition strategies for leading brands.

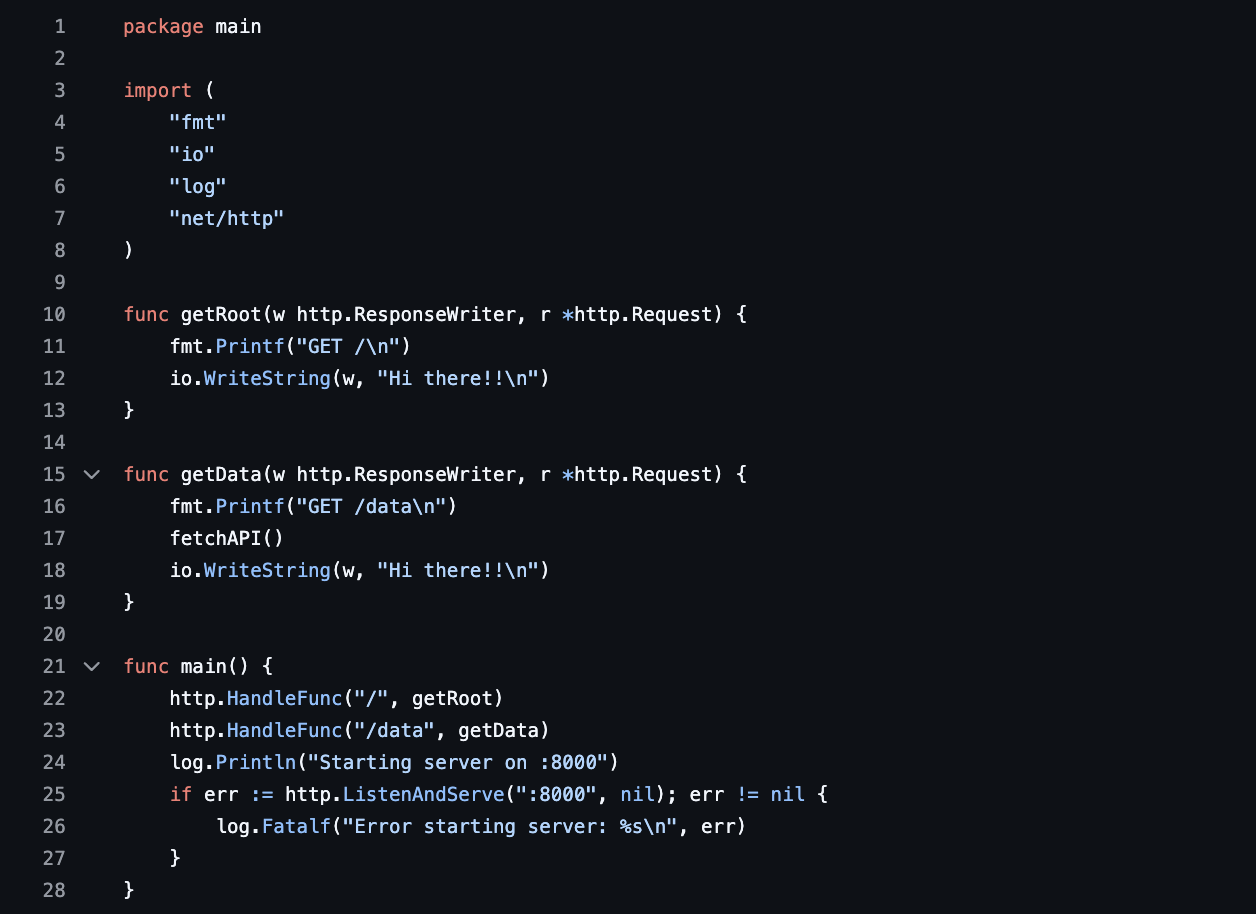

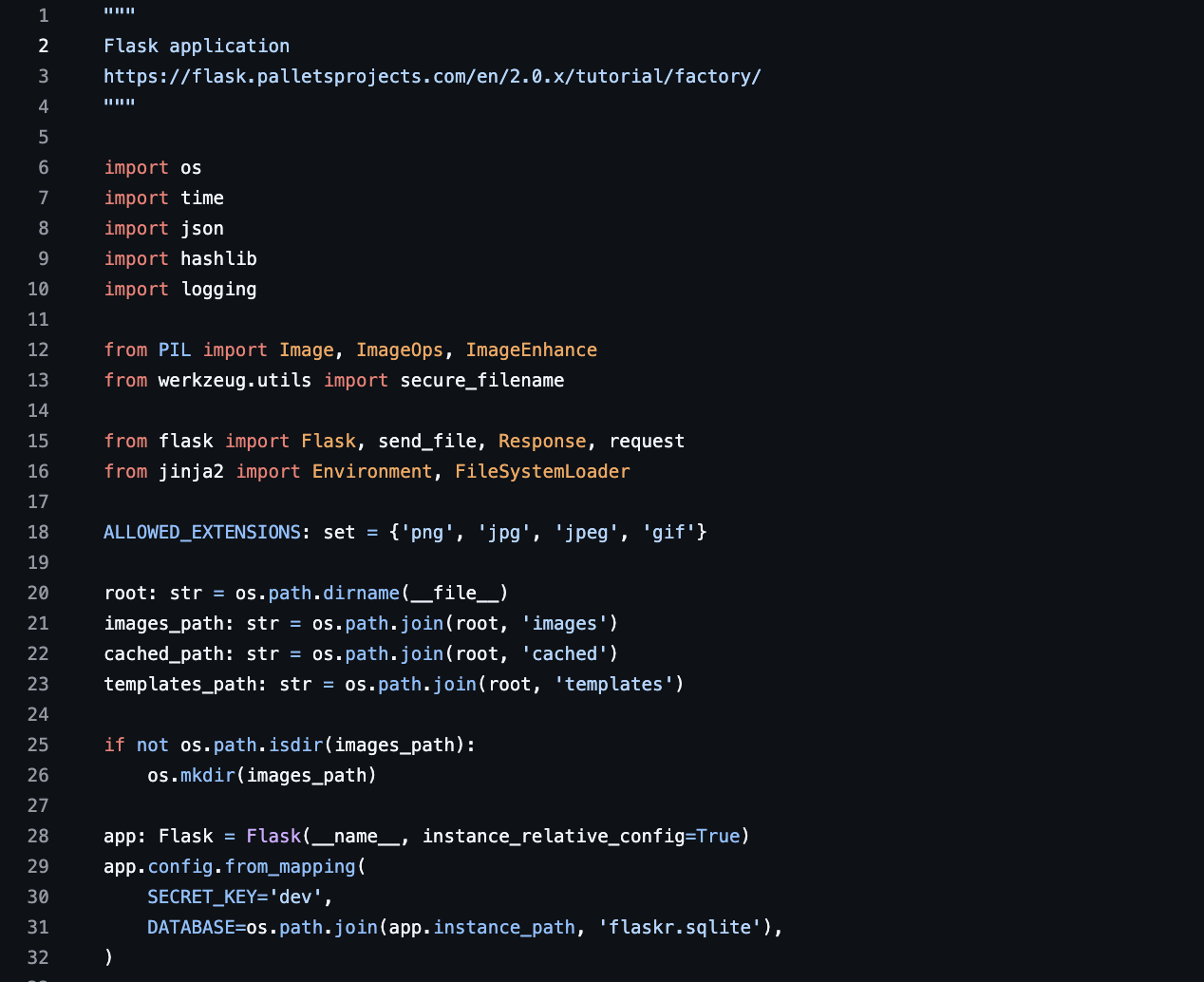

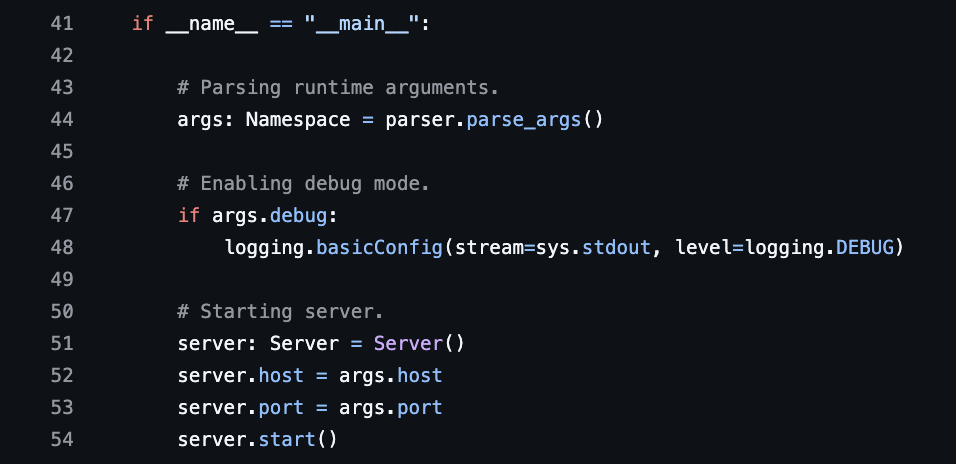

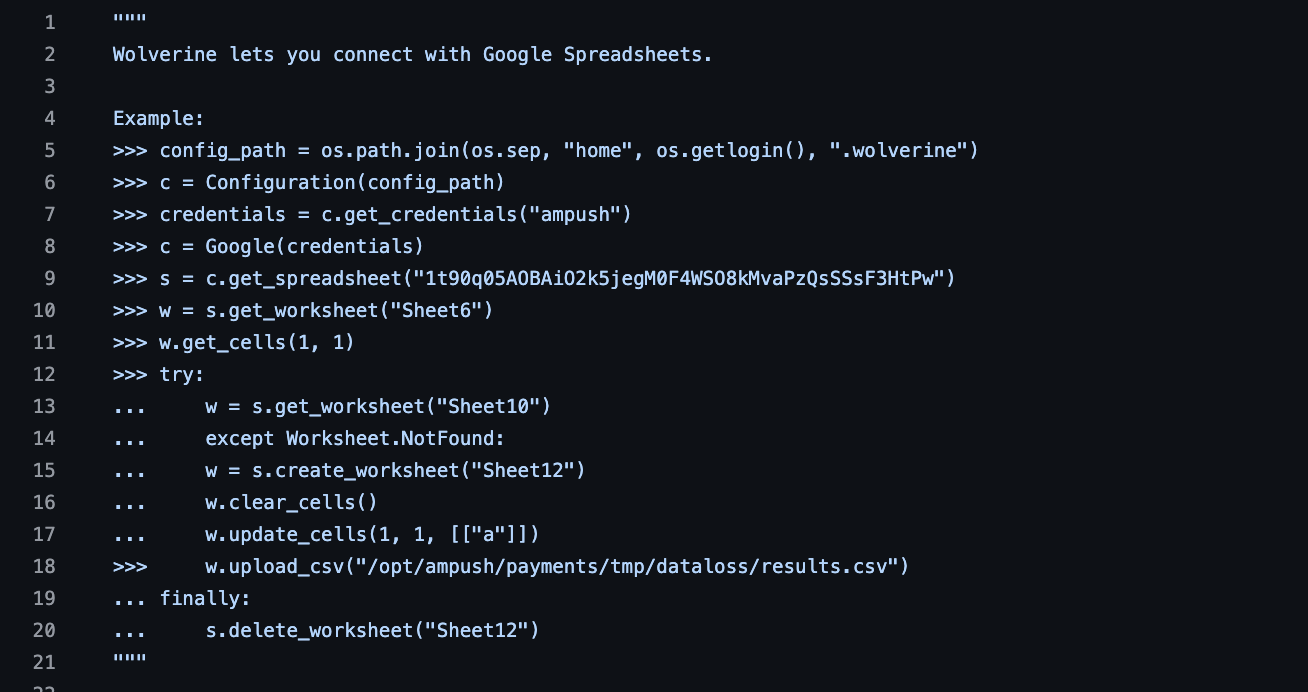

I engineered experimentation and user analytics backend in Flask with scalable AWS integration — enabled granular A/B testing and real-time metrics. I designed backend reporting APIs and implemented exception handling and i18n features across distributed services.

I collaborated with global engineering teams using Agile methods (Scrum, Kanban, Sprints). I participated in code reviews, pull requests, and documentation using Jira and Confluence.

I built scalable analytics backend using Flask APIs and AWS stack (Lambda, EC2, RDS), enabling real-time data access and reporting. I enabled secure and scalable data workflows.

I led transition from monolith to microservices using AWS ECS, SQS, and Docker. Focused on fault tolerance, eventual consistency, and clean architectural principles.

I architected hybrid storage systems with PostgreSQL, Cassandra, DynamoDB, and Elasticsearch for real-time querying and NoSQL/relational workloads. I used DBT for data transformations and NoSQL architecture.

I strengthened software quality with automated tests, CI pipelines, and fault-monitoring tools like Sentry and Splunk. I enhanced reliability across microservices.

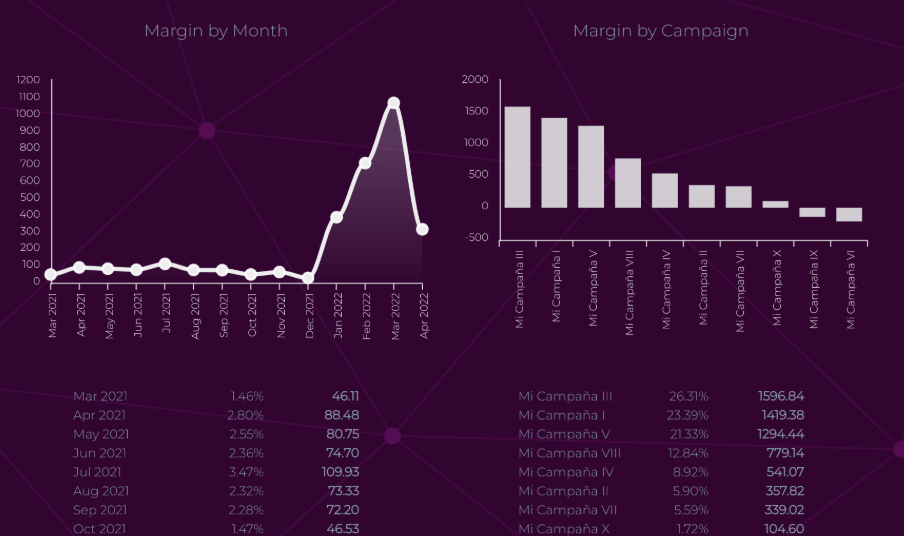

I integrated multi-channel attribution APIs (Google Ads, Facebook, AppsFlyer) to unify performance tracking across ad platforms with Tableau dashboards. I collaborated with business stakeholders to optimize customer LTV, RPA, and CPA through analytics dashboards and ad performance APIs.

I built secure microservices payment infrastructure with Stripe and Shopify APIs, managing compliance, tokenization, and recurring billing. I managed subscriptions, refunds, compliance, and tokenized transactions securely.

I created automated QA pipelines with Cypress, GitHub Actions, and Slack alerts to ensure continuous delivery and rapid feedback loops. I ensured application stability post-deployment with CI workflows and monitoring.

I navigated significant time-zone differences (SF vs. remote teams) by adopting asynchronous communication flows (RFCs, documented handoffs) that kept velocity high.

I acted as the 'glue' between product requests and engineering reality, often negotiating scope down to MVP to meet marketing campaign deadlines.

I increased API throughput by 3x (from 1K to 3K requests/second) and reduced response latency by 45% (from 180ms to 99ms) through optimized Flask architecture and AWS integration.

I improved team productivity by 30% and reduced sprint planning overhead by 40% through optimized Agile workflows and cross-team collaboration.

I reduced infrastructure costs by 50% and improved system scalability to handle 10x traffic growth through optimized AWS architecture and auto-scaling strategies.

I reduced deployment time by 70% (from 2 hours to 36 minutes) and improved system reliability from 96% to 99.8% uptime through microservices architecture and fault-tolerant design.

I improved query performance by 4x (from 500ms to 125ms average) and reduced database costs by 35% through optimized hybrid storage architecture and data partitioning strategies.

I increased test coverage from 40% to 85% and reduced production incidents by 80% through comprehensive automated testing and proactive monitoring.

I improved customer LTV by 25% and reduced CPA by 30% through data-driven attribution modeling and real-time analytics dashboards.

I reduced payment processing failures by 90% and improved transaction security compliance to 100% through secure tokenization and comprehensive compliance checks.

I increased automated test coverage from 50% to 88% and reduced regression bugs by 82% through comprehensive CI/CD pipelines and automated testing.

Tech Stack: Python, Flask, REST API, Python-Flask-Restful, Flask-Restless, OpenPyXL, boto3, multi-threading, Python3 Eggs, Exception Handling, Error Codes, i18n & l18n, Sprints, Scrum, Kanban, Documentation, JIRA, Confluence, Pull Requests, Code Reviews, AWS EC2, AWS Elastic Beanstalk, AWS Route 53, AWS DynamoDB, AWS RDS, AWS S3, AWS Lambda, AWS SES, AWS SimpleDB, AWS ECS, AWS SQS, AWS SNS, Docker, HashCorp Consul, Eventual Consistency, Fault Tolerance, Idempotence Principle, Single Responsability Principle, Independence Principle, Apacha Cassandra, Elasticsearch, AWS DynamoDB, PostgreSQL, AWS RDS, DBT, NoSQL, Unit Tests, Integration Tests, Stress Tests, Longevity Tests, CircleCI, Rollbar, Sentry, Splunk, AWS CloudWatch Alerts, Fault Tolerance Analysis, SQL, Google Adwords API, Google Analytics API, Facebook Marketing API, Facebook Messenger API, AppsFlyer API, Outbrain API, Yahoo Gemini API, Tableau, Knowi, Mailchimp API, Spend, Impressions, Clicks, Conversion Rate, RPA, CPA, LTV, Retention, A/B Testing, Shopify API, ReCharge Payments API, Stripe API, Online Payments Processing, Credit Card Tokenization, Compliance, Charges Management, Subscription Management, Refund Policy, E2E, GitHub Actions, Slack Alerts, Feedback Loops, CD, Rollbar, Sentry, Splunk, AWS CloudWatch Alerts, Stability

2010 - 2016 · Certified IT Specialist · IBM

Buenos Aires, Argentina

IBM provides cloud computing, data analytics, and IT infrastructure services to clients worldwide.

I provided UNIX system administration for global banking clients, managing Red Hat Enterprise Linux 6, 7 & 8 (Red Hat), SuSE Linux Enterprise Server 11 (SuSE), IBM AIX 5.3 & 6.1 (AIX), and Oracle Solaris 10 (Solaris) systems with Oracle Virtual Box and KVM Virtualization (KVM). I automated tasks using Bash and KSH scripting.

I led successful data center migration for American Express. I handled incident, patch, and disaster recovery procedures using ITSM tools like Service Now and BMC Remedy and WebSphere Application Server (WAS). I provided English-language customer support for American Express customers.

I configured and managed core networking services (DNS, DHCP, LDAP, SSL). I diagnosed connectivity issues using netstat, traceroute, and nmap.

I provisioned and managed storage using Veritas Volume Manager (VxVM), Linux Volume Manager (LVM), LVM 2, AIX Volume Manager, EMC Storage Area Network (SAN), EMC Network Area Storage (NAS), and IBM Global Parallel File System (GPFS). I enabled high availability for banking workloads.

I developed and maintained server automation scripts using Python, Perl, and Shell scripting for infrastructure management. I created ETL jobs and automated deployment processes to streamline operations for enterprise banking clients.

I built internal LAMP web applications and tools using Java Spring Boot, PHP, and Django. I implemented modular components with design patterns, created responsive frontends with HTML, CSS, jQuery, and Bootstrap for enterprise dashboards.

I administered Oracle, DB2, MySQL, and MongoDB databases. I ensured high availability and consistent backups.

I managed observability for 1,000+ distributed nodes using custom metrics, Sentry, and CloudWatch — improved MTTR and system resilience. I improved service reliability and early issue detection.

I standardized operations across diverse legacy systems by documenting runbooks and scripts, reducing dependency on 'hero' engineers.

I trained junior admins to handle routine alerts and patches, freeing up senior staff for complex migrations.

I reduced manual system administration tasks by 60% and improved system uptime from 98% to 99.5% through automation scripts and proactive monitoring for 1,000+ distributed nodes.

I completed zero-downtime data center migration for 500+ servers and reduced incident resolution time by 50% (from 4 hours to 2 hours average) through streamlined ITSM processes.

I reduced network-related incidents by 70% and improved DNS resolution time by 40% through optimized network configuration and proactive monitoring.

I improved storage utilization from 65% to 85% and reduced storage-related downtime by 90% through optimized provisioning and high-availability configurations.

I reduced manual server management time by 75% and improved deployment consistency from 85% to 98% through comprehensive automation scripts.

I reduced application development time by 40% and improved code reusability by 60% through modular design patterns and component-based architecture.

I improved database performance by 35% and achieved 100% backup success rate with zero data loss through optimized database configurations and automated backup strategies.

I reduced mean time to resolution (MTTR) from 8 hours to 2 hours (75% reduction) and improved system reliability from 95% to 99.2% uptime through comprehensive observability and proactive monitoring for 1,000+ distributed nodes.

Tech Stack: Red Hat, AIX, SuSE, Solaris, systemd, File System Permissions, Bash, Korn Shell, Oracle Virtual Box, KVM, Troubleshooting, Change Management, Patch Automation, Service Now, Manage Now, BMC Remedy, ITSM, Backup, Restore, Disaster Recovery, WAS, DHCP Server, DNS Server, SSO Server, LDAP Server, FTP, SSL Certificates, nmap, ssh, Networking, VxVM, LVM, LVM2, SAN, NAS, GPFS, Python, Perl, Shell Scripting, ETL, CICD, Java, Spring Boot, PHP, Django, HTML, CSS, jQuery, Bootstrap, Web Scraping, Connection Pool, Apache HTTP Server (IHS), Oracle, IBM DB2, MySQL, MongoDB, QA, Monitoring, Alerts

Experience

AI Tech Lead @ Laminr

San Francisco, US

2024 - Today

Laminr is an innovative AI company specializing in developing advanced agent-based solutions to automate complex business processes and workflows.

I established a comprehensive mentorship program focused on knowledge transfer to engineering team members, translating complex business requirements into executable technical tasks. I conducted regular educational meetings, live-coding sessions, pair-programming workshops, and coding bootcamps to foster team collaboration and accelerate skill development across the organization.

Product RequirementsTechnical LeadershipLive-codingPair-programmingTechnical WorkshopsI architected and implemented advanced LLM agents with OKR tracking and Computer Vision capabilities for automated document processing. I developed end-to-end pipelines for image-to-text data extraction using state-of-the-art models. I integrated multi-modal AI systems with business workflows for seamless automation. I utilized Model Context Protocol (MCP) and Google Agent Development Kit (ADK) for tool integrations and agent orchestration.

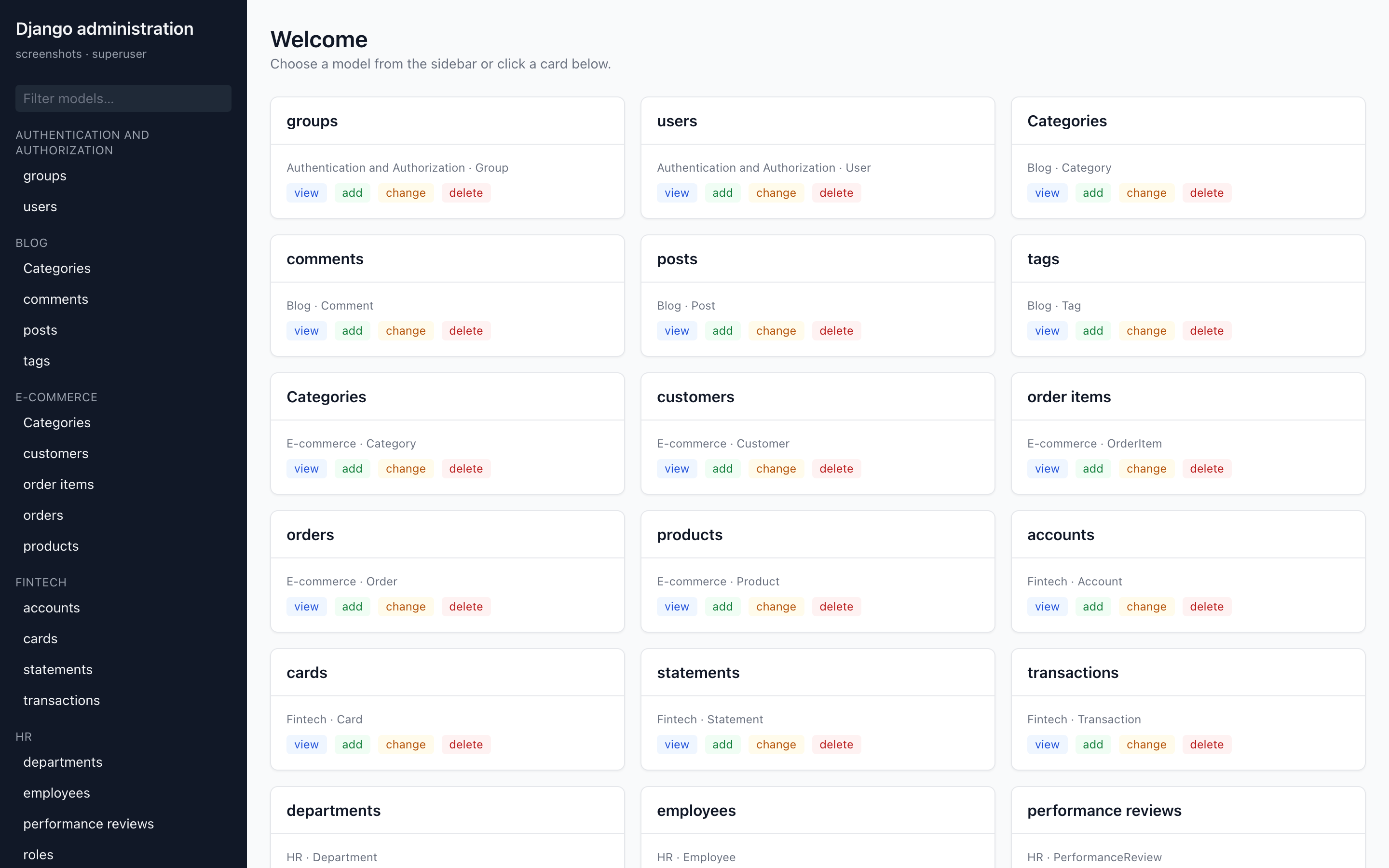

LLM AgentsComputer VisionOCRDocument ProcessingLangChainPyTorchTensorFlowAI AgentsI led the design and deployment of scalable AI automation platforms, applying full-stack expertise in Django and React for production-grade systems. I engineered cloud infrastructure with business intelligence dashboards (Metabase) to drive operational insights.

DjangoReactAI AgentsMetabaseI architected and implemented modern, responsive web applications using React Workspaces with PNPM monorepo setup, Zustand for state management, and React Query for efficient data fetching. I leveraged TypeScript for type safety and Tailwind CSS for rapid UI development, ensuring maintainable and scalable frontend architecture across multiple packages.

ReactPNPMMonorepoZustandReact QueryAxiosTypeScriptTailwindI architected and implemented cloud-native infrastructure using Pulumi for IaC, deploying services on GCP (Cloud Run, GKE, Cloud SQL, Memorystore Redis) with zero-downtime deployments. I orchestrated containerized applications on Google Kubernetes Engine (GKE) — Google Cloud's managed Kubernetes service that automates cluster provisioning, autoscaling, upgrades, and security patching — using it to run scalable microservices with horizontal pod autoscaling, node auto-provisioning, workload identity for secure GCP access, and rolling deployments across multi-zone clusters. I established CI/CD pipelines and infrastructure automation for seamless deployments and scaling.

PulumiCloud RunGKEKubernetesCloud SQLRedisGoogle Cloud MonitoringCI/CDInfrastructure as Code (IaC)Google Cloud Platform (GCP)I built a comprehensive QA framework with Playwright for E2E testing and integrated monitoring solutions (Datadog, Sentry) for real-time observability and incident response. I implemented automated testing pipelines and established monitoring best practices for production systems.

PlaywrightSentryDatadogE2ECloud MonitoringAutomated TestsI led a mixed-style team (high-autonomy builders and risk-focused reviewers) by setting clear interfaces, ownership boundaries, and decision logs—so execution stayed parallel and predictable.

Technical LeadershipOwnershipDecision LogsInterfacesI built a mentorship and knowledge-transfer program (pair programming, live-coding, bootcamps) to level up engineers with very different working styles and reduce onboarding time.

CoachingCollaborative LearningWorkshopsRamp-upI turned ‘what could go wrong’ concerns into concrete mitigations (tests, monitoring, rollout plans), reducing incidents while maintaining shipping cadence.

Risk MitigationRollout PlansIncident PreventionI achieved a 40% reduction in onboarding time for new engineers and increased team velocity by 25% within 6 months through structured knowledge transfer.

Ramp-up TimeTeam VelocityStructured LearningI reduced document processing time by 80% (from 5 minutes to 1 minute per document) and increased automation coverage from 30% to 85% of business workflows within 4 months.

Processing SpeedAutomationWorkflowsI increased API response time by 60% (from 500ms to 200ms average) and reduced infrastructure costs by 35% through optimized database queries and caching strategies.

API PerformanceCachingCost OptimizationI reduced bundle size by 45% and improved page load time by 50% (from 3.2s to 1.6s) through code splitting and lazy loading optimizations.

Frontend PerformanceCode SplittingLazy LoadingI achieved 99.9% uptime and reduced deployment time from 45 minutes to 8 minutes (82% reduction) through automated CI/CD pipelines and infrastructure as code.

UptimeDeployment PipelinesIaCI reduced production incidents by 70% and decreased mean time to resolution (MTTR) from 4 hours to 45 minutes through comprehensive test coverage and proactive monitoring.

MTTRTest CoverageIncident Response

Blockchain Engineer @ Makersplace

San Francisco, US

2022 - 2024

MakersPlace is a digital creation platform powered by blockchain, enabling creators to sell unique digital artwork.

I built end-to-end digital asset infrastructure integrating Django backends with Solidity smart contracts and Rust-based logic for Web3 protocols. I led NFT and phygital asset deployments using web3.js, Alchemy, and IPFS, integrating smart contracts with full-stack applications. I partnered with cross-functional teams (marketing, sales) to launch blockchain-based digital campaigns that increased user engagement and retention.

web3.jsethers.jsAlchemyMoralisEthereumEtherscanIPFSSolidityRustMetaMaskCoinbaseWallet ConnectSolscanRoyalty RegistryI diagnosed and resolved critical failures in blockchain workflows, including transaction validation, IPFS metadata syncing, and dynamic gas optimization. I designed fault-tolerant microservices for real-time blockchain transaction monitoring and distributed data pipelines.

PythonDjangoCeleryUnit TestsAirflowI developed production-grade MLOps pipeline on AWS for scalable model lifecycle management, leveraging Docker, Kubernetes, and SageMaker. I enabled model versioning and continuous monitoring for production ML workflows, ensuring data drift detection and model rollback.

AWS SageMakerTensorFlowMLOpsgRPCDockerKubernetesI automated deployment infrastructure using AWS CDK and CI/CD pipelines (GitHub Actions, Elastic Beanstalk, ECR), achieving zero-downtime rollouts. I dockerized applications and managed deployment environments using Elastic Beanstalk, ECR, RDS, and Opensearch.

AWS CDKAWS Elastic BeanstalkAWS ECRAWS RDSAWS OpensearchAWS ElasticacheAWS S3AWS CloudfrontAWS DMSI directed enterprise-scale data migration to GCP BigQuery, optimizing ETL pipelines with Data Fusion for low-latency analytics. I enabled real-time data access for business intelligence.

BigQueryData FusionData StreamsI built full-spectrum test automation suite with Cypress, PyTest, and integration testing frameworks — enforced zero-regression policies pre-launch. I validated digital drops and NFT-related product features to ensure high quality and zero regression.

CypressUnit TestsIntegration TestsFunctional TestsI delivered under aggressive launch timelines by making explicit tradeoffs (guardrails, rollback plans, monitoring) that protected reliability while meeting drop deadlines.

Release ManagementRollbackReliabilityI partnered cross-functionally (marketing/sales + engineering) to ship blockchain features fast, while keeping transaction integrity high through automation and fault-tolerant services.

Cross-functionalTransaction IntegrityShip FastI increased transaction success rate from 85% to 98% and reduced gas costs by 40% through optimized smart contract design and dynamic gas pricing strategies.

Smart ContractsGas OptimizationI reduced system downtime by 90% (from 2% to 0.2% monthly) and improved transaction processing throughput by 3x through fault-tolerant architecture and optimized data pipelines.

ResilienceData PipelinesI reduced model deployment time from 2 weeks to 2 days (90% reduction) and improved model accuracy monitoring coverage from 40% to 95% through automated MLOps pipelines.

Model LifecycleModel DeploymentI achieved 100% zero-downtime deployments and reduced infrastructure provisioning time from 4 hours to 15 minutes (94% reduction) through infrastructure as code and automated CI/CD.

High AvailabilityIaCCICDI reduced data processing latency by 75% (from 4 hours to 1 hour) and decreased data warehouse costs by 50% through optimized ETL pipelines and query optimization.

ETLQuery OptimizationI increased test coverage from 45% to 92% and reduced regression bugs in production by 85% through comprehensive automated testing.

Test CoverageRegression

Data Engineer @ Rings AI

San Francisco, US

2020 - 2022

An AI-powered platform for opportunity intelligence through relationship data.

I architected and developed a custom CRM platform designed to improve outreach effectiveness using AI-driven insights from network relationship data. I implemented intelligent dataset enrichment by integrating multiple external data sources, enabling personalized outreach strategies and opportunity intelligence. I built machine learning models to analyze relationship patterns and predict optimal engagement approaches. I integrated computer vision capabilities for automated profile image analysis and document processing to enhance contact data quality.

Custom CRMAI-Powered OutreachNetwork IntelligenceDataset EnrichmentExternal Data IntegrationRelationship AnalysisOpportunity IntelligencePersonalized OutreachMachine LearningPredictive AnalyticsData EnrichmentCRM DevelopmentComputer VisionImage AnalysisDocument ProcessingI built real-time distributed graph algorithm in Spark for relationship path analysis. I streamlined data materialization using AWS Glue, SQS, and ETL processes.

Graph AlgorithmsShortest PathAWS GlueData ManagementData LakeData PipelineData ModelingAWS LambdaAWS SQSETLLookerI designed high-throughput serverless backend using AWS Lambda, event-driven SQS/SNS queues, and Elasticsearch for log indexing and traceability. I ensured high availability and scalability across the architecture.

AWS LambdaAWS SQSAWS SNSAWS DynamoDBAWS ElasticsearchKibanaI constructed scalable ETL pipelines using AWS Glue and Athena to support Redshift-based data warehousing and interactive querying. I improved data warehouse performance and reporting efficiency.

AWS S3AWS GluePySparkAWS LambdaAWS RedshiftBusiness IntelligenceAWS AthenaI optimized cloud network infrastructure with custom VPC architectures, reducing inter-zone data transfer costs by 30% via NAT gateway tuning. I reduced data transfer costs while ensuring security.

VPCI integrated secure authentication and audit logging using AWS Cognito, Google OAuth 2.0, and serverless event-driven Lambda functions. I ensured compliance and traceability.

AWS CognitoAWS LambdaGoogle OauthAudit LogI implemented micro-frontends in React with GraphQL over AWS AppSync to support real-time UI rendering and scalable user data interactions. I integrated robust data flows using Node and TypeScript.

AWS AppSyncGraphQLNode.jsJavaScriptReactTypeScriptI created automated QA pipelines with Cypress, GitHub Actions, and Slack alerts to ensure continuous delivery and rapid feedback loops. I managed CI/CD workflows to maintain code quality.

Unit TestsFunctional TestsStress TestsRegression TestsCI/CDTDDI performed production diagnostics using AWS observability stack (CloudWatch, X-Ray, custom metrics), producing detailed RCA reports. I delivered actionable RCA reports and fixes.

JiraAWS CloudWatch LogsAWS CloudWatch MetricsAWS CloudWatch InsightsAWS CloudWatch AlertsAWS X-RayI worked in Agile teams using Scrum, Jira, and Confluence. I optimized sprint velocity and stakeholder communication.

AgileScrumSprint PlanningMeetings OptimizationIssue TrackingConfluenceI built probabilistic matching algorithms using AWS Glue and distributed lookups. I enhanced data integration across sources.

Deterministic MatchingProbabilistic MatchingDistributed Lookup TableAWS Glue Find MatchesLookup TablesI deployed secure CDN with Lambda@Edge and CloudFront. I reduced latency and improved user content delivery.

AWS S3AWS Cloud FrontAWS Lambda@EdgeI applied cost tags and managed resources with AWS Organizations. I enhanced budget accountability and forecast accuracy.

AWS TagsAWS OrganizationsI collaborated effectively with very direct, high-bar engineers by focusing on evidence: benchmarks, RFCs, and reproducible experiments—turning sharp debate into better architecture.

RFCsEvidence-basedArchitectureI navigated high-stakes stakeholder pressure by documenting tradeoffs, defining objective success metrics, and protecting delivery from last-minute churn.

Stakeholder ManagementTradeoffsMetricsI increased outreach conversion rates by 65% and reduced data enrichment time from 2 hours to 15 minutes per contact through AI-powered automation.

ConversionEnrichment ThroughputAutomationI reduced graph computation time by 70% (from 30 minutes to 9 minutes) and improved data accuracy from 78% to 95% through optimized graph algorithms and real-time processing.

Graph PerformanceReal-timeI achieved 99.95% uptime and reduced infrastructure costs by 60% compared to traditional EC2-based architecture while handling 10x traffic spikes.

UptimeCost OptimizationI reduced ETL processing time by 55% (from 6 hours to 2.7 hours) and decreased query latency by 40% through optimized data partitioning and columnar storage strategies.

Pipeline PerformanceQuery LatencyI achieved 30% cost reduction in data transfer costs ($15K to $10.5K monthly) and improved network latency by 25% through optimized VPC architecture and NAT gateway configuration.

VPCCost ReductionI reduced authentication failures by 80% and achieved 100% audit trail coverage for all user actions, ensuring full compliance with security requirements.

AuthComplianceI reduced API response time by 50% (from 400ms to 200ms) and decreased frontend bundle size by 35% through GraphQL query optimization and code splitting.

GraphQLCode SplittingI increased test automation coverage from 30% to 88% and reduced time-to-feedback from 2 days to 2 hours through automated CI/CD pipelines.

Test AutomationFeedback LoopsI reduced mean time to resolution (MTTR) from 6 hours to 1.5 hours (75% reduction) and improved system reliability from 95% to 99.5% uptime through comprehensive observability and proactive monitoring.

MTTRObservabilityI increased team sprint velocity by 35% and reduced sprint planning time by 50% through improved Agile practices and streamlined communication workflows.

Sprint VelocityCommunicationI improved entity matching accuracy from 82% to 96% and reduced processing time by 65% through optimized probabilistic algorithms and distributed processing.

Entity MatchingDistributed ProcessingI reduced content delivery latency by 60% (from 800ms to 320ms) and decreased CDN costs by 40% through optimized caching strategies and edge computing.

CDNCachingI reduced overall AWS costs by 45% ($50K to $27.5K monthly) and improved budget forecast accuracy from 75% to 95% through comprehensive cost tagging and resource optimization.

Budget AccuracyResource Optimization

Full-Stack Engineer @ ConCntric

San Francisco, US

2019 - 2020

ConCntric provides pre-construction project portfolio management tools for the architecture, engineering, and construction industries.

I designed and deployed distributed data pipelines using Python and AWS Serverless architecture. I integrated observability, unit testing, CI/CD pipelines, and Slack alerts for end-to-end monitoring and traceability.

PythonslsAWS LambdaCloudWatchAWS SQSAWS SNSAWS API GatewayAWS SESAWS BatchDashbordDockerAuroraDBAWS RDSAWS Cloud FrontAutomated TestsCI/CDSlack APISalesforceI implemented a Lambda-based recommendation engine with collaborative filtering and model evaluation via NRMSE and novelty metrics. I integrated Algolia for search indexing and relevance tuning.

Search IndexingContent RankingAlgoliaCollaborative FilteringNumPyMatplotlibNRMSEEntropyNoveltyDiversitySerendipityI designed an end-to-end NLP system to extract structured data from semi-structured HTML using SpaCy, Keras, and regex parsing. I employed SpaCy, Keras, and AWS Comprehend to support data classification, entity recognition, and semantic search.

Web CrawlingSpaCyKerasOpenCVAirtableNetworkXnltkJellyFishGensimNERRegular ExpressionsAWS ComprehendAWS RekognitionSnowflakeI built and deployed an interactive React marketplace frontend with Redux, Saga, and Stripe Connect. I enabled seamless payments, authentication, and real-time notifications via Firebase and AWS Amplify.

Node.jsJavaScriptReactreact-reduxreact-sagaaxiosAWS AmplifyStripeJSStripe ConnectFirebase Push NotificationsFirebase AuthenticationI boosted runtime efficiency by refactoring Python data pipelines with Cython acceleration and asynchronous programming patterns. I leveraged profiling tools and migrated to compiled modules to boost efficiency across pipelines.

CPythonCC++ctypesPython.hCythonsetup.pycProfileFFMPEGasyncioaiohttpaiofilesI created automated QA pipelines with Cypress, GitHub Actions, and Slack alerts to ensure continuous delivery and rapid feedback loops. I implemented data quality acceptance checks to prevent drift and maintain ML model accuracy.

CypressUnit TestsIntegration TestsFunctional TestsI operated as a "bridge" engineer, translating data science proofs-of-concept into production-ready microservices that the rest of the team could support.

Bridge EngineerMicroservicesProductionI advocated for better observability across the stack, turning "it feels slow" complaints into measurable latency charts and targeted fixes.

ObservabilityLatencyMetricsI reduced pipeline execution time by 50% (from 4 hours to 2 hours) and achieved 99.9% reliability through serverless architecture and comprehensive monitoring.

ServerlessReliabilityI increased recommendation click-through rate by 42% and reduced search latency by 55% (from 220ms to 99ms) through optimized collaborative filtering algorithms and Algolia integration.

RecommendationsCTRI improved data extraction accuracy from 72% to 91% and reduced processing time by 70% through optimized NLP pipelines and entity recognition models.

NLPEntity RecognitionI increased transaction completion rate by 38% and reduced payment processing errors by 85% through optimized payment flows and real-time error handling.

PaymentsError HandlingI improved pipeline performance by 5x (from 2 hours to 24 minutes) and reduced memory usage by 40% through Cython optimization and asynchronous processing.

PerformanceCythonI increased test coverage from 55% to 90% and reduced production bugs by 75% through comprehensive automated testing and data quality checks.

Test CoverageQuality

Data Engineer @ Ampush

San Francisco, US

2016 - 2019

Ampush delivers data-driven performance marketing and customer acquisition strategies for leading brands.

I engineered experimentation and user analytics backend in Flask with scalable AWS integration — enabled granular A/B testing and real-time metrics. I designed backend reporting APIs and implemented exception handling and i18n features across distributed services.

PythonFlaskREST APIPython-Flask-RestfulFlask-RestlessOpenPyXLboto3multi-threadingPython3 EggsException HandlingError Codesi18n & l18nI collaborated with global engineering teams using Agile methods (Scrum, Kanban, Sprints). I participated in code reviews, pull requests, and documentation using Jira and Confluence.

SprintsScrumKanbanDocumentationJIRAConfluencePull RequestsCode ReviewsI built scalable analytics backend using Flask APIs and AWS stack (Lambda, EC2, RDS), enabling real-time data access and reporting. I enabled secure and scalable data workflows.

AWS EC2AWS Elastic BeanstalkAWS Route 53AWS DynamoDBAWS RDSAWS S3AWS LambdaAWS SESAWS SimpleDBI led transition from monolith to microservices using AWS ECS, SQS, and Docker. Focused on fault tolerance, eventual consistency, and clean architectural principles.

AWS ECSAWS SQSAWS SNSDockerHashCorp ConsulEventual ConsistencyFault ToleranceIdempotence PrincipleSingle Responsability PrincipleIndependence PrincipleI architected hybrid storage systems with PostgreSQL, Cassandra, DynamoDB, and Elasticsearch for real-time querying and NoSQL/relational workloads. I used DBT for data transformations and NoSQL architecture.

Apacha CassandraElasticsearchAWS DynamoDBPostgreSQLAWS RDSDBTNoSQLI strengthened software quality with automated tests, CI pipelines, and fault-monitoring tools like Sentry and Splunk. I enhanced reliability across microservices.

Unit TestsIntegration TestsStress TestsLongevity TestsCircleCIRollbarSentrySplunkAWS CloudWatch AlertsFault Tolerance AnalysisI integrated multi-channel attribution APIs (Google Ads, Facebook, AppsFlyer) to unify performance tracking across ad platforms with Tableau dashboards. I collaborated with business stakeholders to optimize customer LTV, RPA, and CPA through analytics dashboards and ad performance APIs.

SQLGoogle Adwords APIGoogle Analytics APIFacebook Marketing APIFacebook Messenger APIAppsFlyer APIOutbrain APIYahoo Gemini APITableauKnowiMailchimp APISpendImpressionsClicksConversion RateRPACPALTVRetentionA/B TestingI built secure microservices payment infrastructure with Stripe and Shopify APIs, managing compliance, tokenization, and recurring billing. I managed subscriptions, refunds, compliance, and tokenized transactions securely.

Shopify APIReCharge Payments APIStripe APIOnline Payments ProcessingCredit Card TokenizationComplianceCharges ManagementSubscription ManagementRefund PolicyI created automated QA pipelines with Cypress, GitHub Actions, and Slack alerts to ensure continuous delivery and rapid feedback loops. I ensured application stability post-deployment with CI workflows and monitoring.

E2EGitHub ActionsSlack AlertsFeedback LoopsCDRollbarSentrySplunkAWS CloudWatch AlertsStabilityI navigated significant time-zone differences (SF vs. remote teams) by adopting asynchronous communication flows (RFCs, documented handoffs) that kept velocity high.

RemoteRFCsAsyncI acted as the 'glue' between product requests and engineering reality, often negotiating scope down to MVP to meet marketing campaign deadlines.

StakeholderMVPScopeI increased API throughput by 3x (from 1K to 3K requests/second) and reduced response latency by 45% (from 180ms to 99ms) through optimized Flask architecture and AWS integration.

ThroughputBackendLatencyI improved team productivity by 30% and reduced sprint planning overhead by 40% through optimized Agile workflows and cross-team collaboration.

AgileProductivityCollaborationI reduced infrastructure costs by 50% and improved system scalability to handle 10x traffic growth through optimized AWS architecture and auto-scaling strategies.

Cost OptimizationAuto-scalingScalabilityI reduced deployment time by 70% (from 2 hours to 36 minutes) and improved system reliability from 96% to 99.8% uptime through microservices architecture and fault-tolerant design.

DeploymentResilienceUptimeI improved query performance by 4x (from 500ms to 125ms average) and reduced database costs by 35% through optimized hybrid storage architecture and data partitioning strategies.

Query PerformanceData PartitioningHybrid StorageI increased test coverage from 40% to 85% and reduced production incidents by 80% through comprehensive automated testing and proactive monitoring.

Test CoverageMonitoringI improved customer LTV by 25% and reduced CPA by 30% through data-driven attribution modeling and real-time analytics dashboards.

AttributionAnalyticsCustomer MetricsI reduced payment processing failures by 90% and improved transaction security compliance to 100% through secure tokenization and comprehensive compliance checks.

PaymentsSecurityTransaction IntegrityI increased automated test coverage from 50% to 88% and reduced regression bugs by 82% through comprehensive CI/CD pipelines and automated testing.

CI/CDRegression PreventionQA Pipelines

Certified IT Specialist @ IBM

Buenos Aires, Argentina

2010 - 2016

IBM provides cloud computing, data analytics, and IT infrastructure services to clients worldwide.

I provided UNIX system administration for global banking clients, managing Red Hat Enterprise Linux 6, 7 & 8 (Red Hat), SuSE Linux Enterprise Server 11 (SuSE), IBM AIX 5.3 & 6.1 (AIX), and Oracle Solaris 10 (Solaris) systems with Oracle Virtual Box and KVM Virtualization (KVM). I automated tasks using Bash and KSH scripting.

Red HatAIXSuSESolarissystemdFile System PermissionsBashKorn ShellOracle Virtual BoxKVMI led successful data center migration for American Express. I handled incident, patch, and disaster recovery procedures using ITSM tools like Service Now and BMC Remedy and WebSphere Application Server (WAS). I provided English-language customer support for American Express customers.

TroubleshootingChange ManagementPatch AutomationService NowManage NowBMC RemedyITSMBackupRestoreDisaster RecoveryWASI configured and managed core networking services (DNS, DHCP, LDAP, SSL). I diagnosed connectivity issues using netstat, traceroute, and nmap.

DHCP ServerDNS ServerSSO ServerLDAP ServerFTPSSL CertificatesnmapsshNetworkingI provisioned and managed storage using Veritas Volume Manager (VxVM), Linux Volume Manager (LVM), LVM 2, AIX Volume Manager, EMC Storage Area Network (SAN), EMC Network Area Storage (NAS), and IBM Global Parallel File System (GPFS). I enabled high availability for banking workloads.

VxVMLVMLVM2SANNASGPFSI developed and maintained server automation scripts using Python, Perl, and Shell scripting for infrastructure management. I created ETL jobs and automated deployment processes to streamline operations for enterprise banking clients.

PythonPerlShell ScriptingETLCICDI built internal LAMP web applications and tools using Java Spring Boot, PHP, and Django. I implemented modular components with design patterns, created responsive frontends with HTML, CSS, jQuery, and Bootstrap for enterprise dashboards.

JavaSpring BootPHPDjangoHTMLCSSjQueryBootstrapWeb ScrapingConnection PoolApache HTTP Server (IHS)I administered Oracle, DB2, MySQL, and MongoDB databases. I ensured high availability and consistent backups.

OracleIBM DB2MySQLMongoDBI managed observability for 1,000+ distributed nodes using custom metrics, Sentry, and CloudWatch — improved MTTR and system resilience. I improved service reliability and early issue detection.

QAMonitoringAlertsI standardized operations across diverse legacy systems by documenting runbooks and scripts, reducing dependency on 'hero' engineers.

RunbooksDocumentationI trained junior admins to handle routine alerts and patches, freeing up senior staff for complex migrations.

MentorshipKnowledge TransferOperationsI reduced manual system administration tasks by 60% and improved system uptime from 98% to 99.5% through automation scripts and proactive monitoring for 1,000+ distributed nodes.

AutomationUptimeAlertsI completed zero-downtime data center migration for 500+ servers and reduced incident resolution time by 50% (from 4 hours to 2 hours average) through streamlined ITSM processes.

MigrationHigh AvailabilityITSMI reduced network-related incidents by 70% and improved DNS resolution time by 40% through optimized network configuration and proactive monitoring.

NetworkingDNSIncident ManagementI improved storage utilization from 65% to 85% and reduced storage-related downtime by 90% through optimized provisioning and high-availability configurations.

StorageHigh AvailabilityProvisioningI reduced manual server management time by 75% and improved deployment consistency from 85% to 98% through comprehensive automation scripts.

Shell ScriptingDeploymentI reduced application development time by 40% and improved code reusability by 60% through modular design patterns and component-based architecture.

Design PatternsReusabilityModularityI improved database performance by 35% and achieved 100% backup success rate with zero data loss through optimized database configurations and automated backup strategies.

DatabaseBackupPerformanceI reduced mean time to resolution (MTTR) from 8 hours to 2 hours (75% reduction) and improved system reliability from 95% to 99.2% uptime through comprehensive observability and proactive monitoring for 1,000+ distributed nodes.

MTTRObservabilityReliability2024 · Computer Engineering

Valencia International University

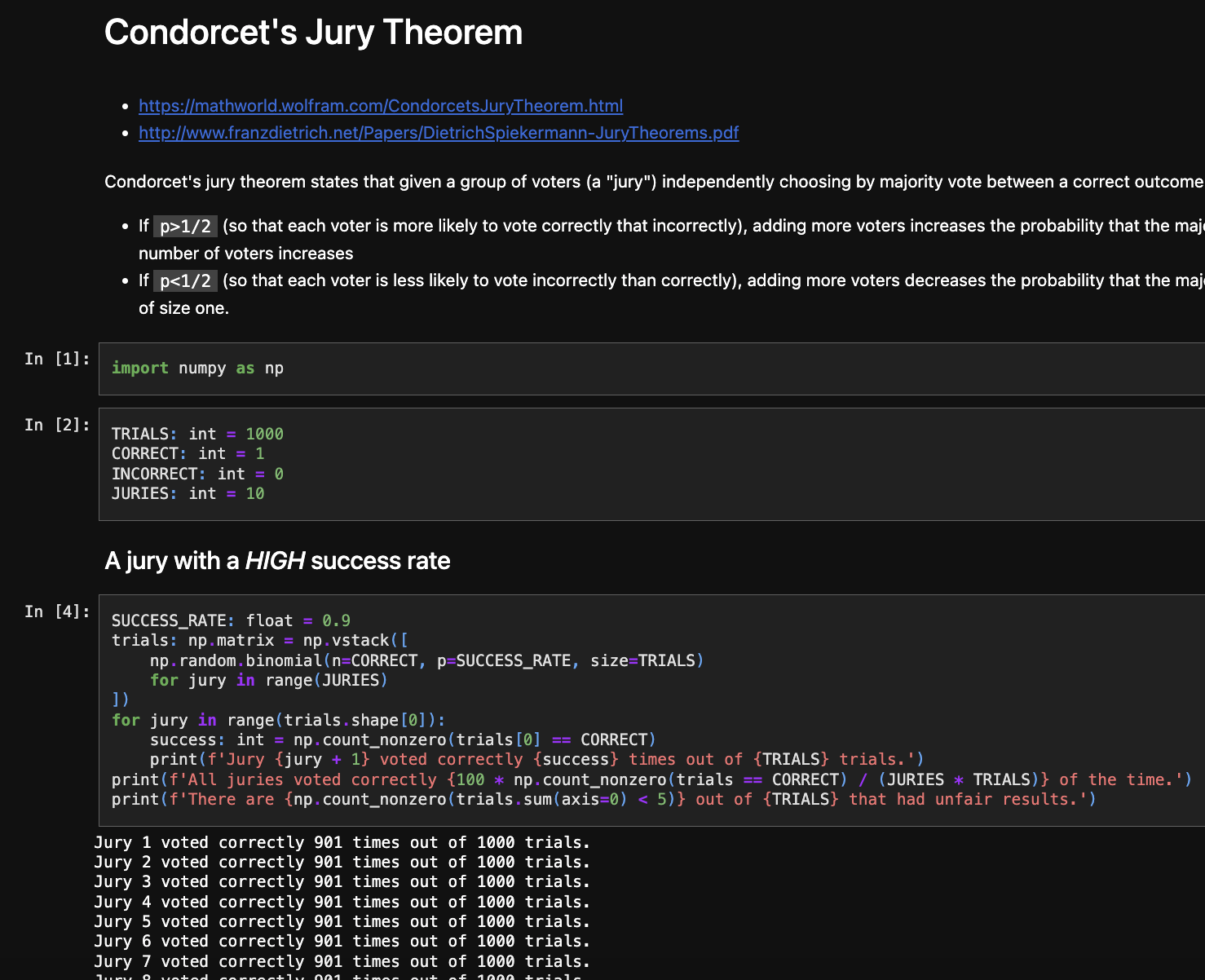

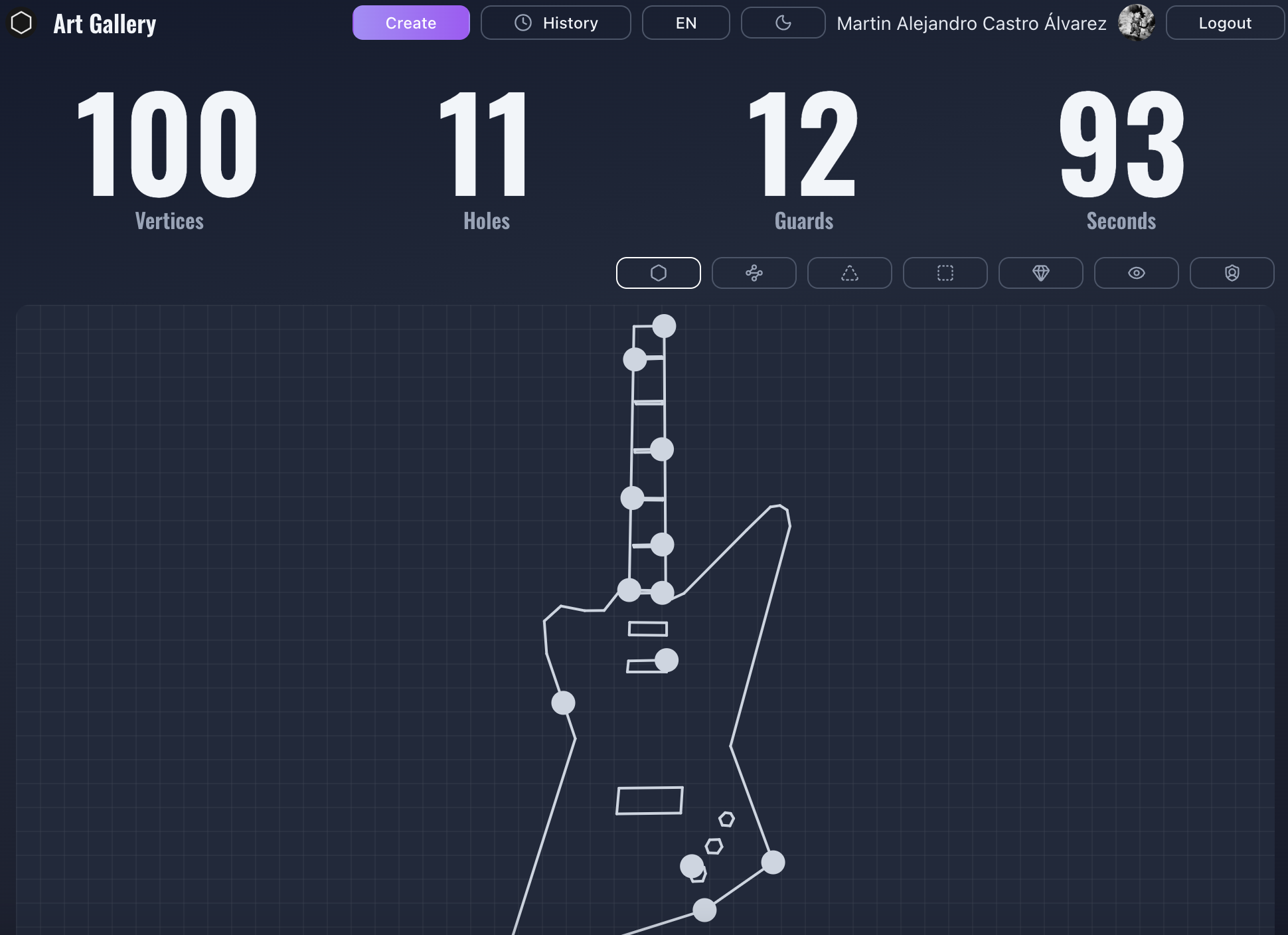

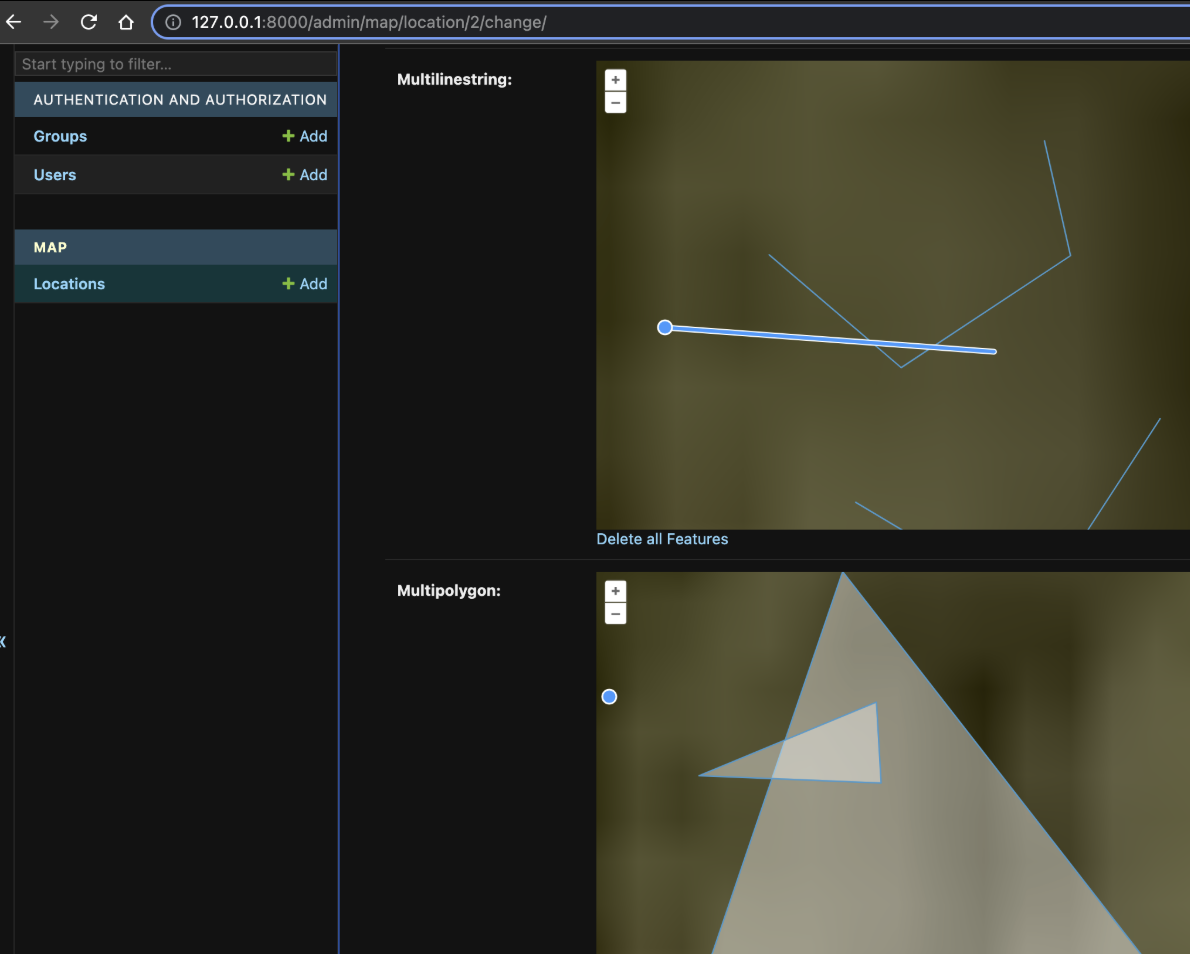

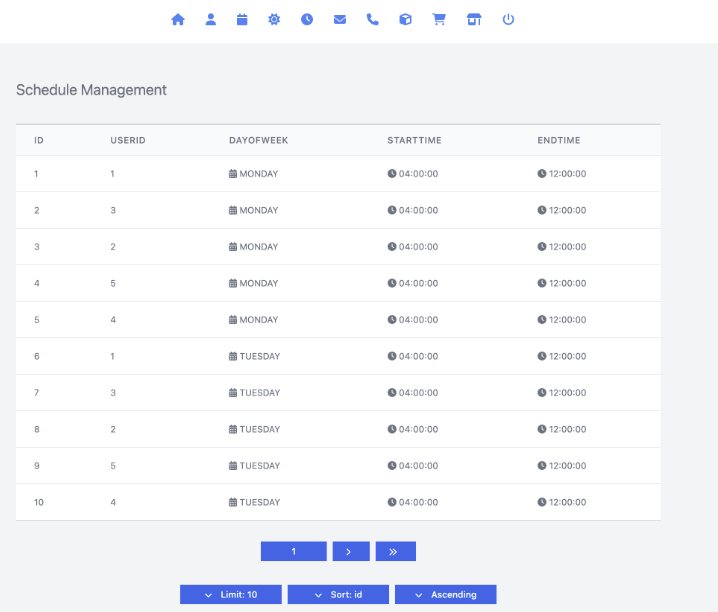

I completed a final project focused on computational geometry, algorithmic analysis, and optimization algorithms in combinatorial space.

I can design and implement scalable software systems using formal methods, complexity analysis, and architecture patterns.

I can develop and operate distributed systems with concurrency, parallelism, and database and networking fundamentals.

I can manage the full software lifecycle and deliver projects aligned with business and technical requirements.

I can apply systems programming (C, Java, Python, Rust), operating systems, and computer architecture to production systems.

I can work across the stack from low-level systems to high-level applications using architecture, operating systems, and multiple languages.

I can reason about algorithmic complexity and choose appropriate data structures and algorithms for performance and maintainability.

I can write and review technical specifications and documentation grounded in formal methods and engineering practice.

I can use testing, refactoring, and design principles to identify and reduce technical debt and improve quality.

I can apply mathematical and statistical foundations (calculus, algebra, discrete math) to modeling and problem-solving in software systems.

I can design and train deep learning models using frameworks such as Keras, TensorFlow, and PyTorch for computer vision, NLP, and sequence modeling.

I can build agentic AI systems and custom agents (e.g. with LangChain) for code assistance, tool use, and autonomous workflows, similar to Cursor-style behavior.

2019 · Business Administration

UNLaM

I built a fintech company from scratch as my final project: business plan, financial projections, regulatory considerations, and go-to-market strategy.

I can manage people and teams: hiring, performance, and coordination aligned with organizational goals.

I can lead financial administration: budgeting, forecasting, cost allocation, and economic and financial modeling for planning and reporting.

I can align technical roadmaps with business goals using project and capital administration from the degree.

I can analyze financial statements, manage risk, and support decision-making with quantitative and process-optimization tools.

I can apply marketing strategies, market research, and positioning to support product and growth decisions in tech and startups.

I can interpret contracts, compliance requirements, and regulatory constraints in business and product contexts.

I can use applied statistics and quantitative methods for forecasting, reporting, and data-driven decisions.

I can optimize processes and allocate resources across teams or projects using cost and operations management.

I can contribute to audit, internal controls, and financial reporting in tech and startup environments.

2012 · Certificate in Advanced English

Cambridge University

I can communicate fluently with US-based teams and stakeholders in meetings, async channels, and written documentation.

I can lead or contribute to technical discussions, code reviews, sprint ceremonies, and RFCs in English with clarity and precision.

I can write clear technical documentation, incident reports, and proposals for distributed and cross-functional audiences.

I can collaborate effectively with Product, Design, and non-engineering partners in English without language barriers.

I can present to customers or partners and handle customer-facing communication when required by the role.

2008 · First Certificate in English

Cambridge University

I can follow technical discussions, stakeholder meetings, and standups in English and contribute to day-to-day collaboration.

I can participate in daily standups, retros, and cross-team conversations in English with confidence at B2 level.

I can write professional emails, tickets, and short documentation in English for remote and distributed teams.

I can engage with English-speaking clients or support when needed and understand requirements and feedback.

I can provide a strong, permanent foundation for further advancement to C1 (CAE) for more complex professional communication.

Education

2024

Computer Engineering

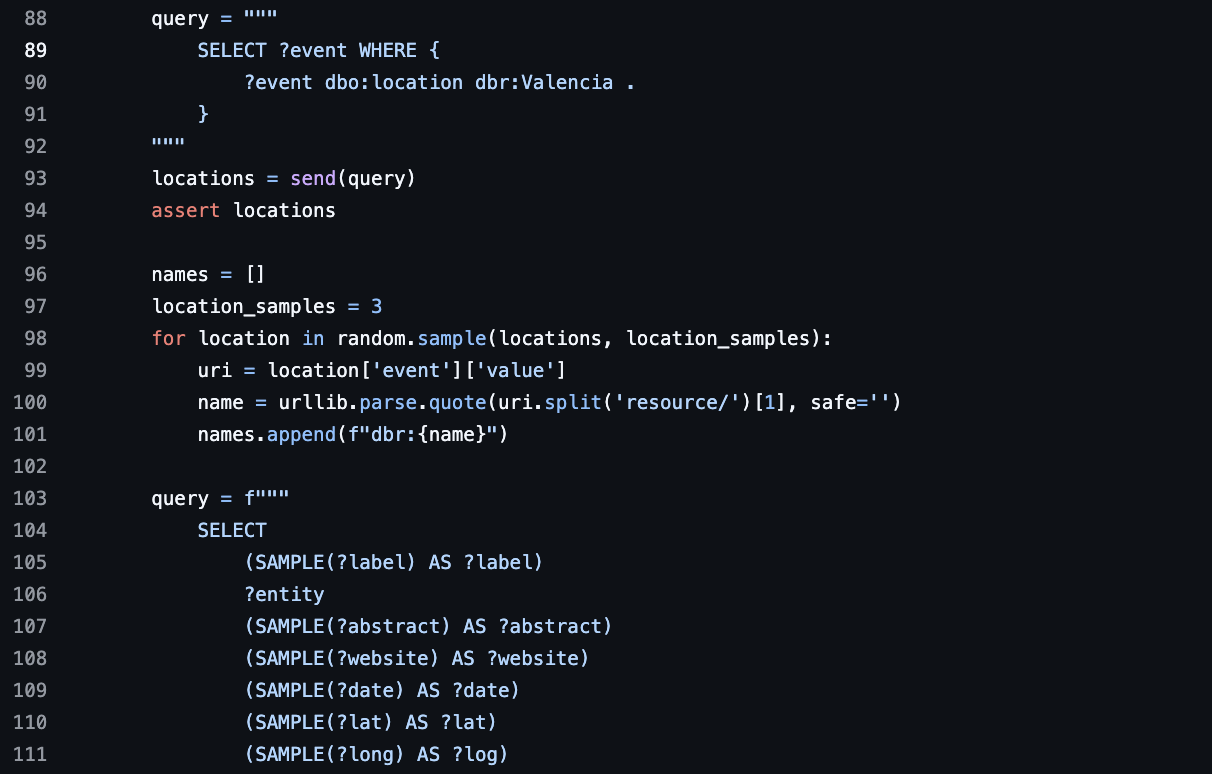

Valencia International UniversityI completed a final project focused on computational geometry, algorithmic analysis, and optimization algorithms in combinatorial space.

PythonAlgorithmsComputational GeometryOptimizationI can design and implement scalable software systems using formal methods, complexity analysis, and architecture patterns.

PythonDjangoI can develop and operate distributed systems with concurrency, parallelism, and database and networking fundamentals.

PostgreSQLKubernetesDockerI can manage the full software lifecycle and deliver projects aligned with business and technical requirements.

CI/CDI can apply systems programming (C, Java, Python, Rust), operating systems, and computer architecture to production systems.

JavaPythonRustI can work across the stack from low-level systems to high-level applications using architecture, operating systems, and multiple languages.

PythonTypeScriptI can reason about algorithmic complexity and choose appropriate data structures and algorithms for performance and maintainability.

AlgorithmsI can write and review technical specifications and documentation grounded in formal methods and engineering practice.

Technical DocumentationI can use testing, refactoring, and design principles to identify and reduce technical debt and improve quality.

Unit TestingIntegration TestingI can apply mathematical and statistical foundations (calculus, algebra, discrete math) to modeling and problem-solving in software systems.

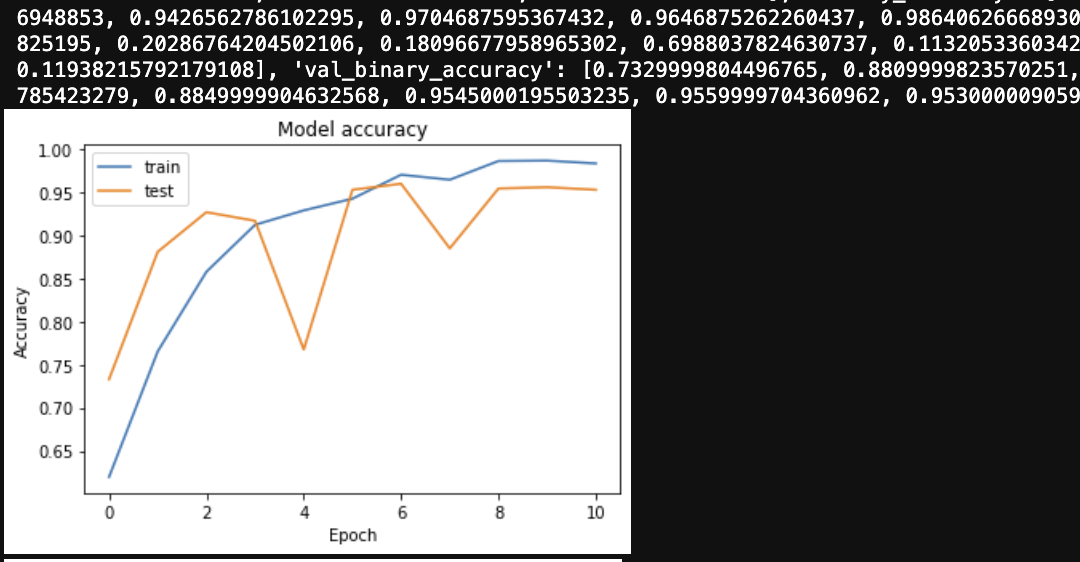

Applied StatisticsI can design and train deep learning models using frameworks such as Keras, TensorFlow, and PyTorch for computer vision, NLP, and sequence modeling.

MLOpsTensorFlowKerasI can build agentic AI systems and custom agents (e.g. with LangChain) for code assistance, tool use, and autonomous workflows, similar to Cursor-style behavior.

AI AgentsLLMsRAG

2019

Business Administration

UNLaMI built a fintech company from scratch as my final project: business plan, financial projections, regulatory considerations, and go-to-market strategy.

Financial ManagementForecastingI can manage people and teams: hiring, performance, and coordination aligned with organizational goals.

Project ManagementTeam ManagementI can lead financial administration: budgeting, forecasting, cost allocation, and economic and financial modeling for planning and reporting.

BudgetingForecastingI can align technical roadmaps with business goals using project and capital administration from the degree.

Project ManagementI can analyze financial statements, manage risk, and support decision-making with quantitative and process-optimization tools.

Risk ManagementFinancial ReportingI can apply marketing strategies, market research, and positioning to support product and growth decisions in tech and startups.

Market ResearchI can interpret contracts, compliance requirements, and regulatory constraints in business and product contexts.

ComplianceI can use applied statistics and quantitative methods for forecasting, reporting, and data-driven decisions.

Applied StatisticsI can optimize processes and allocate resources across teams or projects using cost and operations management.

Process OptimizationI can contribute to audit, internal controls, and financial reporting in tech and startup environments.

AuditingFinancial Reporting

2012

Certificate in Advanced English

Cambridge UniversityI can communicate fluently with US-based teams and stakeholders in meetings, async channels, and written documentation.

Stakeholder CommunicationI can lead or contribute to technical discussions, code reviews, sprint ceremonies, and RFCs in English with clarity and precision.

Technical DocumentationI can write clear technical documentation, incident reports, and proposals for distributed and cross-functional audiences.

Technical DocumentationI can collaborate effectively with Product, Design, and non-engineering partners in English without language barriers.

Stakeholder CommunicationI can present to customers or partners and handle customer-facing communication when required by the role.

Stakeholder Communication

2008

First Certificate in English

Cambridge UniversityI can follow technical discussions, stakeholder meetings, and standups in English and contribute to day-to-day collaboration.

Remote CollaborationI can participate in daily standups, retros, and cross-team conversations in English with confidence at B2 level.

Remote CollaborationI can write professional emails, tickets, and short documentation in English for remote and distributed teams.

Technical DocumentationI can engage with English-speaking clients or support when needed and understand requirements and feedback.

Stakeholder CommunicationI can provide a strong, permanent foundation for further advancement to C1 (CAE) for more complex professional communication.

Remote CollaborationCertifications

2026

Model Context Protocol: Advanced Topics

AnthropicModel Context Protocol (MCP)AnthropicAI AgentsLLMs2025

Google Data Engineer and Cloud Architect Guide

UdemyGoogle Cloud Platform (GCP)Data EngineeringBigQueryDataflow (Apache Beam)Pub/SubCloud Storage (GCS)Cloud SQLDataprocStreaming DataCloud RunCloud FunctionsVPC2024

AWS Solutions Architect Professional

AWSAWS EC2ELBVPCElastic BeanstalkRDSDMSMGNAWS S3AWS DynamoDBAWS LambdaAWS SQSAWS SNSAWS API GatewayAWS IAMAWS Cost ExplorerAWS OrganizationsAWS ElasticCacheAWS CloudFrontAWS Lambda@EdgeAWS Direct ConnectAWS Private LinkAWS DataSyncAWS SnowballAWS Snowcone2024

Data Science: Deep Learning and Neural Networks in Python

UdemyDeep LearningNeural NetworksPythonPyTorchTensorFlowKerasCNNsModel Training2024

Natural Language Processing with Deep Learning in Python

UdemyNatural Language Processing (NLP)Deep LearningTransformersLLMsHugging FaceEmbeddingsText ClassificationNamed Entity Recognition (NER)Vector SearchPython2024

CyberSecurity

UdemySecurityPrivacyRisk AssesmentEncryptionVulnerabilitiesHash FunctionsDigital CertificatesSSLHTTPSFirewallUpdatesPrivilegesSocial EngineeringSecurity DomainsMAC AddressPhysical IsolationVirtualization2023

Networking Fundamentals

UdemyTCP/IP ModelIP AddressingIP SubnettingIPv4IPv6DomainsTCPUDPWiresharkVLANSpanning TreePacket TracerRouting AlgorithmsStatic RoutingDHCPDNSVLSMNTPSNMPSyslogSecurityTroubleshootingVoIPACLsNATOSPFIPSecWi-Fi2022

Data Structures & Algorithms

UdemyRecursionTime ComplexitySpace ComplexityArraysSetsMatricesLinked ListsDouble Linked ListsCircular Linked ListsStacksQueuesBinary TreeBinary HeapHash TablesBubble SortSelection SortInsertion SortBucket SortMerge SortQuick SortHeap SortGraphsShortest PathDisjoint SetGreedy AlgorithmsDivide and ConquerBell Dynamic Programming2022

Docker & Kubernetes

UdemyDockerDockerfileContainer LifecycleLogsVolumesNetworkingDocker-ComposeDockerHubAWS ECRCI/CDAWS Elastic BeanstalkAWS ECSKubernetesEKS2021

Deep Learning Specialization

DeepLearning.AINeural NetworksBinary ClassificationLogistic RegressionGradient DescentDerivativesNumPyKerasTensorFlowBias vs VarianceRegularizationDropoutVanishing GradientExploding GradientAdam OptimizationXavier InitializationComputer VisionEdge DetectionPaddingStridesConvolutional LayerPooling LayerResNet ArchitectureMobileNet ArchitectureData AugmentationTransfer LearningObject DetectionBounding Box PredictionYOLO AlgorithmU-NetFace RecognitionSequence ModelsRecurrent Neural NetworksGRULSTMWord EmbeddingsGloVe Word VectorsWord2VecSentiment ClassificationBeam SearchAttention ModelSpeech RecognitionTransformers2021

Database Architecture, Scale and NoSQL with Elasticsearch

University of MichiganCourseraACID vs BASEElasticsearchMappingsAnalyzersNormalizersTasksAliasesSettingsShardsReplicas2021

Graph Theory

UdemyGraph AlgorithmsAlgorithmsData StructuresShortest-Path AlgorithmsDijkstra's AlgorithmGraph AnalyticsGraph DatabasesPathfindingCombinatorial OptimizationGraph Theory2021

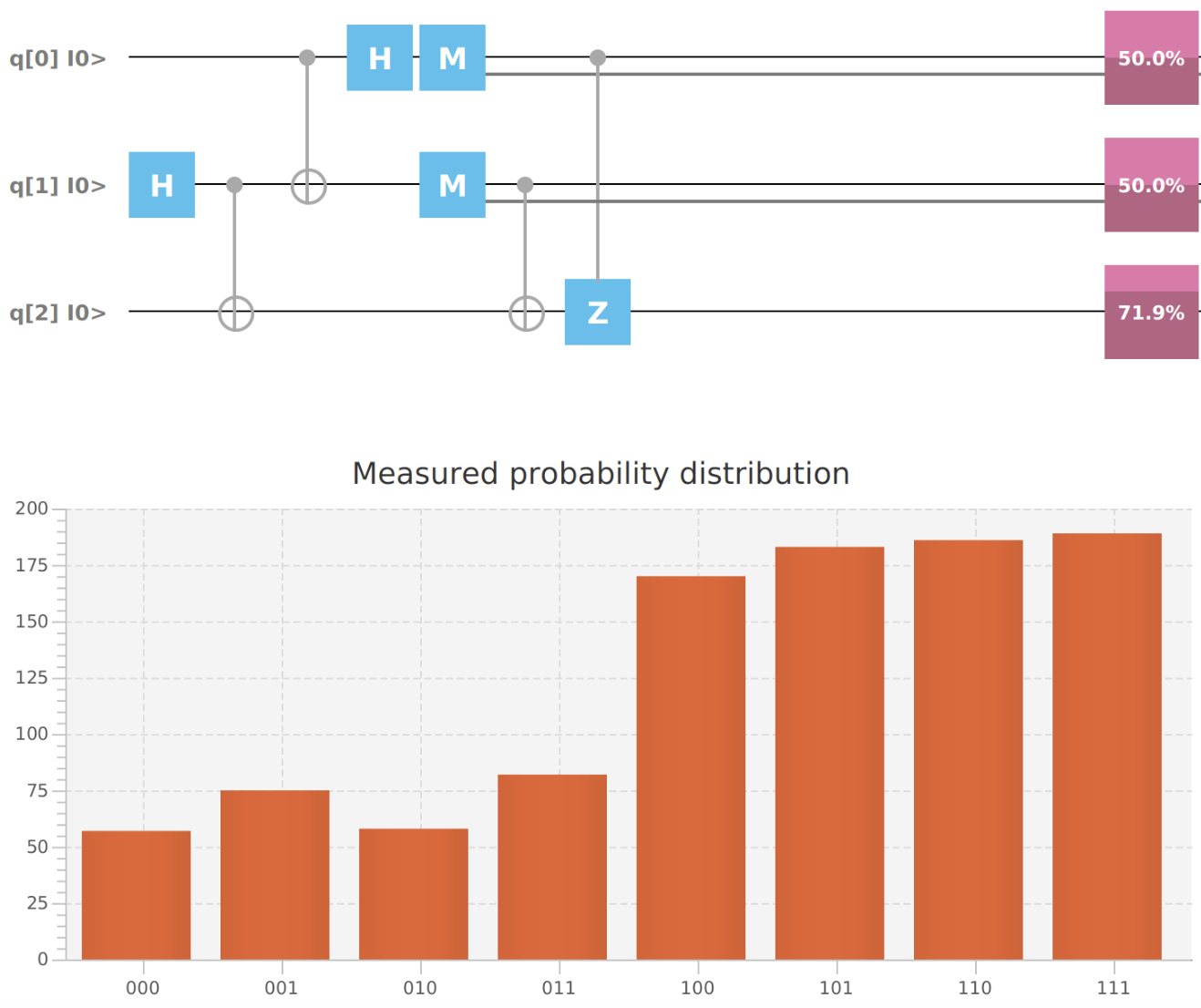

Introduction to Quantum Computing Course

IBM QuantumQuantum MechanicsQiskitQuantum AlgorithmsIBM Quantum ExperienceLinear AlgebraCalculusGeometry2021

AWS Fundamentals Specialization

Amazon Web ServicesCourseraAWSIAMVPCEC2S3RDSDynamoDBLambdaCloudWatchSecurityRoute 53CloudTrail2021

Big Data: Hadoop and Spark

UdemyHadoopHDFSMap ReducePigHiveHBaseSqoopFlumeOozieScalaSparkSpark SQLKafkaSpark Streaming2021

Master in Applied Statistics

Euroinnova Business SchoolDescriptive StatisticsStatistical distributionsIBM SPSSMaximum likelihood methodExpectation maximiatin algorithmConfidence intervalsHypothesis testingFeature engineeringLinear regression modelsBivariate regression modelsMultivariate regression modelsPolinomial regression modelsColinearityMulticolinearityLog-linear ModelsAutocorrelationF-TestOutlier detectionEconometricsBiostatisticsTime series analysisStochastic processesMarkov processesNon-parametric tests2020

AI for Medical Diagnosis

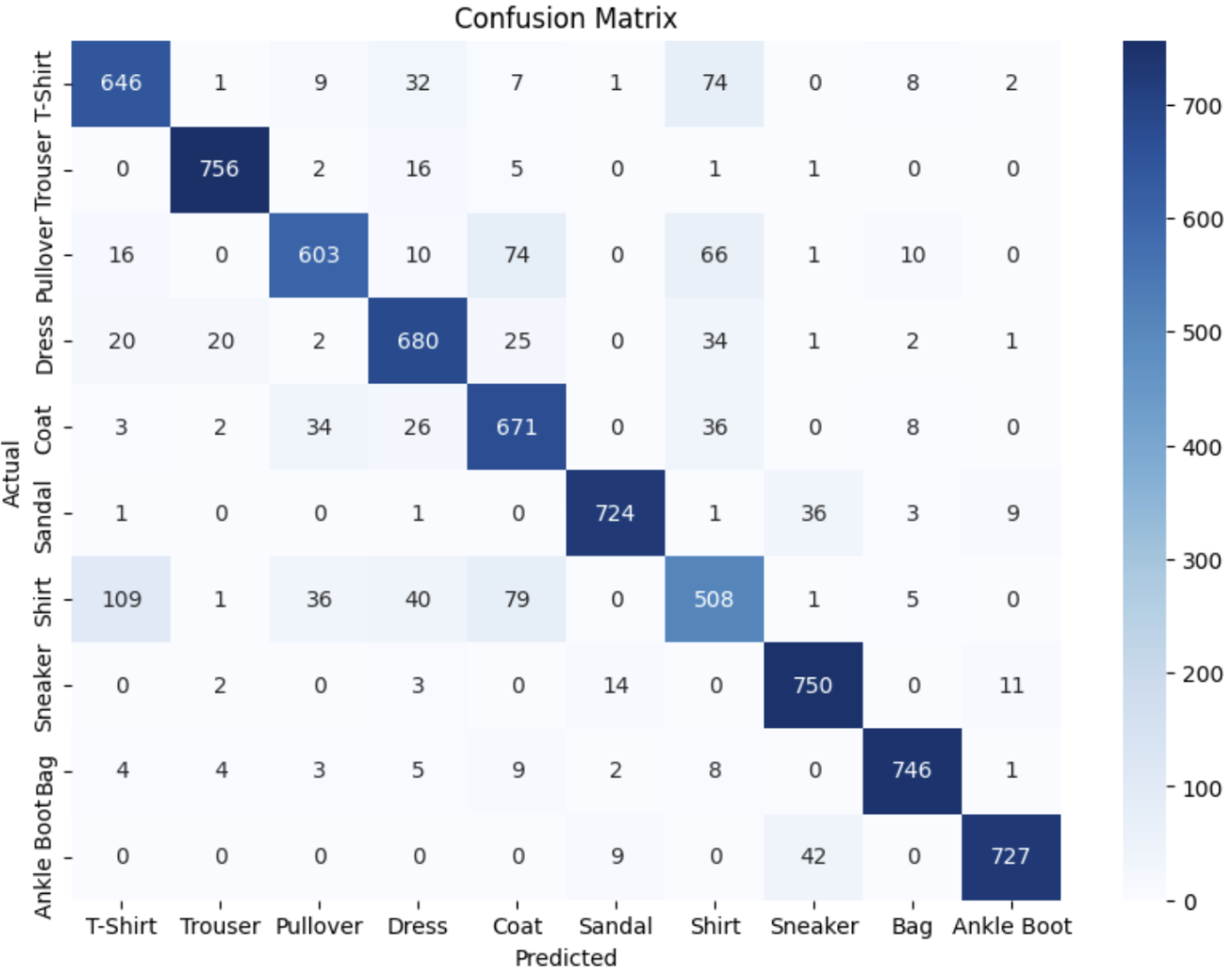

CourseraNumPyNeural NetworksMedical Image DiagnosisMRI DataImage ClassificationImage InbalanceImage SegmentationData AugmentationBinary Cross Entropy Loss FunctionSensitivitySpecificityPrevalncePPVNPVConfusion MatrixROC CurveCNN ArchitectureU-Net2020

Neural Networks & Deep Learning

CourseraLogistic RegressionCost FunctionsActivation FunctionsGradient DescentVectorizationParameters Initialization2020

Modern Deep Learning in Python

Deep Learning AINeural NetworkGradient DescentStochastic Gradient DescentNesterov MomentumGrid SearchVanishing GradientsExploding GradientsTensorflowTheanoKerasPyTorchEnsemblesDropoutBatch Normalization2020

Recommender System and Deep Learning in Python

UdemyUser-User Collaborative FilteringItem-Item Collaborative FilteringMatrix FactorizationSingle Value DecompositionPageRankKerasTensorFlowAutoencodersResidual LearningApache Spark2020

AWS Lambda & Serverless Architecture

UdemyServerless FrameworkAWS LambdaJWT TokensAuthorizersAWS API GatewayAWS Code CommitAWS Code PipelineAWS Code BuildAWS DynamoDBSwaggerCD/CI2019

ReactJS & Redux

UdemyReactFormsComponentsHooksAxiosreact-routerreact-reduxredux-sagaAuthenticationFirebaseUnit TestingWebpackNext.jsAnimations2019

Machine Learning with Python

UdemyData ProcessingLinear RegressionPolynomial RegressionSVRDecision TreesRandom ForestK-ClusteringHierarchical ClusteringK-Fold Cross ValidationParameter TuningGrid Search2019

Natural Language Processing with Python

UdemyPythonnltkSpaCyTokenizationStemmingLemmatizationStop WordsPOS TaggingNERText ClassificationScikit-LearnConfusion MatrixSemantic AnalysisSntiment AnalysisWord VectorizationTopic ModelingText SynthesisChat BotPyPDF2Regular Expressions2015

IBM IT Specialist in Services and Infrastructure

IBMWeb DevelopmentPerlDatasource IntegrationSQLServer AdministrationNetworkingDatabase AdministrationStorage AdministrationVirtualizationIBM AIXRHELOracle SolarisSANNASGFPS2LVMWebSphereLDAPSSO2010

IBM AIX 5 Basics (Q1313) & Administration (Q1314)

IBMFile SystemPermissionsProcessesServicesShell ScriptingArchivingcronInstallationVirtualizationDevicesLVMRun LevelsSoftware ManagementCertifications

2026 · Claude with Amazon Bedrock · Anthropic

2026 · Introduction to agent skills · Anthropic

2026 · Model Context Protocol: Advanced Topics · Anthropic

2026 · Claude 101 · Anthropic

2025 · Google Data Engineer and Cloud Architect Guide · Udemy

2024 · Rust Programming · Udemy

2024 · AWS Solutions Architect Professional · AWS

2024 · Data Science: Deep Learning and Neural Networks in Python · Udemy

2024 · Natural Language Processing with Deep Learning in Python · Udemy

2024 · The Complete Node.js Developer Course · Udemy

2024 · CyberSecurity · Udemy

2023 · Networking Fundamentals · Udemy

2022 · Data Structures & Algorithms · Udemy

2022 · Docker & Kubernetes · Udemy

2021 · Deep Learning Specialization · DeepLearning.AI

2021 · Database Architecture, Scale and NoSQL with Elasticsearch · University of Michigan · Coursera

2021 · Graph Theory · Udemy

2021 · Configuring and Deploying VPCs with Multiple Subnets · AWS

2021 · Introduction to Quantum Computing Course · IBM Quantum

2021 · AWS Fundamentals Specialization · Amazon Web Services · Coursera

2021 · Big Data: Hadoop and Spark · Udemy

2021 · Master in Applied Statistics · Euroinnova Business School

2021 · Full Stack development with Django · Udemy

2021 · SQL: Data Reporting & Analysis · LinkedIn

2020 · AI for Medical Diagnosis · Coursera

2020 · Deep Learning with Keras · CGFIUBA

2020 · Neural Networks & Deep Learning · Coursera

2020 · Modern Deep Learning in Python · Deep Learning AI

2020 · Recommender System and Deep Learning in Python · Udemy

2020 · AWS Lambda & Serverless Architecture · Udemy

2019 · ReactJS & Redux · Udemy

2019 · Web Scraping with Python · Udemy

2019 · Machine Learning with Python · Udemy

2019 · Natural Language Processing with Python · Udemy

2015 · IBM IT Specialist in Services and Infrastructure · IBM

2010 · IBM AIX 5 Basics (Q1313) & Administration (Q1314) · IBM

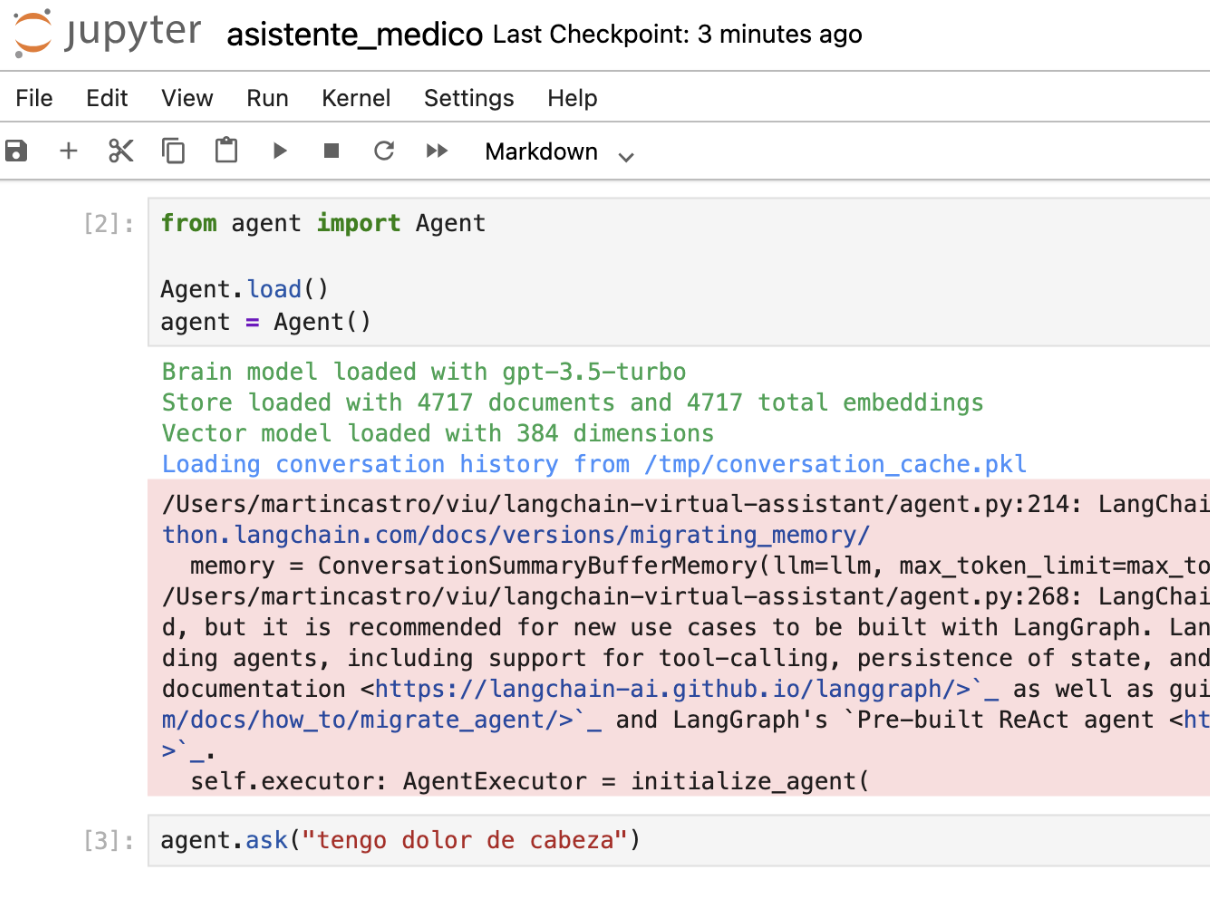

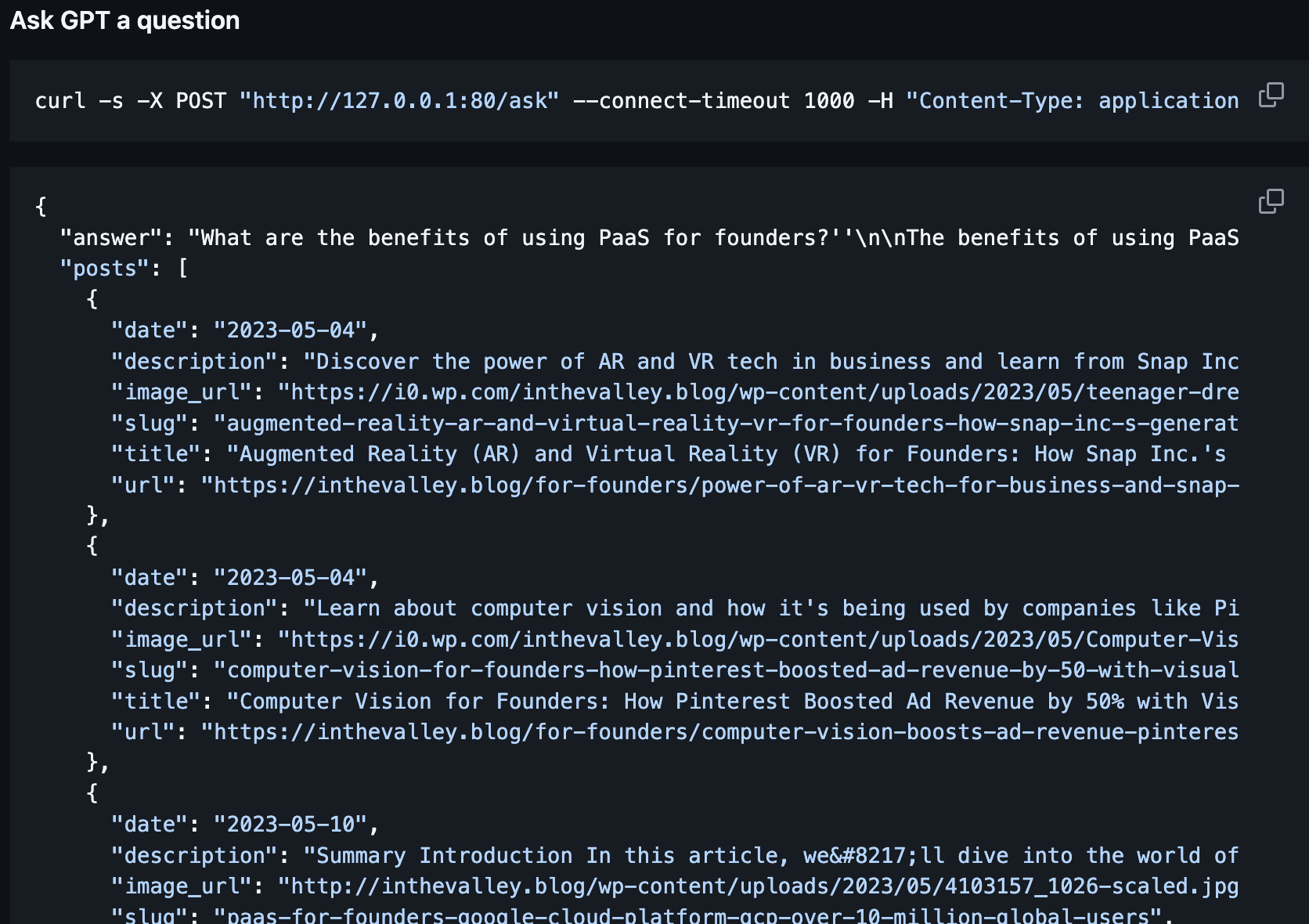

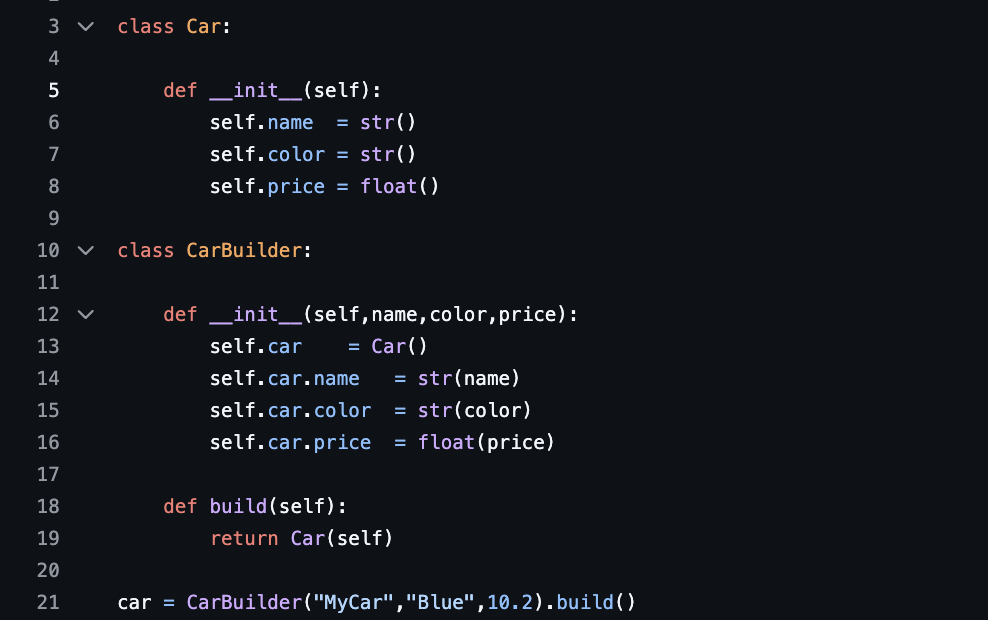

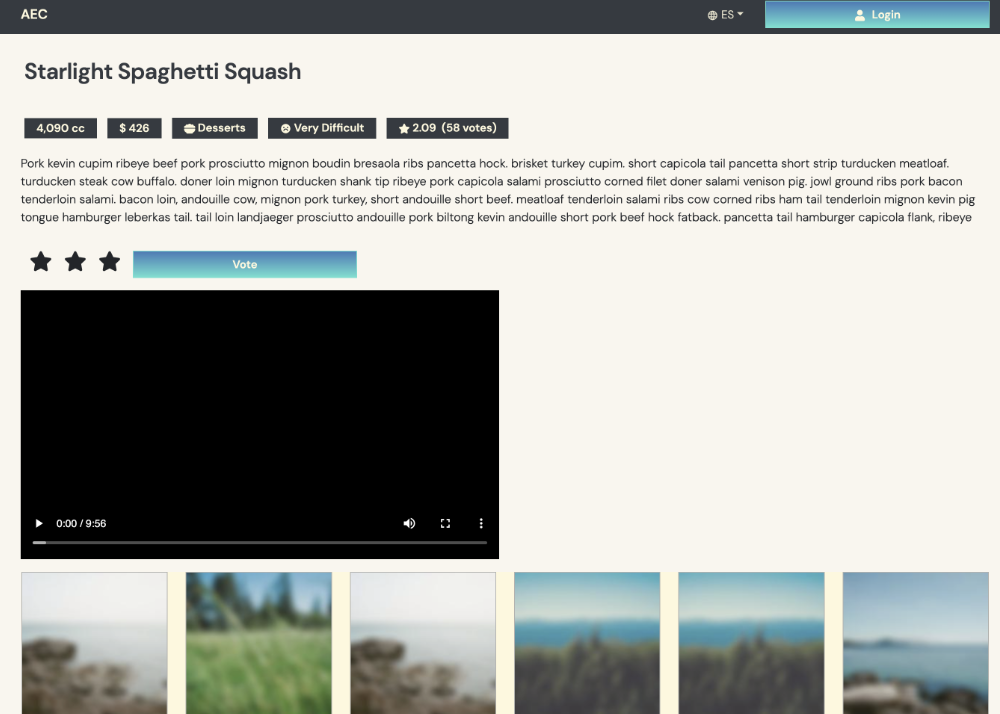

AI Agents

This project delivers an AI-powered virtual assistant built with LangChain and RAG, enabling context-aware conversations and document analysis powered by OpenAI's GPT models. It allows users to query documents and hold coherent, context-rich dialogues with intelligent retrieval and generation capabilities.

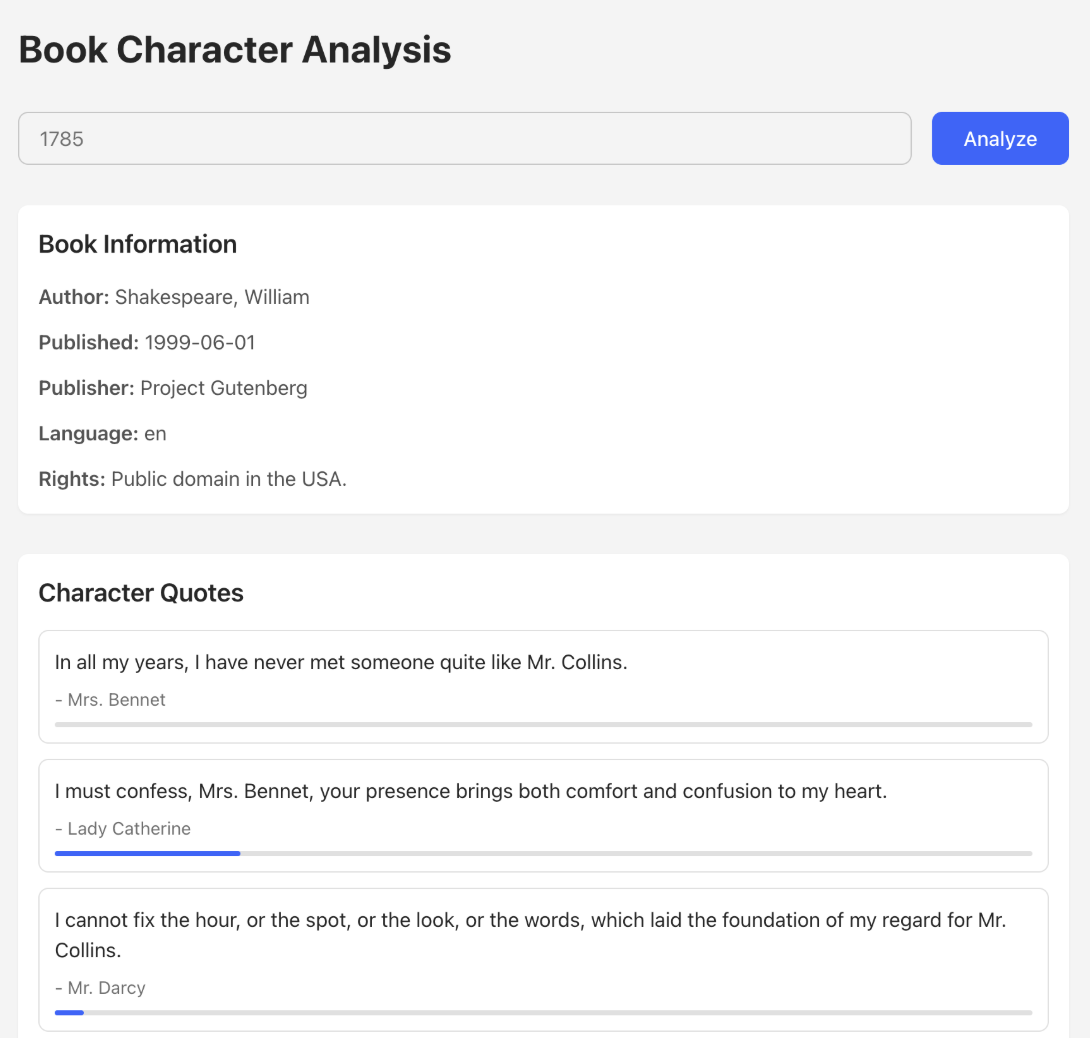

LangChainPythonRAGOpenAIThis project is a React-based single page application, styled using TailwindCSS, that allows users to explore and analyze character interactions in Project Gutenberg e-books. The application leverages a Language Learning Model (LLM) to process the text of e-books and visually represent character interactions through an interactive network graph. The application is deployed on AWS using the AWS Cloud Development Kit (CDK), which automates the setup of AWS Lambda for backend processing and API Gateway for handling requests efficiently.

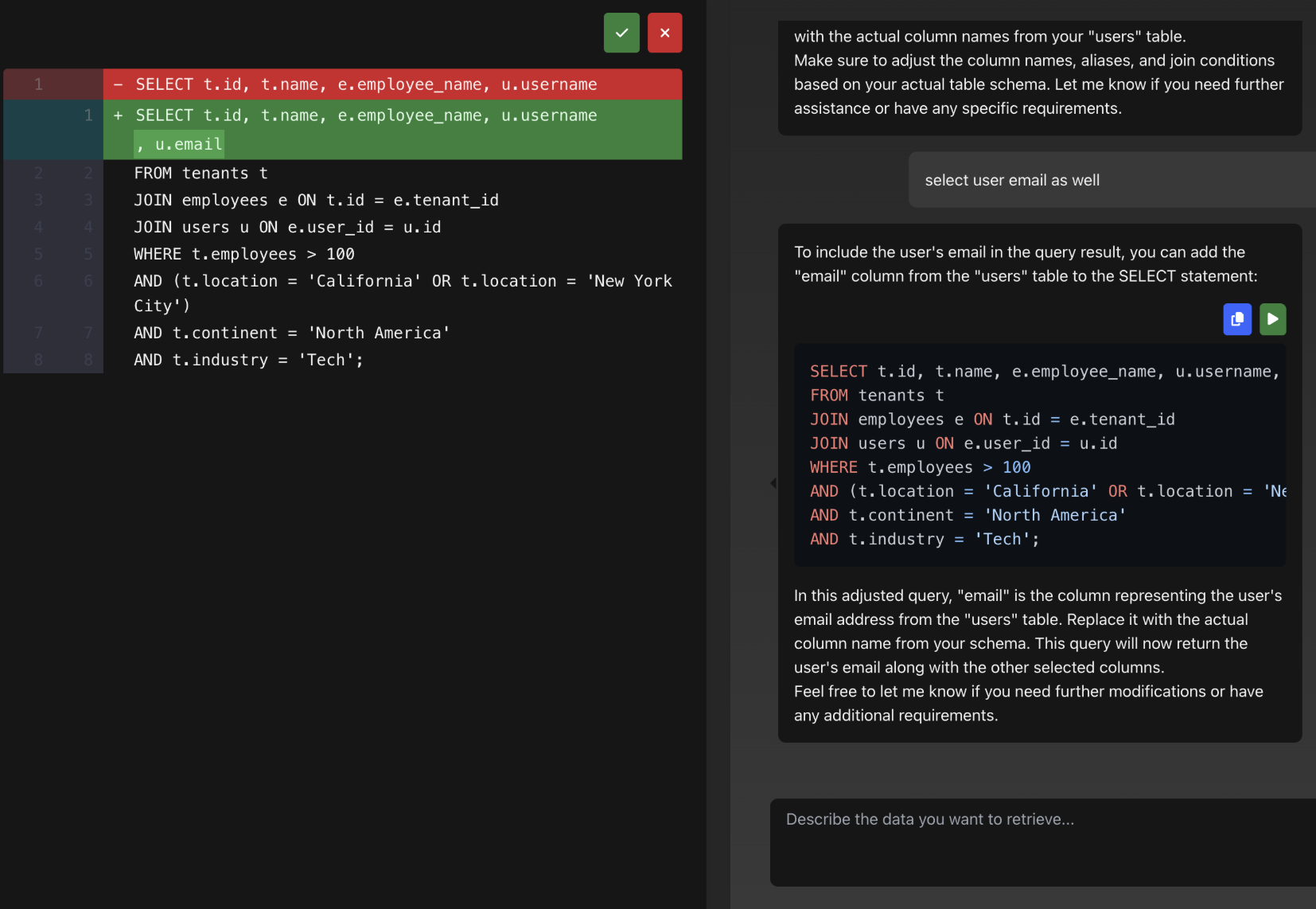

ReactNode.jsTypeScriptOpenAI APIReact FlowAWSThis project is a tool that helps you create SQL queries using AI technology. It has a web interface that works like Cursor, making it easy to use. The system uses two different AI agents that work together: one agent talks with you to understand what you need, and another agent creates the actual SQL code.